What Is a Transformer Model?

A transformer model is a deep learning architecture that processes sequential data by attending to all positions in the input simultaneously, rather than reading tokens one at a time. It relies on a mechanism called self-attention to weigh the relevance of every element in a sequence against every other element, producing context-aware representations in parallel.

Introduced in the 2017 paper "Attention Is All You Need" by Vaswani et al. at Google, the transformer replaced recurrent neural networks as the dominant architecture for sequence tasks. RNNs processed tokens sequentially, creating bottlenecks for long sequences and making parallelization difficult.

The transformer eliminated recurrence entirely, enabling training on much larger datasets with significantly greater hardware efficiency.

The transformer architecture underpins most major language models and many other AI systems built since its introduction. Models like BERT, GPT-3, and their successors are all transformer models.

The architecture has expanded well beyond text to power advances in computer vision, protein folding, speech recognition, and multimodal reasoning.

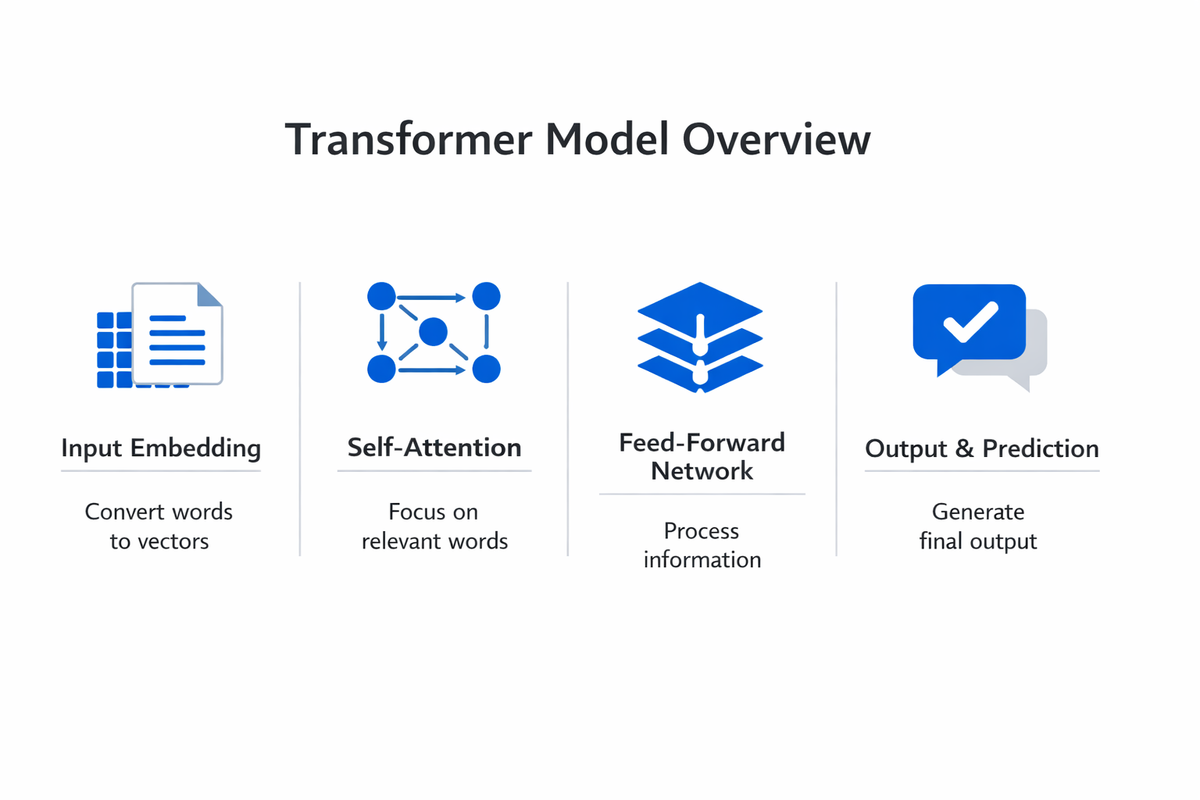

How Transformers Work

The original transformer model uses an encoder-decoder structure. The encoder reads the full input sequence and produces a set of continuous representations. The decoder generates the output sequence one token at a time, referencing both its own previous outputs and the encoder's representations.

Self-Attention Mechanism

Self-attention is the defining innovation of the transformer model. For each token in a sequence, the mechanism computes three vectors: a query, a key, and a value. The query represents what the token is looking for. The key represents what the token offers to other tokens. The value carries the actual information to be passed forward.

Attention scores are calculated by taking the dot product of a token's query with the key of every token in the sequence (including its own), dividing by the square root of the key dimension, and applying a softmax function so that the weights for each token sum to one. The resulting weights determine how much each token's value contributes to the current token's updated representation. This operation runs across all tokens in parallel, allowing the model to capture relationships regardless of distance in the sequence.
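The computation described above can be sketched in a few lines. This is a minimal illustration in plain Python (no learned projections, no batching), and the function names `softmax` and `scaled_dot_product_attention` are chosen here for illustration rather than taken from any library.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def scaled_dot_product_attention(queries, keys, values):
    """queries and keys are lists of d_k-dim vectors; values is a list of d_v-dim vectors."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Score this query against every key (including its own), scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # The updated representation is a weighted sum of the value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# Two toy tokens whose queries and keys are the same vectors.
tokens = [[1.0, 0.0], [0.0, 1.0]]
outputs, weights = scaled_dot_product_attention(tokens, tokens, [[1.0, 2.0], [3.0, 4.0]])
```

Each row of `weights` sums to one, and in this toy input each token places more weight on itself than on the other token because its query matches its own key most closely.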

In the sentence "The cat sat on the mat because it was tired," self-attention allows the model to associate "it" with "cat" by computing high attention scores between those positions. Prior architectures like RNNs struggled with such long-range dependencies because information decayed as it passed through sequential processing steps.

Multi-Head Attention

Rather than computing a single set of attention scores, transformer models use multi-head attention. The model runs several attention operations in parallel, each with independently learned query, key, and value projections. Each "head" can learn to focus on different types of relationships: one head might capture syntactic dependencies, another semantic similarity, and another positional patterns.

The outputs from all heads are concatenated and projected through a linear layer to produce the final attention output. Multi-head attention gives the transformer model the capacity to simultaneously represent multiple types of relationships within the same sequence.
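A rough sketch of the project, attend, concatenate, and project-again flow follows. It assumes random matrices as stand-ins for the learned projections, and all names (`multi_head_attention`, `random_matrix`, the toy sizes) are illustrative rather than part of any framework.

```python
import math
import random

def matmul(a, b):
    """Multiply matrix a (n x m) by matrix b (m x p), both as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(q, k, v):
    """Scaled dot-product attention for a single head."""
    d_k = len(k[0])
    out = []
    for qi in q:
        weights = softmax([sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d_k) for kj in k])
        out.append([sum(w * vj[c] for w, vj in zip(weights, v)) for c in range(len(v[0]))])
    return out

def random_matrix(rows, cols, rng):
    """Stand-in for a learned weight matrix (assumption: real weights come from training)."""
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

def multi_head_attention(x, heads, w_out):
    """x: (n x d_model); heads: list of (W_q, W_k, W_v); w_out: (h * d_head x d_model)."""
    head_outputs = [attention(matmul(x, wq), matmul(x, wk), matmul(x, wv))
                    for wq, wk, wv in heads]
    # Concatenate the per-head outputs along the feature dimension.
    concat = [sum((head[i] for head in head_outputs), []) for i in range(len(x))]
    # Final linear projection back to d_model.
    return matmul(concat, w_out)

rng = random.Random(0)
d_model, num_heads, d_head = 8, 2, 4
heads = [tuple(random_matrix(d_model, d_head, rng) for _ in range(3))
         for _ in range(num_heads)]
w_out = random_matrix(num_heads * d_head, d_model, rng)
x = random_matrix(3, d_model, rng)  # three toy tokens
y = multi_head_attention(x, heads, w_out)
```

The output `y` has the same shape as the input (three tokens, each of width `d_model`), which is what allows attention sublayers to be stacked.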

Positional Encoding

Because self-attention processes all tokens simultaneously, the transformer model has no inherent sense of word order. Positional encodings solve this by injecting information about each token's position in the sequence. The original transformer used sinusoidal functions of varying frequencies to generate positional vectors, which are added to the token embeddings before they enter the encoder or decoder.
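As a sketch of the sinusoidal scheme, the function below fills even dimensions with a sine and odd dimensions with a cosine of the same angle, with frequencies that decrease across the vector. The base of 10000 follows the original paper; the function name is illustrative and `d_model` is assumed to be even.

```python
import math

def sinusoidal_positional_encoding(seq_len, d_model):
    """Return a seq_len x d_model table of positional vectors (d_model assumed even)."""
    table = []
    for pos in range(seq_len):
        row = []
        for i in range(0, d_model, 2):
            # Each sine/cosine pair shares one frequency; frequency falls as i grows.
            angle = pos / (10000 ** (i / d_model))
            row.append(math.sin(angle))  # even dimension
            row.append(math.cos(angle))  # odd dimension
        table.append(row)
    return table

pe = sinusoidal_positional_encoding(seq_len=4, d_model=4)
# Position 0 always encodes as [0.0, 1.0, 0.0, 1.0] because sin(0) = 0 and cos(0) = 1.
```

In a full model, row `pos` of this table would be added element-wise to the embedding of the token at position `pos`.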

Later transformer variants introduced learned positional embeddings, relative position encodings, and rotary position embeddings (RoPE). Each approach strikes a different balance among representing absolute position, representing relative distance between tokens, and generalizing to sequence lengths not seen during training.

Feed-Forward Networks and Layer Normalization

After each attention sublayer, the transformer model passes token representations through a position-wise feed-forward network. This consists of two linear transformations with a nonlinear activation function between them. The feed-forward network operates independently on each token, adding the capacity to transform representations beyond what attention alone achieves.

Residual connections wrap around both the attention and feed-forward sublayers, adding the sublayer's input directly to its output. Layer normalization then stabilizes the resulting values.

These design choices are critical for training deep transformer stacks, preventing gradient degradation and enabling models with dozens or hundreds of layers to converge during training through backpropagation.
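The feed-forward sublayer with its residual connection and normalization can be sketched for a single token vector as follows. This assumes a ReLU activation and the post-normalization ordering described above (add the input, then normalize), and it omits the learned scale and shift that layer normalization usually carries; all function names are illustrative.

```python
import math

def layer_norm(x, eps=1e-5):
    """Normalize a vector to zero mean and unit variance (no learned scale or shift)."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]

def linear(x, weight, bias):
    """Apply x @ weight + bias, where weight has shape (in_dim x out_dim)."""
    return [sum(x[i] * weight[i][j] for i in range(len(x))) + bias[j]
            for j in range(len(bias))]

def feed_forward(x, w1, b1, w2, b2):
    """Two linear transformations with a ReLU between them (ReLU is an assumption)."""
    hidden = [max(0.0, h) for h in linear(x, w1, b1)]
    return linear(hidden, w2, b2)

def ffn_sublayer(x, w1, b1, w2, b2):
    """Residual connection followed by layer normalization: LayerNorm(x + FFN(x))."""
    y = feed_forward(x, w1, b1, w2, b2)
    return layer_norm([a + b for a, b in zip(x, y)])
```

Because the function takes one token vector at a time, applying it to every token in a sequence with the same weights gives the position-wise behavior the text describes.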

Encoder and Decoder Stacks

The encoder stack consists of multiple identical layers, each containing a multi-head self-attention sublayer followed by a feed-forward sublayer. Every token in the input attends to every other token, producing rich bidirectional representations.

The decoder stack mirrors this structure but adds a cross-attention sublayer between the self-attention and feed-forward components. In cross-attention, decoder tokens attend to the encoder's output representations. The decoder's self-attention is also masked so that each position can attend only to itself and earlier positions, preserving the autoregressive property needed for sequential generation.
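The masking step can be illustrated with a small sketch: scores for future positions are set to negative infinity before the softmax, so they receive exactly zero weight. The function name is hypothetical, and the input is assumed to be a square matrix of raw scores with one row per query position.

```python
import math

def causal_attention_weights(scores):
    """scores: n x n raw attention scores, where row i belongs to query position i."""
    n = len(scores)
    weights = []
    for i in range(n):
        # Positions j > i lie in the future; -inf makes their softmax weight zero.
        masked = [scores[i][j] if j <= i else float("-inf") for j in range(n)]
        m = max(masked)
        exps = [math.exp(s - m) for s in masked]
        total = sum(exps)
        weights.append([e / total for e in exps])
    return weights

# With equal raw scores, each position spreads its weight evenly over itself and the past.
w = causal_attention_weights([[0.0] * 3 for _ in range(3)])
# w[0] is [1.0, 0.0, 0.0]; w[1] is [0.5, 0.5, 0.0]
```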

Key Transformer Architectures

The original transformer model spawned three major architectural families, each optimizing for different task profiles.

Encoder-Only Models

Encoder-only transformer models use just the encoder stack with full bidirectional self-attention. Every token can attend to every other token, making these models strong at understanding and representing input text.

BERT is the defining encoder-only transformer model. It trains using masked language modeling, where random tokens in the input are hidden and the model predicts them from surrounding context. This bidirectional pre-training produces representations well suited for classification, entity recognition, and extractive question answering.
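A simplified sketch of how masked language modeling inputs might be prepared is shown below. It assumes a 15% masking rate and a `[MASK]` placeholder token, and it leaves out refinements used in practice (such as occasionally substituting a random token instead of the placeholder); the function name and the whitespace tokenization are illustrative only.

```python
import random

def mask_tokens(tokens, mask_prob=0.15, mask_token="[MASK]", seed=0):
    """Hide a random subset of tokens and record the originals as prediction targets."""
    rng = random.Random(seed)
    masked, labels = [], []
    for tok in tokens:
        if rng.random() < mask_prob:
            masked.append(mask_token)
            labels.append(tok)    # the model must predict this token
        else:
            masked.append(tok)
            labels.append(None)   # no loss is computed at this position
    return masked, labels

sentence = "the cat sat on the mat because it was tired".split()
masked, labels = mask_tokens(sentence, mask_prob=0.3)
```

The model sees `masked` as input and is trained only on positions where `labels` is not `None`, using context on both sides of each hidden token.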

Variants like RoBERTa, ALBERT, and DeBERTa refined BERT's training procedures and architectural details, pushing benchmark scores higher while maintaining the encoder-only design. Encoder-only models remain the standard choice when the task requires analyzing existing text rather than generating new text.

Decoder-Only Models

Decoder-only transformer models use just the decoder stack with causal (left-to-right) attention masking. Each token can only attend to tokens that precede it, making these models naturally suited for text generation and language modeling.

The GPT series defines this category. GPT-3, with 175 billion parameters, demonstrated that scaling decoder-only transformer models produces strong few-shot and zero-shot performance across a wide range of tasks without task-specific fine-tuning. GPT-4 and subsequent models extended this scaling approach further, integrating multimodal inputs and improved reasoning.

Decoder-only models now dominate generative AI applications, including conversational assistants, code generation, creative writing, and automated summarization. Their autoregressive design makes them flexible general-purpose systems that can be prompted or fine-tuned for virtually any text output task.

Encoder-Decoder Models

Encoder-decoder transformer models use the full original architecture. The encoder processes the input, and the decoder generates the output while attending to the encoder's representations through cross-attention.

T5 (Text-to-Text Transfer Transformer) is the most prominent example. T5 frames every NLP task as a text-to-text problem: classification, summarization, translation, and question answering all involve converting an input text into an output text. This unified framing simplifies multi-task training and allows a single model to handle diverse workloads.

BART, mBART, and MarianMT are other notable encoder-decoder transformer models. Machine translation, document summarization, and tasks that require both understanding input and generating structured output align well with this architecture.

| Type | Description | Best For |
|---|---|---|
| Encoder-Only Models | Use just the encoder stack with full bidirectional self-attention. | Classification, entity recognition, extractive question answering |
| Decoder-Only Models | Use just the decoder stack with causal (left-to-right) attention masking. | Conversational assistants, code generation, creative writing, summarization |
| Encoder-Decoder Models | Use the full original architecture, with the decoder attending to the encoder's output through cross-attention. | Machine translation, document summarization, structured output generation |

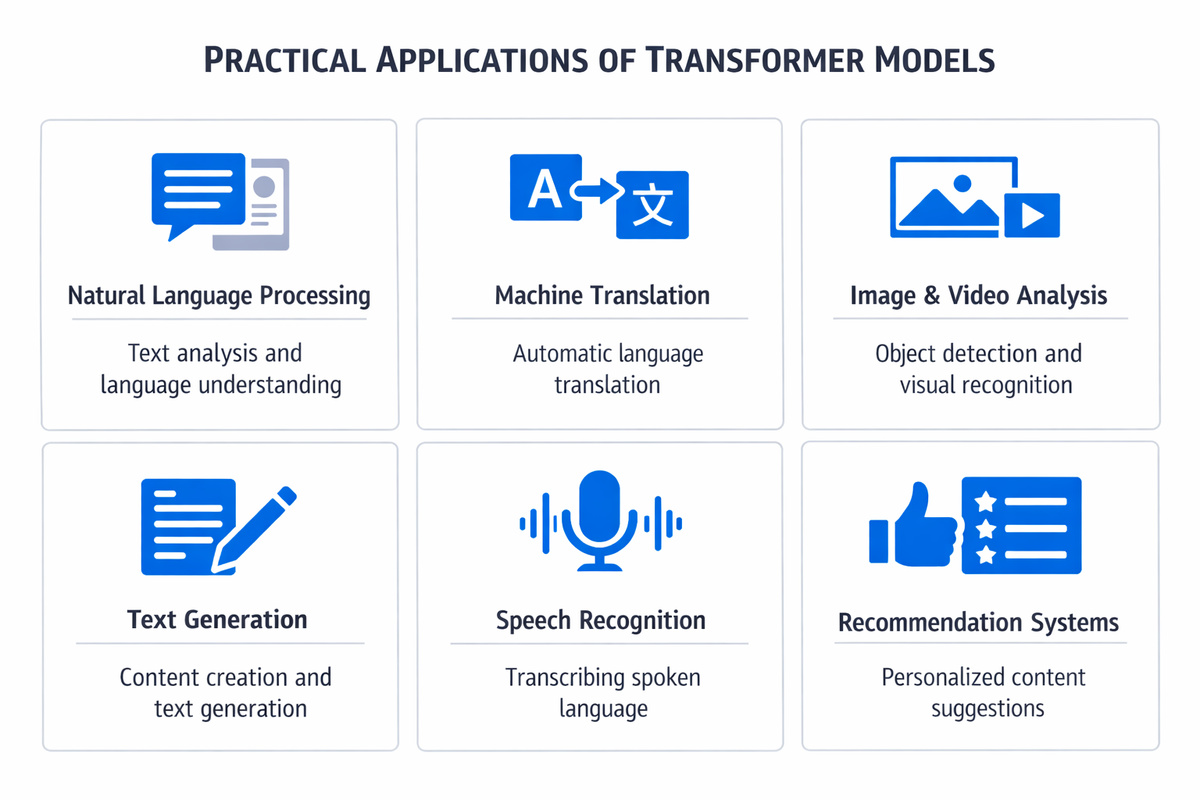

Transformer Use Cases

Natural Language Processing

Transformer models power nearly every modern natural language processing system. Machine translation was the original application, with transformer-based systems replacing phrase-based and RNN-based models at Google, Meta, and other organizations. Translation quality improved significantly because self-attention captures long-range grammatical dependencies that span entire sentences.

Sentiment analysis, text classification, named entity recognition, question answering, and text summarization all rely on transformer models in production. The pre-train-then-fine-tune workflow allows practitioners to adapt a single pre-trained transformer model to any of these downstream tasks with relatively small labeled datasets.

Computer Vision

Vision Transformers (ViT) apply the transformer model architecture to image data by dividing an image into fixed-size patches, flattening each patch into a vector, and treating these vectors as tokens in a sequence. Self-attention then captures relationships between patches across the entire image.
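The patching step can be sketched on a toy single-channel image stored as nested lists. The function name is illustrative, and the image height and width are assumed to be divisible by the patch size; a real ViT would also apply a learned linear projection and add positional information to each flattened patch.

```python
def image_to_patches(image, patch_size):
    """Split an H x W image into flattened patch_size x patch_size patches, row by row."""
    height, width = len(image), len(image[0])
    patches = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            # Flatten the patch into one vector, which then plays the role of a token.
            patch = [image[r][c]
                     for r in range(top, top + patch_size)
                     for c in range(left, left + patch_size)]
            patches.append(patch)
    return patches

# A 4 x 4 image with pixel values 0..15 becomes four patches of four values each.
image = [[row * 4 + col for col in range(4)] for row in range(4)]
patches = image_to_patches(image, patch_size=2)
# patches[0] is [0, 1, 4, 5], the top-left 2 x 2 block
```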

ViT and its successors have matched or exceeded convolutional neural networks on image classification benchmarks, particularly when pre-trained on large datasets. Detection Transformers (DETR) extend this approach to object detection. Swin Transformers introduce hierarchical feature maps that make the architecture practical for tasks like semantic segmentation and dense prediction.

Multimodal Applications

Vision-language models pair a transformer-based image encoder with a transformer text encoder or decoder, enabling systems that describe images, answer visual questions, and retrieve or generate images from text prompts. Models like CLIP, Flamingo, and GPT-4V use transformer architectures to relate visual and linguistic representations; CLIP, for example, aligns them in a shared embedding space.

Multimodal transformer models are driving advances in robotics, autonomous driving, medical image analysis, and creative tools. Their ability to process and relate information across data types makes them uniquely versatile compared to architectures designed for a single modality.

Code Generation and Software Engineering

Transformer models trained on code repositories can generate functions, complete code snippets, identify bugs, and translate between programming languages. Codex, CodeLlama, and StarCoder are decoder-only transformer models trained on large code corpora; Codex powered the original GitHub Copilot, and similar models drive other coding assistants.

These models reduce time spent on routine coding tasks and help less experienced developers write functional code more quickly. Code generation represents one of the highest-impact practical applications of the transformer model architecture in professional settings.

Speech and Audio Processing

Whisper, a transformer-based model from OpenAI, handles speech recognition across multiple languages with strong accuracy. Transformer models also power text-to-speech synthesis, music generation, and audio classification systems.

The self-attention mechanism adapts well to audio spectrograms and waveform representations, capturing temporal patterns and speaker characteristics that earlier architectures handled less effectively.

Challenges and Limitations

Computational Cost

Self-attention scales quadratically with sequence length. For an input of length n, the model computes n-squared attention scores at every layer. This makes processing very long sequences expensive in both memory and compute. Training large transformer models requires clusters of specialized hardware, and the energy consumption of these training runs has drawn scrutiny.
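A quick back-of-the-envelope calculation makes the quadratic growth concrete. The helper names and the 2-bytes-per-score figure (as for 16-bit floats) are assumptions for illustration; actual memory use depends on the implementation.

```python
def attention_score_count(seq_len, num_heads=1, num_layers=1):
    """Number of attention scores: n * n per head, per layer."""
    return seq_len * seq_len * num_heads * num_layers

def attention_matrix_bytes(seq_len, bytes_per_score=2):
    """Memory for one head's full n x n score matrix (2 bytes assumes 16-bit floats)."""
    return seq_len * seq_len * bytes_per_score

for n in (1024, 2048, 4096):
    mib = attention_matrix_bytes(n) / (1024 * 1024)
    print(f"n={n}: {attention_score_count(n):,} scores, {mib:.0f} MiB per head per layer")
```

Doubling the sequence length quadruples both numbers: 1,024 tokens produce 1,048,576 scores per head, while 2,048 tokens produce 4,194,304.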

Efficient attention variants reduce this cost in different ways. Longformer uses sparse attention patterns and Performer uses kernel-based linear approximations, while FlashAttention computes exact attention but reorganizes memory access so that the full n-by-n score matrix is never stored at once. These approaches make it feasible to process longer sequences, but the quadratic compute of standard self-attention remains a fundamental constraint.

Data Requirements

Transformer models achieve their best performance when pre-trained on very large datasets. GPT-3 trained on hundreds of billions of tokens. Smaller training sets tend to produce weaker generalization, particularly for decoder-only models that rely on emergent capabilities at scale. This data dependency creates barriers for organizations with limited access to large, high-quality corpora.

Interpretability

Transformer models function as complex black boxes. While attention weights provide some visibility into which tokens the model focuses on, these weights do not straightforwardly explain the model's reasoning. Interpreting why a transformer model produces a particular output remains an active research challenge, complicating deployment in regulated industries that require explainable decisions.

Bias and Safety

Transformer models absorb biases present in their training data. These biases manifest in model outputs as stereotyped associations, uneven performance across demographic groups, and the potential to generate harmful content. Addressing bias requires careful dataset curation, evaluation frameworks, and post-training alignment techniques such as reinforcement learning from human feedback.

Deployment Complexity

Running large transformer models in production requires significant infrastructure. Serving a model with billions of parameters at low latency demands GPU acceleration, model parallelism, and careful optimization of memory and throughput. Techniques like quantization, pruning, and distillation reduce deployment costs but introduce trade-offs against model quality.
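To make the quality trade-off concrete, here is a minimal sketch of one simple scheme, symmetric per-tensor 8-bit quantization. It is only one of several possible approaches, and the function names are illustrative rather than taken from a particular toolkit.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Recover approximate float values from the stored integers."""
    return [v * scale for v in q]

weights = [0.5, -1.0, 0.25, 0.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
# Rounding error per weight is at most about half of one quantization step.
errors = [abs(a - b) for a, b in zip(weights, restored)]
```

Each weight now needs one byte instead of two or four, at the price of the small rounding error captured in `errors`.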

How to Get Started with Transformers

Foundational Knowledge

A practical understanding of transformer models requires familiarity with neural network fundamentals: linear algebra, probability, and the basics of machine learning training loops.

Understanding how loss functions, optimizers, and backpropagation work is important for grasping why specific design choices in the transformer architecture matter.

Reading the original "Attention Is All You Need" paper provides the canonical reference. Supplementary resources like Jay Alammar's "The Illustrated Transformer" make the mathematical concepts accessible through visual explanations.

Hands-On Tools

PyTorch is the most widely used framework for building and training transformer models. Hugging Face's Transformers library provides pre-trained models, tokenizers, and training utilities that let practitioners load, fine-tune, and deploy transformer models with minimal boilerplate code.

A typical starting workflow involves loading a pre-trained model like BERT or GPT-2 from Hugging Face, fine-tuning it on a task-specific dataset, and evaluating performance. This hands-on process builds intuition for how tokenization, attention, and training dynamics behave in practice.

Progressive Learning Path

The following sequence provides a structured approach to building transformer model expertise:

- Study the self-attention mechanism and implement a single-head attention function from scratch

- Build a minimal transformer encoder in PyTorch to understand layer stacking, residual connections, and normalization

- Fine-tune a pre-trained BERT model on a text classification task using the Hugging Face library

- Experiment with a decoder-only model like GPT-2 for text generation, observing how temperature and sampling strategies affect output

- Explore vision transformers to understand how the architecture adapts beyond text

- Read research papers on efficient attention and scaling laws to deepen theoretical understanding
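For the text generation step in the list above, the effect of temperature can be previewed without loading a model. The sketch below assumes a hypothetical three-token vocabulary with made-up logits and shows how dividing logits by a temperature reshapes the sampling distribution.

```python
import math

def softmax_with_temperature(logits, temperature=1.0):
    """Convert logits to probabilities; lower temperature sharpens the distribution."""
    scaled = [value / temperature for value in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

logits = [2.0, 1.0, 0.0]  # made-up scores for three candidate tokens
for t in (0.5, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])
```

Lower temperatures concentrate probability on the top-scoring token, giving more predictable text, while higher temperatures flatten the distribution and make sampled output more varied.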

Community and Research

The transformer model field evolves rapidly. Following conferences like NeurIPS, ICML, and ACL provides access to the latest research. Hugging Face's model hub, Papers With Code, and arXiv are essential resources for tracking new architectures, benchmarks, and best practices.

Participating in open-source projects and reproducing published results builds practical skills that reading alone cannot develop. Many organizations now integrate transformer model training into their technical learning and development programs, recognizing it as a core competency for engineering teams working with AI.

FAQ

What is the difference between a transformer and a neural network?

A transformer is a specific type of neural network architecture. All transformers are neural networks, but not all neural networks are transformers. What distinguishes the transformer model from other neural network architectures like RNNs or CNNs is its use of self-attention to process all elements of a sequence in parallel, rather than relying on recurrence or convolution.

Why did transformers replace RNNs?

Recurrent neural networks process sequences one token at a time, making them slow to train on long sequences and prone to losing information over many steps. Transformer models process all tokens in parallel through self-attention, which is far more efficient on modern hardware and captures long-range dependencies more effectively. This combination of speed and representational power made transformers the preferred architecture.

How large are transformer models?

Transformer model sizes vary enormously. BERT Base has approximately 110 million parameters. GPT-3 has 175 billion parameters. The largest publicly described models, typically sparse mixture-of-experts designs, exceed one trillion parameters. Model size correlates with capability but also with computational cost. Smaller models like DistilBERT and TinyLlama demonstrate that careful training and distillation can achieve strong performance at a fraction of the scale.
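The BERT Base figure can be reproduced with simple arithmetic. The sketch below assumes the commonly published configuration of the uncased English model (a vocabulary of 30,522 tokens, hidden size 768, 12 layers, feed-forward size 3,072, 512 positions, and a pooling layer); these values are not stated in this article, and the function name is illustrative.

```python
def bert_base_parameter_count(vocab=30522, hidden=768, layers=12,
                              ffn=3072, max_pos=512, type_vocab=2):
    """Count weights and biases for a BERT-style encoder under the assumed configuration."""
    layer_norm = 2 * hidden  # scale and bias
    # Token, position, and segment embeddings, followed by a layer norm.
    embeddings = (vocab + max_pos + type_vocab) * hidden + layer_norm
    # Query, key, value, and output projections, plus the sublayer's layer norm.
    attention = 4 * (hidden * hidden + hidden) + layer_norm
    # Two linear transformations, plus the sublayer's layer norm.
    feed_forward = (hidden * ffn + ffn) + (ffn * hidden + hidden) + layer_norm
    pooler = hidden * hidden + hidden
    return embeddings + layers * (attention + feed_forward) + pooler

total = bert_base_parameter_count()
print(f"{total:,}")  # 109,482,240
```

Under these assumptions the embeddings contribute roughly 24 million parameters and the twelve encoder layers about 85 million, which together round to the 110 million usually quoted.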

Can transformers handle data types other than text?

Transformer models have been successfully applied to images (Vision Transformers), audio (Whisper), video, protein sequences (AlphaFold), chemical molecules, and time series data. The self-attention mechanism is domain-agnostic. Any data that can be represented as a sequence of tokens or patches can be processed by a transformer model.

What is the relationship between transformers and generative AI?

Generative AI systems like ChatGPT, DALL-E, and Gemini are built on transformer model architectures. The transformer provides the underlying computation that enables these systems to generate text, images, code, and other content.

Not all transformer applications are generative, as encoder-only models like BERT focus on understanding rather than generation, but the generative AI wave is fundamentally powered by transformer technology.

Conclusion

The transformer model reshaped artificial intelligence by demonstrating that self-attention alone, without recurrence or convolution, provides a faster, more scalable, and more effective foundation for sequence processing. From the original machine translation application to modern multimodal systems, the architecture has proven adaptable across domains and scales.

Understanding how transformer models work is no longer optional for practitioners in AI and machine learning. The architecture's influence on natural language processing, computer vision, code generation, and scientific research makes it a foundational topic.

Whether the goal is to fine-tune a pre-trained model for a specific business task or to understand the systems driving the current wave of AI capability, the transformer model is where the field begins.