What Are Vector Embeddings?

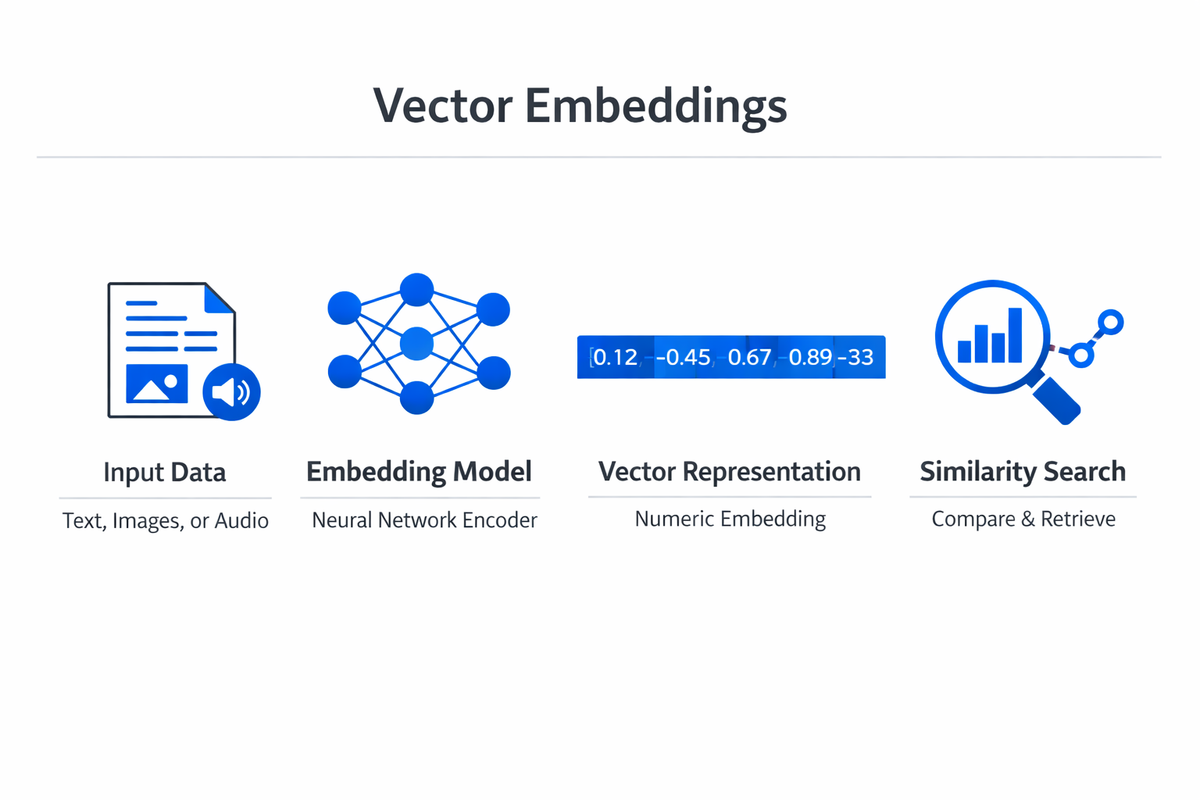

Vector embeddings are dense numerical representations that encode the meaning of data objects, such as words, sentences, images, or entire documents, as points in a high-dimensional space. Each embedding is a list of floating-point numbers, often called a vector, where the position in that space reflects the semantic properties of the original input. Objects with similar meanings cluster together, while dissimilar ones sit far apart.

The concept solves a fundamental problem in artificial intelligence: machines cannot interpret raw text, pixels, or audio the way humans do. They need structured numerical input. Early approaches like one-hot encoding assigned each unique item a separate binary dimension, creating vectors that were enormous, sparse, and unable to represent relationships between items.

Vector embeddings replaced this with compact, continuous representations that preserve relational structure.

A well-trained embedding space places the vector for "king" closer to "queen" than to "bicycle." It positions the vector for a photograph of a cat near other cat photographs and far from images of trucks.

This property, that geometric distance mirrors semantic similarity, is what makes vector embeddings useful across virtually every modern AI application, from semantic search to recommendation systems and retrieval-augmented generation.
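To make the contrast with one-hot encoding concrete, here is a minimal sketch. The three-dimensional dense vectors are hand-picked for illustration and do not come from any trained model; the point is only that one-hot vectors for distinct items always have zero similarity, while dense vectors can express degrees of relatedness.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

vocab = ["king", "queen", "bicycle"]

# One-hot: one dimension per vocabulary item, a single 1.0 in each vector.
one_hot = {
    word: [1.0 if i == j else 0.0 for j in range(len(vocab))]
    for i, word in enumerate(vocab)
}

# Hypothetical dense vectors, chosen by hand for illustration only.
dense = {
    "king":    [0.9, 0.8, 0.1],
    "queen":   [0.8, 0.9, 0.1],
    "bicycle": [0.1, 0.0, 0.9],
}

one_hot_sim = cosine_similarity(one_hot["king"], one_hot["queen"])
dense_sim_royal = cosine_similarity(dense["king"], dense["queen"])
dense_sim_bike = cosine_similarity(dense["king"], dense["bicycle"])

print(one_hot_sim, round(dense_sim_royal, 3), round(dense_sim_bike, 3))
```

With one-hot vectors, "king" is exactly as unrelated to "queen" as it is to "bicycle". The dense vectors separate the two cases.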

How Vector Embeddings Work

From Raw Data to Numerical Vectors

The process of creating vector embeddings starts with a model that has been trained on large datasets to learn meaningful representations. For text, the model processes words or tokens through multiple layers of computation, each refining the representation. For images, the model processes pixel data through convolutional or attention-based layers. The output is a fixed-length numerical vector that captures the essential characteristics of the input.

The dimensionality of these vectors varies by model and use case. Word embeddings from early models like Word2Vec typically use 100 to 300 dimensions. Modern transformer-based models like BERT and GPT produce embeddings with 768 to 4,096 or more dimensions. Higher dimensionality allows the model to encode more nuanced distinctions, but it also increases storage and computation costs.

Training Objectives That Shape the Space

The structure of an embedding space is shaped largely by the training objective and the data the model is trained on. Word2Vec uses a predictive approach: given a target word, the model learns to predict surrounding context words (skip-gram), or given the context words, it learns to predict the target word (CBOW). The weights learned during this process become the word embeddings. Words that appear in similar contexts develop similar vectors, which is why "doctor" and "physician" end up near each other even though they share few characters.
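As a rough illustration of the skip-gram setup, the sketch below generates the (target, context) training pairs that such a model would learn from. The function name `skipgram_pairs` and the `window` parameter are illustrative choices, not part of any specific library, and the actual neural network training step is omitted.

```python
def skipgram_pairs(tokens, window=2):
    """Return (target, context) pairs for each token and its neighbours."""
    pairs = []
    for i, target in enumerate(tokens):
        lo = max(0, i - window)
        hi = min(len(tokens), i + window + 1)
        for j in range(lo, hi):
            if j != i:  # a word is not its own context
                pairs.append((target, tokens[j]))
    return pairs

sentence = "the doctor examined the patient".split()
pairs = skipgram_pairs(sentence, window=1)

for target, context in pairs:
    print(target, "->", context)
```

CBOW uses the same windows but flips the direction: the context words are the input and the target word is the prediction.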

More advanced models use different objectives. BERT is trained to predict masked tokens within a sentence, forcing it to learn bidirectional context. Contrastive learning frameworks train the model to pull similar pairs closer together in the embedding space while pushing dissimilar pairs apart.
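One common way to express the "pull together, push apart" idea is a pairwise margin-based contrastive loss. The sketch below is one formulation among several (others use triplets or in-batch negatives), and the `margin` value and toy vectors are assumptions for illustration.

```python
import math

def euclidean(a, b):
    """Straight-line distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def contrastive_loss(a, b, is_similar, margin=1.0):
    """Pairwise margin-based contrastive loss for one pair of embeddings.

    Similar pairs are penalised by their squared distance. Dissimilar pairs
    are penalised only while they sit closer together than `margin`.
    """
    d = euclidean(a, b)
    if is_similar:
        return d ** 2
    return max(0.0, margin - d) ** 2

anchor = [0.0, 0.0]
near = [0.3, 0.4]  # distance 0.5 from anchor
far = [3.0, 4.0]   # distance 5.0 from anchor

print(contrastive_loss(anchor, near, is_similar=True))
print(contrastive_loss(anchor, far, is_similar=False))
```

Minimising this loss over many labeled pairs moves similar items closer and stops pushing dissimilar items once they are at least `margin` apart.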

Sentence embedding models like Sentence-BERT are fine-tuned specifically so that semantically similar sentences produce vectors with high cosine similarity.

Measuring Similarity in Embedding Space

Once data is embedded, similarity between any two items is measured using distance or similarity metrics applied to their vectors. The most common metric is cosine similarity, which measures the angle between two vectors regardless of their magnitude. A cosine similarity of 1.0 indicates identical direction (maximum similarity), 0 indicates orthogonality (no relationship), and -1.0 indicates opposite directions.

Euclidean distance, which measures the straight-line distance between two points, is another option. Dot product similarity combines magnitude and direction and is used in many neural network architectures for attention calculations. The choice of metric depends on the embedding model and the application. Most modern embedding models are optimized for cosine similarity.
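The three metrics described above can be written in a few lines. This is a plain-Python sketch for clarity; production code would typically use a numerical library, and the example vectors are arbitrary.

```python
import math

def dot(a, b):
    """Dot product: sensitive to both direction and magnitude."""
    return sum(x * y for x, y in zip(a, b))

def norm(a):
    """Euclidean length of a vector."""
    return math.sqrt(dot(a, a))

def cosine_similarity(a, b):
    """Dot product of the two vectors after scaling each to unit length."""
    return dot(a, b) / (norm(a) * norm(b))

def euclidean_distance(a, b):
    """Straight-line distance between the two points."""
    return norm([x - y for x, y in zip(a, b)])

u = [1.0, 0.0]
v = [2.0, 0.0]  # same direction as u, twice the length
w = [0.0, 3.0]  # orthogonal to u

print(cosine_similarity(u, v), euclidean_distance(u, v), dot(u, v))
print(cosine_similarity(u, w))
```

Note that `u` and `v` have a cosine similarity of 1.0 even though their Euclidean distance is not zero. When all vectors are normalized to unit length, the three metrics produce the same ranking, because the squared Euclidean distance then equals 2 minus twice the cosine similarity.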

Types of Embeddings

Word Embeddings

Word embeddings assign a single fixed vector to each word in a vocabulary. Word2Vec and GloVe are the foundational models in this category. Word2Vec learns embeddings by training a shallow neural network on word co-occurrence patterns. GloVe constructs embeddings from a global word co-occurrence matrix, combining the strengths of count-based and prediction-based methods.

The limitation of static word embeddings is that they produce a single vector per word regardless of context. The word "bank" receives the same embedding whether it refers to a financial institution or a riverbank. Despite this constraint, word embeddings demonstrated that distributed numerical representations could capture analogy relationships (king minus man plus woman approximately equals queen) and launched the field of representation learning.
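The analogy arithmetic can be sketched with toy vectors. The two-dimensional vectors below are hypothetical and hand-built so that one axis loosely tracks "royalty" and the other "gender"; real word embeddings have hundreds of dimensions with no such labeled axes.

```python
import math

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical 2-D vectors, for illustration only.
vectors = {
    "king":  [0.9, 0.8],
    "queen": [0.9, -0.8],
    "man":   [0.1, 0.9],
    "woman": [0.1, -0.9],
    "apple": [-0.7, 0.0],
}

def analogy(a, b, c, vectors):
    """Return the word closest to vec(a) - vec(b) + vec(c), excluding a, b, c."""
    target = [x - y + z for x, y, z in zip(vectors[a], vectors[b], vectors[c])]
    candidates = {w: v for w, v in vectors.items() if w not in (a, b, c)}
    return max(candidates, key=lambda w: cosine_similarity(target, candidates[w]))

result = analogy("king", "man", "woman", vectors)
print(result)
```

Excluding the three input words from the candidates is standard practice in analogy evaluation, since the nearest vector to the target is often one of the inputs.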

Contextual Embeddings

Contextual embeddings solve the polysemy problem by generating different vectors for the same word depending on its surrounding context. Models like BERT, ELMo, and GPT produce embeddings where "bank" in "I deposited money at the bank" has a different vector than "bank" in "We walked along the river bank."

These models are built on deep learning architectures, typically Transformers, and are pre-trained on massive text corpora. The resulting embeddings capture not just word meaning but syntactic structure, coreference, and discourse-level patterns.

Contextual embeddings are the standard for modern natural language processing tasks because they provide richer, more accurate representations than their static predecessors.

Sentence and Document Embeddings

Sentence embeddings represent entire sentences or paragraphs as single vectors. Models like Sentence-BERT, Universal Sentence Encoder, and more recent instruction-tuned embedding models produce vectors that capture the overall meaning of a text span. These are critical for tasks like semantic search, where the goal is to find documents that match a query by meaning rather than by keyword overlap.

Document embeddings extend this concept to longer texts. Approaches range from simple averaging of word or token embeddings to dedicated architectures that process full documents and produce a single representative vector. The quality of sentence and document embeddings directly determines the effectiveness of downstream retrieval and classification systems.
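The simplest approach mentioned above, averaging token embeddings, is often called mean pooling. A minimal sketch follows, with made-up token vectors standing in for real model output.

```python
def mean_pool(token_vectors):
    """Average a list of equal-length token vectors into one vector."""
    if not token_vectors:
        raise ValueError("need at least one token vector")
    count = len(token_vectors)
    # zip(*...) groups the values of each dimension across all tokens.
    return [sum(column) / count for column in zip(*token_vectors)]

# Hypothetical token embeddings for a three-token text.
token_vectors = [
    [1.0, 0.0, 2.0],
    [3.0, 2.0, 0.0],
    [2.0, 4.0, 1.0],
]

doc_vector = mean_pool(token_vectors)
print(doc_vector)
```

Averaging discards word order, which is one reason dedicated sentence and document models usually outperform it on retrieval tasks.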

Image and Multimodal Embeddings

Vector embeddings are not limited to text. Convolutional neural networks and Vision Transformers produce image embeddings by processing pixels through learned feature extractors. The resulting vectors capture visual properties like shape, color, texture, and object composition.

Multimodal embedding models like CLIP map both text and images into a shared embedding space. This means the vector for the text "a golden retriever playing in the snow" will be close to vectors of images showing exactly that. Shared embedding spaces enable cross-modal search, where a text query retrieves relevant images, and vice versa.

This capability powers image search engines, content moderation tools, and generative AI systems that connect language and vision.

| Type | Description | Best For |
|---|---|---|
| Word Embeddings | A single fixed vector per vocabulary word, regardless of context (Word2Vec, GloVe). | Word-level similarity and analogy relationships |
| Contextual Embeddings | Different vectors for the same word depending on its surrounding context (BERT, ELMo, GPT). | Modern natural language processing tasks |
| Sentence and Document Embeddings | A single vector for an entire sentence, paragraph, or document (Sentence-BERT, Universal Sentence Encoder). | Semantic search, retrieval, and classification |
| Image and Multimodal Embeddings | Vectors for images, or for text and images in a shared space (CLIP). | Cross-modal search, image search, and content moderation |

Vector Embedding Use Cases

Semantic Search and Information Retrieval

Traditional keyword search fails when users and documents use different vocabulary to describe the same concept. Vector embeddings enable semantic search systems that match queries to documents based on meaning. A search for "how to reduce employee turnover" can return documents about "staff retention strategies" because the embeddings for both phrases occupy nearby regions of the vector space.

This approach powers enterprise search, e-commerce product discovery, and knowledge base retrieval.

Organizations implementing retrieval-augmented generation (RAG) use embedding-based retrieval as the first step: the user query is embedded, the most relevant document chunks are retrieved by vector similarity, and a language model generates an answer grounded in those documents.
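The retrieval step of a RAG pipeline can be sketched as follows. The chunk vectors and the query vector are hypothetical stand-ins for what an embedding model would return, and `retrieve` and `build_prompt` are illustrative helper names; the final call to a language model is left out.

```python
import math

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical pre-computed (text, embedding) pairs for three document chunks.
chunks = [
    ("Staff retention strategies include flexible schedules.", [0.9, 0.1, 0.0]),
    ("Quarterly revenue grew by eight percent.", [0.0, 0.9, 0.2]),
    ("Exit interviews reveal why employees leave.", [0.8, 0.0, 0.3]),
]

def retrieve(query_vector, chunks, k=2):
    """Return the texts of the k chunks most similar to the query vector."""
    ranked = sorted(
        chunks,
        key=lambda chunk: cosine_similarity(query_vector, chunk[1]),
        reverse=True,
    )
    return [text for text, _ in ranked[:k]]

def build_prompt(question, context_chunks):
    """Assemble a grounded prompt from the retrieved chunks."""
    context = "\n".join(f"- {chunk}" for chunk in context_chunks)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )

question = "How to reduce employee turnover?"
query_vector = [1.0, 0.0, 0.1]  # stand-in for the embedded question
top_chunks = retrieve(query_vector, chunks, k=2)
prompt = build_prompt(question, top_chunks)
print(prompt)
```

The revenue chunk is never placed in the prompt, so the language model only sees material that is close to the question in the embedding space.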

Recommendation Systems

Recommendation engines use vector embeddings to represent users and items in the same space. A user's embedding reflects their preferences, learned from interaction history. Item embeddings reflect content characteristics. Recommendations are generated by finding items whose embeddings are closest to the user's embedding.

This approach captures nuanced preference patterns that collaborative filtering alone misses. Streaming platforms use it to recommend content based on viewing patterns. E-commerce sites use it to surface products that match a shopper's implicit preferences. Educational platforms can apply the same logic to recommend courses, learning paths, or study materials based on a learner's engagement history and demonstrated knowledge gaps.
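A minimal sketch of this idea is shown below. It makes a simplifying assumption that a user's embedding is the average of the items they have interacted with; production systems usually learn user embeddings jointly with item embeddings. The item names and vectors are invented for illustration.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def mean_vector(vectors):
    """Element-wise average of a list of equal-length vectors."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]

# Hypothetical item embeddings.
item_vectors = {
    "space documentary": [0.9, 0.1],
    "physics lecture": [0.8, 0.2],
    "cooking show": [0.1, 0.9],
    "baking contest": [0.0, 1.0],
}

def recommend(history, item_vectors, k=1):
    """Rank unseen items by dot product with the averaged history vector."""
    user_vector = mean_vector([item_vectors[name] for name in history])
    unseen = [name for name in item_vectors if name not in history]
    ranked = sorted(
        unseen,
        key=lambda name: dot(user_vector, item_vectors[name]),
        reverse=True,
    )
    return ranked[:k]

recs = recommend(["space documentary"], item_vectors, k=2)
print(recs)
```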

Classification and Clustering

Vector embeddings serve as input features for classification and clustering tasks. Once text, images, or other data are converted into embeddings, standard machine learning algorithms can operate on them. A support vector machine or logistic regression classifier trained on sentence embeddings can categorize customer support tickets, flag toxic content, or route inquiries to the correct department.

Clustering algorithms like k-means or HDBSCAN group similar embeddings together without labeled training data. This is useful for exploratory analysis: discovering topic clusters in a document corpus, identifying user segments based on behavior patterns, or detecting emerging themes in social media data. The quality of the embedding model determines how meaningful the resulting clusters are.
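For intuition, here is a small pure-Python sketch of k-means (Lloyd's algorithm) on toy two-dimensional points. It takes the initial centroids as an argument to keep the result deterministic; real libraries add smarter initialization and convergence checks, and real embeddings have far more dimensions.

```python
def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def mean_vector(vectors):
    return [sum(column) / len(vectors) for column in zip(*vectors)]

def kmeans(points, initial_centroids, iterations=10):
    """Plain Lloyd's algorithm. Returns (centroids, labels)."""
    centroids = [list(c) for c in initial_centroids]
    labels = []
    for _ in range(iterations):
        # Assignment step: each point joins its nearest centroid.
        labels = [
            min(range(len(centroids)),
                key=lambda k: squared_distance(point, centroids[k]))
            for point in points
        ]
        # Update step: each centroid moves to the mean of its members.
        for k in range(len(centroids)):
            members = [p for p, label in zip(points, labels) if label == k]
            if members:  # keep the old centroid if a cluster is empty
                centroids[k] = mean_vector(members)
    return centroids, labels

# Toy "embeddings" forming two obvious groups.
points = [[0.0, 0.1], [0.1, 0.0], [0.9, 1.0], [1.0, 0.9]]
centroids, labels = kmeans(points, initial_centroids=[points[0], points[2]])
print(labels, centroids)
```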

Anomaly and Duplicate Detection

Because vector embeddings place similar items near each other, outliers in the embedding space correspond to anomalies in the underlying data. Fraud detection systems embed transaction records and flag those whose vectors fall far from the normal cluster. Content moderation systems embed user posts and detect unusual patterns that might indicate bot activity or coordinated manipulation.

Duplicate detection works by the same principle in reverse. Near-duplicate documents, product listings, or database records produce embeddings with very high cosine similarity. Organizations use this to deduplicate datasets, identify plagiarism, or consolidate redundant entries across systems.
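Both ideas can be sketched with simple thresholds. The distance-from-centroid rule and the specific threshold values below are assumptions chosen for this toy data; real systems tune thresholds on labeled examples and often use more robust outlier methods.

```python
import math

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def euclidean_distance(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def mean_vector(vectors):
    return [sum(column) / len(vectors) for column in zip(*vectors)]

def find_outliers(vectors, threshold):
    """Indices of vectors farther than `threshold` from the centroid."""
    centroid = mean_vector(vectors)
    return [i for i, v in enumerate(vectors)
            if euclidean_distance(v, centroid) > threshold]

def find_near_duplicates(vectors, min_similarity=0.99):
    """Index pairs (i, j) with i < j whose cosine similarity is high."""
    return [
        (i, j)
        for i in range(len(vectors))
        for j in range(i + 1, len(vectors))
        if cosine_similarity(vectors[i], vectors[j]) >= min_similarity
    ]

# Hypothetical embeddings.
vectors = [
    [1.0, 0.0],
    [1.0, 0.01],  # near-duplicate of vector 0
    [0.9, 0.3],
    [-5.0, 4.0],  # far from the rest
]

outliers = find_outliers(vectors, threshold=3.0)
duplicates = find_near_duplicates(vectors, min_similarity=0.99)
print(outliers, duplicates)
```

The pairwise duplicate check compares every pair and so scales quadratically; at larger scale the same test is usually run only against each vector's nearest neighbors from an index.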

Education and Adaptive Learning

In education technology, vector embeddings enable systems that understand learner intent and content meaning at a deeper level than keyword matching. A student searching for "how neurons fire" can be matched with content about "action potentials in neural cells" because the embeddings capture the semantic overlap.

Embedding-based systems can also map relationships between learning objectives, course materials, and assessment items. This supports adaptive learning platforms that recommend the next piece of content based on what a learner has mastered and where gaps remain. As machine learning continues to advance, these personalization capabilities become more precise and actionable.

Challenges and Limitations

Dimensionality and Storage

High-dimensional embedding vectors require significant storage, especially at scale. A database of 100 million documents, each represented by a 1,536-dimensional float32 vector, requires roughly 600 GB of storage for the vectors alone. Specialized vector databases like Pinecone, Weaviate, Milvus, and Qdrant have emerged to handle storage and retrieval at this scale, but they add infrastructure complexity and cost.
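The storage figure follows from simple arithmetic: number of vectors times dimensions times bytes per value. The sketch below reproduces it and, as an illustrative comparison, shows the effect of storing one byte per value instead of four. It counts raw vector data only and ignores index structures and metadata.

```python
def vector_storage_bytes(num_vectors, dimensions, bytes_per_value=4):
    """Raw storage for the vectors alone (no index, no metadata)."""
    return num_vectors * dimensions * bytes_per_value

float32_bytes = vector_storage_bytes(100_000_000, 1536, bytes_per_value=4)
int8_bytes = vector_storage_bytes(100_000_000, 1536, bytes_per_value=1)

float32_gb = float32_bytes / 1e9  # decimal gigabytes
int8_gb = int8_bytes / 1e9

print(f"float32: {float32_gb:.1f} GB, int8: {int8_gb:.1f} GB")
```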

Approximate nearest neighbor (ANN) algorithms, such as HNSW and IVF, trade a small amount of retrieval accuracy for dramatic speed improvements. These are essential for production systems that need to search millions of vectors in milliseconds. Choosing the right index type, quantization level, and search parameters requires careful benchmarking against the specific dataset and latency requirements.

Embedding Quality and Bias

The quality of vector embeddings is limited by the data and objectives used during training. Models trained on internet text absorb the biases present in that data. Embeddings may associate certain professions more strongly with one gender, or encode cultural stereotypes as geometric relationships. These biases propagate into every downstream application that uses the embeddings.

Debiasing techniques exist, including post-processing methods that project out bias directions and training-time interventions that modify the loss function. However, removing bias without degrading embedding quality is difficult, and no current method provides a complete solution. Teams deploying embedding-based systems should audit their models for harmful associations relevant to their domain.
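The post-processing idea of projecting out a bias direction can be sketched in a few lines. The three-dimensional vectors are hypothetical, and estimating the direction from a single word pair is a simplification; in practice the direction is usually estimated from many pairs.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def normalize(v):
    length = math.sqrt(dot(v, v))
    return [x / length for x in v]

def project_out(v, direction):
    """Remove the component of v that lies along `direction`."""
    unit = normalize(direction)
    scale = dot(v, unit)
    return [x - scale * u for x, u in zip(v, unit)]

# Hypothetical embeddings, for illustration only.
he = [1.0, 0.2, 0.0]
she = [-1.0, 0.2, 0.0]
bias_direction = [a - b for a, b in zip(he, she)]  # [2.0, 0.0, 0.0]

engineer = [0.4, 0.7, 0.5]
debiased = project_out(engineer, bias_direction)
print(debiased)
```

After the projection, the vector has no component along the estimated direction. As noted above, this does not guarantee the bias is gone, since related information can remain spread across other dimensions.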

Domain Specificity

General-purpose embedding models trained on broad internet data may not perform well in specialized domains. Medical, legal, and scientific text contains terminology and relationships that generic models have not encountered frequently enough to embed accurately. A general model might not place "myocardial infarction" close enough to "heart attack" in the vector space for a medical search system to function reliably.

Fine-tuning embedding models on domain-specific data addresses this gap but requires labeled pairs or curated datasets that can be expensive to produce. Deep learning techniques for domain adaptation are improving, yet the need for domain expertise in the training pipeline remains a practical barrier for many organizations.

Interpretability

Vector embeddings are inherently opaque. A 1,536-dimensional vector does not come with labels explaining what each dimension represents. Unlike a feature vector where dimension 3 might correspond to "word count" or "price," embedding dimensions encode abstract learned features with no straightforward human interpretation.

This lack of interpretability makes debugging difficult. When a search system returns irrelevant results, it is hard to determine whether the problem lies in the embedding model, the similarity metric, the indexing strategy, or the query itself. Visualization tools like t-SNE and UMAP can project embeddings into two or three dimensions for inspection, but these projections inevitably lose information and can create misleading visual patterns.

Computational Cost of Generation

Generating embeddings for large datasets requires substantial compute. Processing millions of documents through a transformer model takes hours or days even on GPU hardware. Every time the embedding model is updated or replaced, the entire corpus must be re-embedded, creating operational overhead.

Batching, caching, and incremental embedding strategies help manage this cost. Some organizations maintain a pipeline that embeds new content on ingestion and stores the vectors alongside the original data. Others use lighter embedding models for initial retrieval and re-rank with heavier models only for the top candidates.
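A rough sketch of batching combined with caching is shown below. `fake_embed_batch` is a stand-in for a real model call and returns meaningless toy vectors; `EmbeddingCache` is a hypothetical helper, not a class from any library.

```python
import hashlib

def batched(items, batch_size):
    """Yield consecutive slices of at most batch_size items."""
    for start in range(0, len(items), batch_size):
        yield items[start:start + batch_size]

def fake_embed_batch(texts):
    """Stand-in for a model call: one toy vector per input text."""
    return [[float(len(t)), float(t.count(" "))] for t in texts]

class EmbeddingCache:
    """Embeds only texts it has not seen, in batches, keyed by content hash."""

    def __init__(self, embed_batch, batch_size=2):
        self.embed_batch = embed_batch
        self.batch_size = batch_size
        self.store = {}
        self.model_calls = 0

    @staticmethod
    def key(text):
        return hashlib.sha256(text.encode("utf-8")).hexdigest()

    def embed(self, texts):
        # dict.fromkeys removes repeats while preserving order.
        missing = [t for t in dict.fromkeys(texts)
                   if self.key(t) not in self.store]
        for batch in batched(missing, self.batch_size):
            self.model_calls += 1
            for text, vector in zip(batch, self.embed_batch(batch)):
                self.store[self.key(text)] = vector
        return [self.store[self.key(t)] for t in texts]

cache = EmbeddingCache(fake_embed_batch, batch_size=2)
first = cache.embed(["a b", "c", "a b"])  # two unique texts, one model call
second = cache.embed(["a b", "d e f"])    # only "d e f" is new
print(cache.model_calls)
```

In a real pipeline the cache key would also need to include the model name or version, since vectors from different models are not comparable.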

How to Get Started

Getting started with vector embeddings involves selecting a model, building a pipeline to generate and store embeddings, and integrating similarity search into an application.

- Choose an embedding model. For text, start with well-supported models like OpenAI's text-embedding-3-small, Cohere Embed, or open-source alternatives from Hugging Face such as BGE, E5, or GTE. For images, CLIP or DINOv2 are strong starting points. Evaluate models on your specific data before committing to one.

- Set up a vector database. For prototypes and small datasets, in-memory solutions like FAISS or Annoy are sufficient. For production workloads, managed vector databases like Pinecone, Weaviate, or Qdrant provide persistence, scaling, and filtering capabilities. Many traditional databases like PostgreSQL (with pgvector) now also support vector search.

- Build an embedding pipeline. Create a workflow that converts raw data into embeddings and stores them with metadata. This pipeline should handle batching for efficiency, error recovery, and incremental updates when new data arrives. Libraries like LangChain and LlamaIndex provide pre-built abstractions for common embedding workflows.

- Implement similarity search. Start with a basic nearest-neighbor search: embed a query, retrieve the top-k most similar vectors, and return the associated content. Test with real user queries to evaluate whether the results are semantically relevant. Adjust the embedding model, chunk size, or metadata filtering based on the results.

- Iterate on quality. Embedding quality is often the largest single factor in retrieval performance. Experiment with different models, test domain-specific fine-tuning, and build evaluation datasets that measure retrieval accuracy against known-good results. Understanding the fundamentals of neural network architectures and natural language processing will help you make informed decisions about model selection and tuning.
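For the evaluation step in the list above, one simple metric is recall at k: the fraction of known-good documents that appear in the top k results. The sketch below uses an invented evaluation set with made-up document IDs; other metrics such as precision at k or mean reciprocal rank follow the same pattern.

```python
def recall_at_k(retrieved_ids, relevant_ids, k):
    """Fraction of the relevant items that appear in the top-k results."""
    if not relevant_ids:
        raise ValueError("each query needs at least one relevant id")
    top_k = set(retrieved_ids[:k])
    return len(top_k & set(relevant_ids)) / len(relevant_ids)

# Hypothetical evaluation set: query -> (ranked result ids, known-good ids).
eval_set = {
    "reduce employee turnover": (["d3", "d7", "d1"], ["d3", "d1"]),
    "how neurons fire": (["d9", "d2", "d4"], ["d2"]),
}

scores = {
    query: recall_at_k(ranked, relevant, k=2)
    for query, (ranked, relevant) in eval_set.items()
}
mean_recall = sum(scores.values()) / len(scores)
print(scores, mean_recall)
```

Tracking a number like this across model, chunk size, and filter changes makes it possible to tell whether an adjustment actually improved retrieval.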

FAQ

What is the difference between vector embeddings and traditional feature vectors?

Traditional feature vectors are hand-engineered: a data scientist decides which features to extract and how to represent them. Vector embeddings are learned automatically by a neural network during training. The key advantage of embeddings is that they capture semantic relationships that manual feature engineering typically misses.

Embeddings also produce dense vectors where every dimension carries information, unlike sparse one-hot or bag-of-words representations where most values are zero.

How many dimensions should vector embeddings have?

Dimensionality depends on the complexity of the data and the task. Common ranges are 256 to 1,536 dimensions for text embeddings. Higher dimensions capture more nuance but increase storage and computation costs. Many modern models offer configurable dimensionality, allowing you to trade off between accuracy and efficiency. For most practical applications, 768 or 1,024 dimensions provide a strong balance.

Can vector embeddings be updated without retraining the model?

Not for a given input. Embeddings are a direct output of the model's learned parameters, so changing the vector that a particular input produces requires either retraining or fine-tuning the model. New or edited content can still be embedded with the existing model at any time. When the model itself is updated, all previously generated embeddings become inconsistent with newly generated ones and must be regenerated. This is why choosing a stable, well-tested embedding model is important before building production systems around it.

What is a vector database, and do I need one?

A vector database is a storage and retrieval system optimized for high-dimensional vectors. It supports operations like approximate nearest neighbor search, metadata filtering, and efficient indexing. You need a vector database when your dataset grows beyond what fits in memory or when you require persistent, production-grade similarity search. For small experiments, in-memory libraries like FAISS work well. For anything facing real users at scale, a dedicated vector database is the practical choice.

How do vector embeddings relate to large language models?

Large language models (LLMs) use vector embeddings as a core internal mechanism. Every token in the input is first converted to an embedding, and the model's transformer layers transform these embeddings through attention and feed-forward operations. Many LLM providers also offer standalone embedding endpoints that produce vector representations for use in search, retrieval, and classification.

The embeddings generated by these models benefit from the vast knowledge encoded during pre-training on large text corpora.