What Is a Variational Autoencoder?

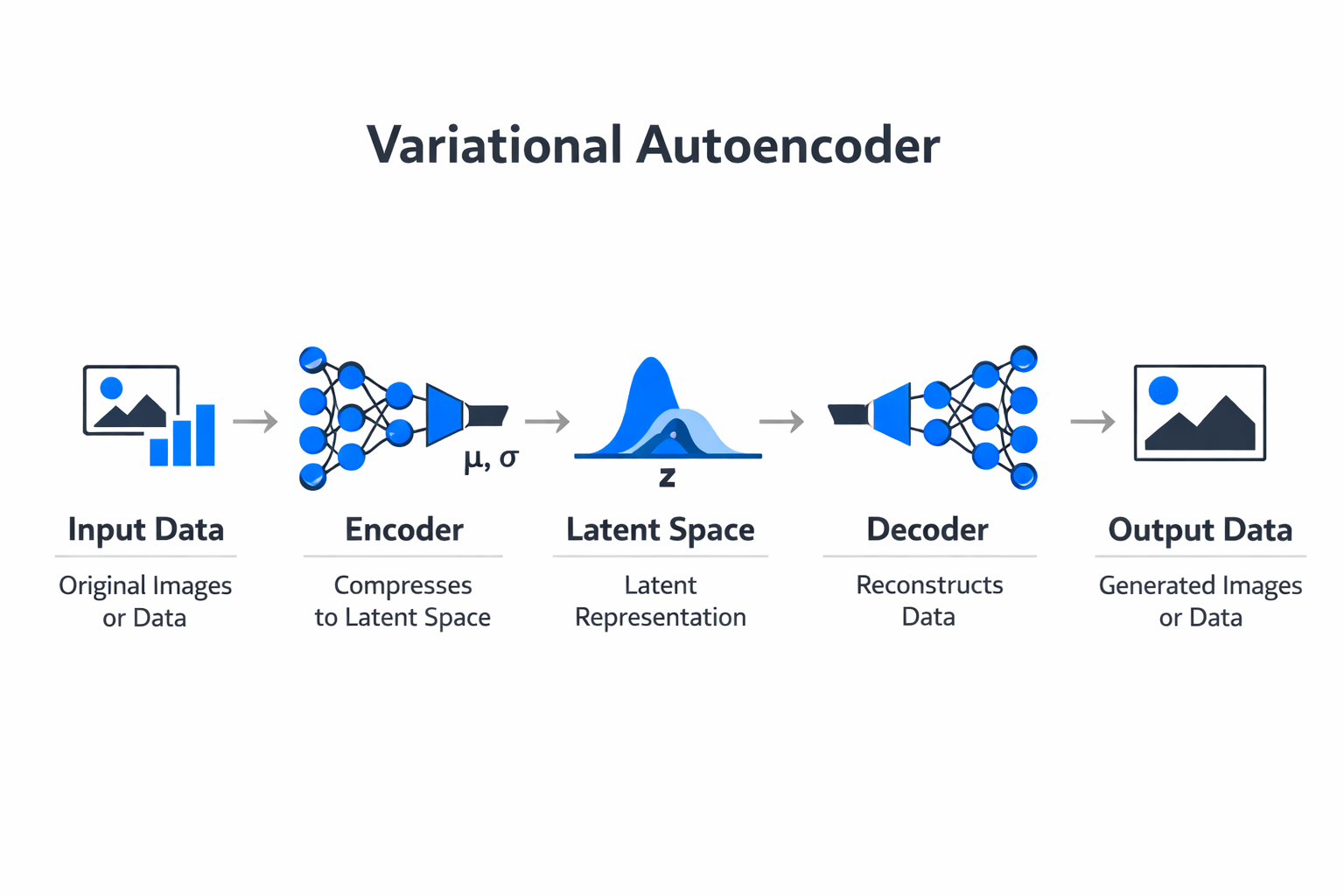

A variational autoencoder (VAE) is a type of generative model that learns to encode input data into a compact probabilistic representation and then decode that representation back into data that resembles the original input. Unlike a standard autoencoder, which maps each input to a single fixed point in latent space, a VAE maps each input to a probability distribution.

This probabilistic approach allows the model to generate new, realistic data samples by sampling from the learned latent space.

VAEs were introduced by Diederik Kingma and Max Welling in 2013 and have since become one of the foundational architectures in generative AI. They combine ideas from deep learning and Bayesian probability to create a framework that is both mathematically principled and practically useful.

The model learns a smooth, continuous latent space where similar data points are positioned near each other, making it possible to interpolate between examples and generate novel outputs that remain coherent.

At the core of a VAE is a trade-off between two objectives: reconstructing the input data accurately and keeping the learned latent distributions close to a simple prior distribution (typically a standard normal distribution). This trade-off is what gives VAEs their generative power. By regularizing the latent space, the model ensures that every region of that space decodes into something meaningful, not just the specific points that correspond to training examples.

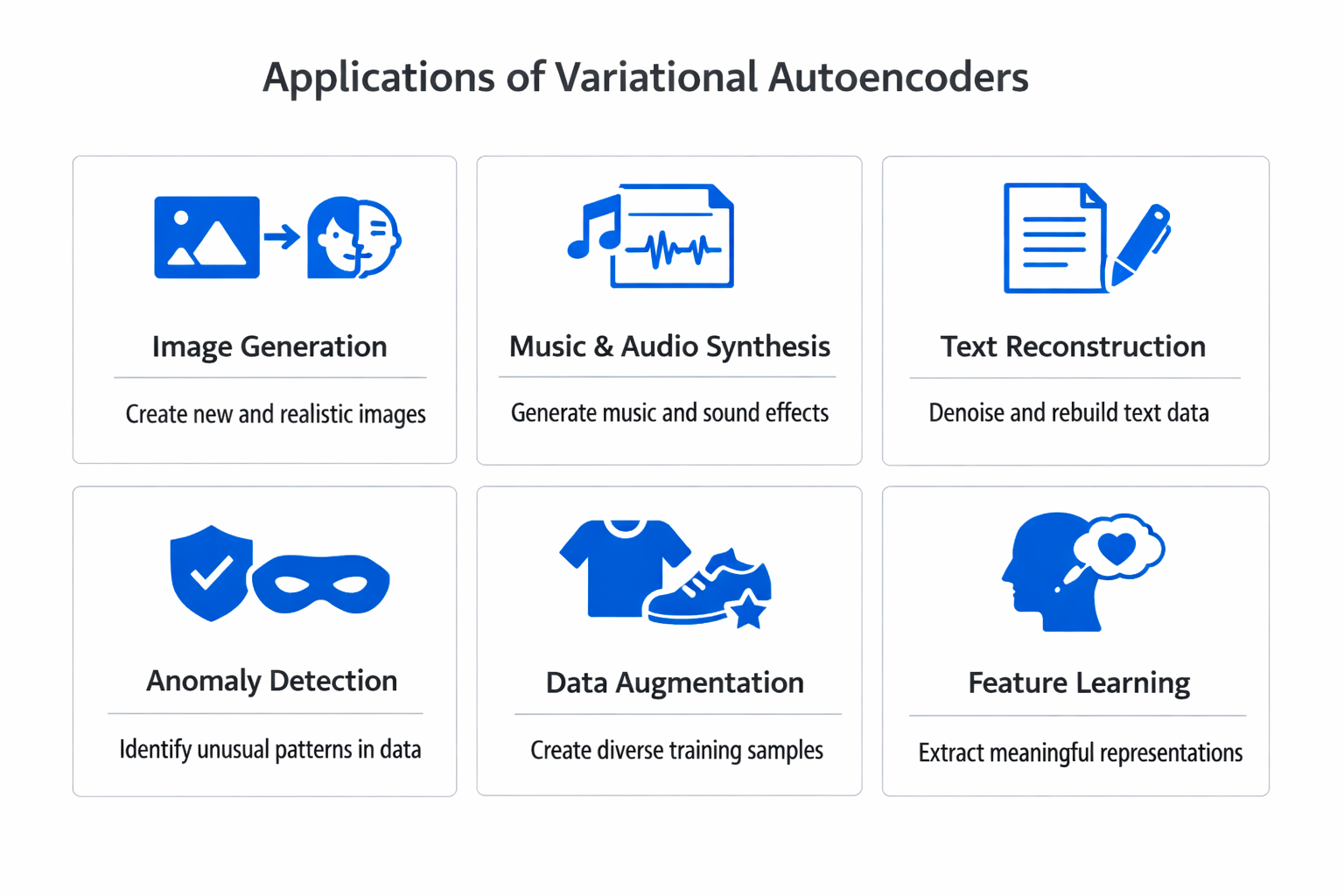

VAEs are widely used in image generation, anomaly detection, drug discovery, and data augmentation. They serve as both a standalone generative tool and a critical component inside larger architectures, including the latent diffusion models that power modern image generation systems like Stable Diffusion.

How VAEs Work

The Encoder: Mapping Data to a Distribution

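The encoder is the first half of the VAE architecture. It takes an input, such as an image, audio sample, or structured data record, and passes it through a neural network to produce two vectors: a mean vector and a variance vector (in practice, implementations usually predict the log-variance, which keeps the variance positive and is numerically more stable). Together, these define a multivariate Gaussian distribution, typically with a diagonal covariance, in the latent space.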

This is the key difference from a standard autoencoder. A regular autoencoder compresses the input to a single point (a deterministic encoding). A VAE compresses it to a distribution, capturing not just the most likely encoding but also the uncertainty around it. The result is that nearby points in latent space correspond to similar but not identical data, creating a smooth manifold that supports meaningful interpolation and generation.

The encoder is trained using backpropagation just like any other neural network component. Its architecture can range from simple fully connected layers for low-dimensional data to deep convolutional networks for images. The choice of encoder architecture depends on the complexity of the input domain and the desired capacity of the latent representation.

The Latent Space: Where Generation Happens

The latent space is the compressed, lower-dimensional representation that the encoder produces. In a VAE, this space has special properties because of the probabilistic encoding and the regularization imposed during training.

Each training example maps not to a single point but to a region defined by its mean and variance parameters. The training objective pushes these regions toward a standard normal distribution, which prevents the model from creating isolated clusters with empty gaps between them. The result is a latent space that is both organized and continuous. Moving smoothly through this space produces smoothly changing outputs when decoded.

This continuity is what makes VAEs useful for generation. To create a new data sample, you simply draw a random vector from the prior distribution (a standard normal) and pass it through the decoder. Because the latent space has been regularized to match this prior, the decoder knows how to interpret random samples from it and produce coherent outputs.
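As a rough illustration of generation and interpolation, the sketch below draws latent vectors from a standard normal prior and walks a straight line between two of them. It uses only the Python standard library. The `toy_decoder` function and its weights are hypothetical stand-ins for a trained decoder network, not part of any real model.

```python
import math
import random


def sample_prior(latent_dim, rng):
    """Draw one latent vector z ~ N(0, I)."""
    return [rng.gauss(0.0, 1.0) for _ in range(latent_dim)]


def interpolate(z_start, z_end, steps):
    """Return `steps` (>= 2) latent vectors evenly spaced from z_start to z_end."""
    path = []
    for i in range(steps):
        t = i / (steps - 1)
        path.append([(1.0 - t) * a + t * b for a, b in zip(z_start, z_end)])
    return path


def toy_decoder(z):
    """Hypothetical stand-in for a trained decoder: fixed linear map plus sigmoid."""
    weights = [[0.5, -0.3], [0.1, 0.8], [-0.7, 0.2]]  # 3 outputs, 2 latent dims
    return [
        1.0 / (1.0 + math.exp(-sum(w * zi for w, zi in zip(row, z))))
        for row in weights
    ]


rng = random.Random(0)
z_a = sample_prior(2, rng)
z_b = sample_prior(2, rng)

# Decode each point on the path between the two samples.
frames = [toy_decoder(z) for z in interpolate(z_a, z_b, steps=5)]
```

With a real trained decoder in place of `toy_decoder`, each entry in `frames` would be one generated sample, and consecutive entries would differ only slightly.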

The Reparameterization Trick

Training a VAE requires computing gradients through the sampling operation that connects the encoder to the decoder. A random draw is not a differentiable function of the distribution's parameters, which would normally prevent gradients from flowing back through this step to the encoder.

The reparameterization trick solves this problem elegantly. Instead of sampling directly from the distribution parameterized by the encoder, the model samples a noise value from a fixed standard normal distribution and then shifts it by the learned mean and scales it by the learned standard deviation (the square root of the variance). Mathematically, z = mean + standard_deviation * epsilon, where epsilon is drawn from N(0, 1).

This reformulation makes the sampling step a deterministic function of the encoder outputs plus independent noise, allowing gradients to flow through the mean and variance parameters during backpropagation.

Without the reparameterization trick, VAEs could not be trained with standard gradient-based optimization. This seemingly simple mathematical reformulation was one of the key innovations that made the VAE framework practical and is a common pattern in modern probabilistic machine learning models.
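The trick can be sketched in a few lines of plain Python. This version assumes the encoder outputs a log-variance, a common convention but an assumption here, and scales the noise by the standard deviation `exp(0.5 * log_var)`. The finite-difference check at the end is only an illustration that z varies smoothly with the mean once the noise is held fixed. A real framework would compute this gradient automatically.

```python
import math
import random


def reparameterize(mean, log_var, eps):
    """Compute z = mean + std * eps element-wise, with std = exp(0.5 * log_var)."""
    return [m + math.exp(0.5 * lv) * e for m, lv, e in zip(mean, log_var, eps)]


rng = random.Random(42)
mean = [0.5, -1.0]
log_var = [0.0, math.log(4.0)]  # variances 1 and 4, so std 1 and 2

# The noise is drawn independently of the encoder outputs.
eps = [rng.gauss(0.0, 1.0) for _ in mean]
z = reparameterize(mean, log_var, eps)

# With eps fixed, z is an ordinary function of mean and log_var.
# Finite-difference estimate of dz[0]/dmean[0], which should be close to 1.
h = 1e-6
z_shifted = reparameterize([mean[0] + h, mean[1]], log_var, eps)
dz_dmean = (z_shifted[0] - z[0]) / h
```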

The Decoder: Reconstructing Data from Latent Codes

The decoder mirrors the encoder. It takes a latent vector (either sampled during training or drawn from the prior during generation) and maps it back to the original data space through a neural network. For image data, the decoder typically uses transposed convolutions or upsampling layers to progressively increase the spatial resolution from the compact latent code to a full-resolution output.

The decoder is trained to maximize the likelihood of the original input given the latent code. In practice, this means minimizing a reconstruction loss, often binary cross-entropy for binary data or mean squared error for continuous data. The decoder learns the mapping from abstract latent representations to concrete data patterns, effectively learning what different regions of the latent space "look like" when rendered into the output domain.
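The two reconstruction losses named above can be written out directly. The sketch below is framework-free and operates on plain lists. Mean squared error corresponds to a Gaussian likelihood with fixed variance, and binary cross-entropy to a Bernoulli likelihood. The function names and the clipping constant `eps` are illustrative choices.

```python
import math


def mse_loss(x, x_hat):
    """Mean squared error between an input and its reconstruction."""
    return sum((a - b) ** 2 for a, b in zip(x, x_hat)) / len(x)


def bce_loss(x, x_hat, eps=1e-7):
    """Binary cross-entropy summed over dimensions.

    x holds targets in [0, 1]; x_hat holds predicted probabilities.
    Probabilities are clipped to (eps, 1 - eps) so log() stays finite.
    """
    total = 0.0
    for target, p in zip(x, x_hat):
        p = min(max(p, eps), 1.0 - eps)
        total -= target * math.log(p) + (1.0 - target) * math.log(1.0 - p)
    return total
```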

The Loss Function: ELBO

The VAE training objective is known as the Evidence Lower Bound (ELBO), a lower bound on the log-likelihood of the data. Training maximizes the ELBO, which is equivalent to minimizing a loss with two terms. The first term is the reconstruction loss, which measures how well the decoder reproduces the original input from the latent code. The second term is the KL divergence, which measures how far the encoder's learned distribution deviates from the prior distribution.

The reconstruction loss ensures the model retains useful information about the input. The KL divergence term regularizes the latent space, preventing the encoder from creating highly specific, narrow distributions that would memorize the training data without supporting generalization. Balancing these two terms is the central tension in VAE training.

When the KL divergence term dominates, the model produces blurry, generic outputs because it is forced to compress everything into a nearly identical distribution. When the reconstruction term dominates, the latent space becomes fragmented and loses its generative properties. Practitioners often introduce a weighting coefficient (called beta, as in beta-VAE) to control this balance and tune the model for specific applications.
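For a diagonal Gaussian encoder and a standard normal prior, the KL term has a closed form, so the full loss can be sketched without any sampling-based estimate. The code below assumes the encoder outputs a mean and a log-variance per latent dimension. `vae_loss` and its `beta` argument are illustrative names, with `beta=1.0` giving the standard negative ELBO.

```python
import math


def kl_to_standard_normal(mean, log_var):
    """KL( N(mean, diag(exp(log_var))) || N(0, I) ) in closed form.

    Per dimension: 0.5 * (mean^2 + variance - 1 - log(variance)).
    """
    return 0.5 * sum(
        m * m + math.exp(lv) - 1.0 - lv for m, lv in zip(mean, log_var)
    )


def vae_loss(reconstruction_loss, mean, log_var, beta=1.0):
    """Reconstruction loss plus beta-weighted KL divergence."""
    return reconstruction_loss + beta * kl_to_standard_normal(mean, log_var)
```

The KL term is zero only when the encoder outputs exactly the prior (mean 0, variance 1), and it grows as the mean moves away from zero or the variance moves away from one.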

| Component | Function | Key Detail |
|---|---|---|
| Encoder | Maps each input to a distribution in latent space | Outputs a mean vector and a variance vector that define a Gaussian |
| Latent space | Holds the compressed, lower-dimensional representation | Regularized toward a standard normal, so it is continuous and can be sampled |
| Reparameterization trick | Makes the sampling step differentiable | z = mean + standard_deviation * epsilon, with epsilon drawn from N(0, 1) |
| Decoder | Maps a latent vector back to the data space | Trained with a reconstruction loss such as binary cross-entropy or mean squared error |
| Loss function (ELBO) | Balances reconstruction against regularization | Reconstruction loss plus KL divergence, optionally weighted by beta |

VAE vs GAN

VAEs and generative adversarial networks (GANs) are two of the foundational paradigms for generative modeling, and they approach the problem from fundamentally different directions. Understanding their differences helps clarify when each architecture is the better choice.

A GAN consists of two neural networks trained in competition: a generator that produces synthetic data and a discriminator that tries to distinguish real data from generated data. The generator improves by fooling the discriminator, and the discriminator improves by getting better at detecting fakes.

This adversarial training dynamic can produce extremely sharp, photorealistic outputs, but it introduces significant training challenges including mode collapse (where the generator only produces a narrow range of outputs) and training instability.

A VAE takes a cooperative rather than adversarial approach. The encoder and decoder work together, optimizing a single unified loss function. This makes VAE training substantially more stable and predictable. The trade-off is output quality: VAE-generated images tend to be blurrier than GAN outputs because the reconstruction loss averages over possible outputs rather than selecting the sharpest one.

Key differences include:

- Output sharpness. GANs typically produce crisper, more detailed outputs. VAEs tend toward softer, slightly blurred results, especially for high-resolution images.

- Training stability. VAEs train reliably with standard gradient-based optimization. GANs require careful balancing of generator and discriminator learning rates, and training can collapse without warning.

- Latent space structure. VAEs produce a well-organized, continuous latent space that supports interpolation and controlled generation. GAN latent spaces exist but are less structured and harder to interpret.

- Mode coverage. VAEs tend to cover the full data distribution, generating diverse outputs. GANs are prone to mode collapse, where they ignore parts of the data distribution and repeatedly generate similar samples.

- Likelihood estimation. VAEs provide a principled approximation of the data likelihood through the ELBO. GANs have no built-in mechanism for estimating how probable a given data point is under the model.

In practice, the choice between VAE and GAN depends on the application. Tasks that require sharp visual quality, such as face generation or artistic image synthesis, have historically favored GANs. Tasks that require structured latent representations, stable training, or density estimation, such as anomaly detection or scientific data modeling, favor VAEs.

Many modern architectures combine elements of both, using VAE-style encoding with adversarial training objectives to get the benefits of each approach.

VAE Use Cases

Image Generation and Synthesis

The most visible application of VAEs is generating new images by sampling from the learned latent space. Once trained on a dataset of faces, objects, or scenes, a VAE can produce novel examples that capture the statistical patterns of the training data. While the outputs are typically less sharp than those from GANs or diffusion models, VAEs remain useful for applications where diversity and latent space control matter more than pixel-level crispness.

VAEs also power the autoencoder components within latent diffusion models. In architectures like Stable Diffusion, a VAE-trained encoder compresses images into a latent representation, and the diffusion process operates in that compressed space. The VAE decoder then reconstructs the final image from the denoised latent code. This hybrid approach combines the structured latent space of VAEs with the high-quality generation of diffusion models.

Anomaly Detection

VAEs are well suited for anomaly detection because they learn the distribution of normal data during training. When the model encounters an anomalous input, something that falls outside the training distribution, the reconstruction error increases significantly. By setting a threshold on reconstruction error or on the latent space likelihood, organizations can flag unusual patterns automatically.

This approach is used in fraud detection, manufacturing quality control, network security monitoring, and medical diagnostics. The advantage of using a VAE for anomaly detection is that the model only needs examples of normal behavior during training, making it a form of unsupervised learning that does not require labeled anomaly data.
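The thresholding step can be sketched as follows. The idea is to measure reconstruction error on held-out normal data, pick a high quantile of those errors as the cutoff, and flag any new input whose error exceeds it. The function names and the default quantile are illustrative assumptions. In practice the reconstructions would come from a trained VAE.

```python
def reconstruction_error(x, x_hat):
    """Mean squared error between an input and its reconstruction."""
    return sum((a - b) ** 2 for a, b in zip(x, x_hat)) / len(x)


def fit_threshold(normal_errors, quantile=0.99):
    """Pick a cutoff from the errors observed on held-out normal data.

    Returns the sorted error at position int(quantile * n), capped at
    the largest observed error.
    """
    ordered = sorted(normal_errors)
    index = min(int(quantile * len(ordered)), len(ordered) - 1)
    return ordered[index]


def is_anomaly(error, threshold):
    """Flag an input whose reconstruction error exceeds the threshold."""
    return error > threshold
```

The choice of quantile trades false alarms against missed anomalies and usually has to be tuned on validation data.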

Drug Discovery and Molecular Design

In computational chemistry and pharmaceutical research, VAEs are used to generate novel molecular structures. The model is trained on a dataset of known molecules, learning a latent space where chemical properties are organized continuously. Researchers can then navigate this space to find molecules with desired properties, such as binding affinity to a specific protein target or favorable toxicity profiles.

The smooth latent space of a VAE is particularly valuable here because it allows gradient-based optimization over molecular properties. Instead of searching through a combinatorial explosion of discrete molecular structures, researchers can optimize a continuous latent vector and decode it into a candidate molecule. This can shorten the early candidate-search stage of drug discovery, although decoded candidates still need to be checked for chemical validity.
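A minimal sketch of latent-space optimization is shown below. It runs gradient ascent on a latent vector against a property score. The score function `toy_property` and its gradient are hypothetical placeholders with a known optimum. In a real pipeline the score would come from a property predictor trained on latent codes, and the optimized vector would then be passed to the VAE decoder.

```python
def toy_property(z, target=(1.0, -0.5)):
    """Hypothetical differentiable property score, highest at `target`."""
    return -sum((zi - ti) ** 2 for zi, ti in zip(z, target))


def toy_property_grad(z, target=(1.0, -0.5)):
    """Analytic gradient of toy_property with respect to z."""
    return [-2.0 * (zi - ti) for zi, ti in zip(z, target)]


def optimize_latent(z_init, grad_fn, step_size=0.1, steps=100):
    """Plain gradient ascent on a latent vector."""
    z = list(z_init)
    for _ in range(steps):
        grad = grad_fn(z)
        z = [zi + step_size * gi for zi, gi in zip(z, grad)]
    return z


z_start = [0.0, 0.0]
z_best = optimize_latent(z_start, toy_property_grad)
```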

Data Augmentation

Training machine learning models often requires large, diverse datasets. When data is scarce or expensive to collect, VAEs can generate synthetic training examples that augment the real dataset. Because the VAE learns the underlying distribution of the data, the synthetic examples it produces capture realistic variation without simply duplicating existing samples.

This technique is especially useful in medical imaging, where patient data is limited and privacy-sensitive, and in supervised learning tasks where class imbalance makes it difficult to train robust classifiers. By generating additional examples of underrepresented classes, VAEs help improve model performance without requiring additional real-world data collection.

Text and Natural Language Processing

VAEs have been applied to text generation and representation learning in natural language processing. Text VAEs encode sentences into continuous latent vectors and decode them back into text, enabling operations like sentence interpolation, style transfer, and controlled text generation.

The challenge with text VAEs is that language is discrete, and the reconstruction objective can struggle with the mismatch between continuous latent representations and discrete token outputs. Techniques like KL annealing and word dropout help address this, but text generation with VAEs remains more challenging than image generation. Still, VAEs provide useful sentence-level representations for downstream tasks and remain an active research area.
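Word dropout is simple to sketch: during training, tokens fed to the decoder are randomly replaced with a placeholder, so the decoder cannot rely entirely on the preceding words and has to use the latent code. The function below is an illustrative version. The `<unk>` placeholder and the parameter names are assumptions.

```python
import random


def word_dropout(tokens, drop_prob, rng, unk_token="<unk>"):
    """Replace each token with unk_token independently with probability drop_prob."""
    return [unk_token if rng.random() < drop_prob else tok for tok in tokens]


rng = random.Random(0)
sentence = ["the", "model", "learns", "a", "latent", "code"]
decoder_input = word_dropout(sentence, drop_prob=0.3, rng=rng)
```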

Image-to-Image Translation

Conditional VAEs extend the standard architecture by incorporating conditioning information, such as class labels, attributes, or reference images, into both the encoder and decoder. This enables image-to-image translation tasks, where the model transforms an input image from one domain to another while preserving structural content.

Applications include converting sketches to photographs, translating between different artistic styles, and generating diverse output variations for a single input. The probabilistic nature of the VAE means it can produce multiple plausible translations for the same input, which is valuable when the mapping between domains is inherently ambiguous.
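One common way to feed conditioning information into both networks, assumed here for illustration, is to append a one-hot label to the encoder input and to the latent vector before decoding. The helper names below are hypothetical.

```python
def one_hot(label, num_classes):
    """Encode an integer class label as a one-hot list of floats."""
    return [1.0 if i == label else 0.0 for i in range(num_classes)]


def condition(vector, label, num_classes):
    """Append a one-hot label to an input vector or a latent vector."""
    return list(vector) + one_hot(label, num_classes)


# The same label is attached on both sides of the model.
encoder_input = condition([0.2, 0.7], label=1, num_classes=3)
decoder_input = condition([-0.4], label=1, num_classes=3)
```

At generation time, keeping the latent vector fixed and changing only the label asks the decoder for the same underlying content in a different class or domain.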

Challenges and Limitations

Blurry Outputs

The most commonly cited limitation of VAEs is the tendency to produce blurry outputs, particularly for high-resolution images. This blurriness stems from the reconstruction loss, which penalizes pixel-level differences between the input and the reconstruction. Because the decoder must account for the stochasticity in the latent sampling, it tends to produce outputs that average over possible reconstructions, smoothing out fine details.

This is a fundamental consequence of the VAE objective, not simply an engineering problem to be solved with a larger model. Approaches like using perceptual loss functions, adversarial training components, or hierarchical VAE architectures can reduce blurriness, but the issue has not been eliminated entirely. For applications where output sharpness is critical, GANs or diffusion models are often preferred.

Posterior Collapse

Posterior collapse occurs when the encoder learns to ignore the input data and produce the same distribution (the prior) regardless of the input. When this happens, the KL divergence term goes to zero, and the decoder generates outputs based only on the prior, without any input-specific information passing through the latent space.

This problem is especially common in models with powerful decoders, such as autoregressive decoders in text VAEs. The decoder becomes capable enough to generate plausible outputs without relying on the latent code, so the latent representation becomes unused.

Techniques to mitigate posterior collapse include KL annealing (gradually increasing the weight of the KL term during training), free bits (setting a minimum information threshold for each latent dimension), and using weaker decoders that force reliance on the latent code.
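Two of these mitigations are easy to express in code. The sketch below shows a linear KL annealing schedule and a free-bits variant of the KL term in which each latent dimension counts as at least a fixed minimum, so there is no pressure to push a dimension's KL below that floor. Function and parameter names are illustrative, and other schedules (cyclical, sigmoid) are also used in practice.

```python
def linear_kl_weight(step, warmup_steps, max_weight=1.0):
    """Ramp the KL weight linearly from 0 to max_weight over warmup_steps."""
    if warmup_steps <= 0:
        return max_weight
    return max_weight * min(1.0, step / warmup_steps)


def free_bits_kl(kl_per_dim, free_bits):
    """Sum per-dimension KL values, counting each one as at least `free_bits`."""
    return sum(max(kl, free_bits) for kl in kl_per_dim)
```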

Balancing the Loss Terms

The tension between reconstruction quality and latent space regularity is a persistent challenge. The beta-VAE framework provides a tuning knob by weighting the KL divergence term, but selecting the right beta value requires experimentation and depends on the specific dataset and application.

A beta value that is too high produces a highly regularized latent space with good generative properties but poor reconstruction. A beta value that is too low produces excellent reconstructions but a fragmented latent space that does not support coherent generation. Finding the sweet spot is an empirical process, and the optimal value can change as the model architecture, dataset, and training procedure evolve.

Scalability to High Dimensions

VAEs can struggle with very high-dimensional data, such as high-resolution images, long audio sequences, or complex 3D structures. The latent bottleneck must compress an enormous amount of information into a relatively small number of dimensions, and the reconstruction loss must evaluate quality across all output dimensions simultaneously.

Hierarchical VAEs, which use multiple levels of latent variables at different resolutions, partially address this limitation. Models like NVAE (Nouveau VAE) and VDVAE (Very Deep VAE) have demonstrated that carefully designed deep hierarchical architectures can produce competitive image quality. However, these models are significantly more complex to implement and train than standard VAEs.

Limited Applicability to Discrete Data

VAEs are most naturally suited to continuous data like images and audio, where the Gaussian assumptions in the latent space align well with the data structure. Applying VAEs to discrete data, such as text, categorical variables, or graph structures, requires additional techniques to bridge the gap between continuous latent representations and discrete outputs.

The Gumbel-Softmax relaxation and other continuous approximations to discrete distributions have been developed to address this, but working with discrete data in a VAE framework remains more complex than working with continuous data. This limitation is important for teams working in natural language processing or with structured categorical datasets.
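The Gumbel-Softmax relaxation can be sketched with the standard library alone: add Gumbel noise to the category logits, divide by a temperature, and apply a softmax. The result is a probability vector that approaches a one-hot sample as the temperature decreases. The function name and the clipping constant below are illustrative choices.

```python
import math
import random


def gumbel_softmax_sample(logits, temperature, rng):
    """Relaxed one-hot sample: softmax((logits + Gumbel noise) / temperature)."""
    noisy = []
    for logit in logits:
        u = min(max(rng.random(), 1e-12), 1.0 - 1e-12)  # keep log() finite
        gumbel = -math.log(-math.log(u))
        noisy.append((logit + gumbel) / temperature)
    peak = max(noisy)  # subtract the max for numerical stability
    exps = [math.exp(v - peak) for v in noisy]
    total = sum(exps)
    return [e / total for e in exps]


logits = [1.0, 2.0, 0.5]
# Same seed for both calls, so both use the same noise at different temperatures.
soft = gumbel_softmax_sample(logits, temperature=5.0, rng=random.Random(0))
sharp = gumbel_softmax_sample(logits, temperature=0.1, rng=random.Random(0))
```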

How to Get Started with VAEs

Getting started with variational autoencoders is accessible for anyone with a working knowledge of deep learning fundamentals and Python programming. The mathematical foundation draws on Bayes' theorem and variational inference, but practical implementation can begin before mastering every theoretical detail.

The most direct path is to implement a basic VAE on a standard dataset like MNIST or CelebA using PyTorch or TensorFlow. Both frameworks have extensive tutorials and reference implementations for VAEs.

Starting with a simple fully connected VAE on MNIST allows you to understand the encoder-decoder structure, the reparameterization trick, and the ELBO loss function in a controlled setting before moving to more complex architectures and datasets.

Key steps for a practical learning path include:

- Understand the standard autoencoder first. Build a deterministic autoencoder and observe its latent space. Then modify it into a VAE by adding the probabilistic encoding and KL divergence term. Comparing the two helps clarify what the probabilistic approach adds.

- Experiment with the beta parameter. Train the same VAE with different beta values and observe how it affects reconstruction quality versus latent space organization. This hands-on experimentation builds intuition for the reconstruction-regularization trade-off.

- Visualize the latent space. For two-dimensional latent spaces, plot the encoded training data and sample a grid of decoded outputs across the space. This visualization reveals how the model organizes data and whether the latent space is smooth and continuous.

- Move to convolutional architectures. Once comfortable with the basics, replace the fully connected layers with convolutional and transposed convolutional layers to handle image data effectively. This step introduces architectural choices that affect both reconstruction quality and training dynamics.

- Explore conditional VAEs. Add class labels or other conditioning information to the model to control the generation process. Conditional VAEs demonstrate how additional information can shape the latent space and guide output generation.
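For the latent space visualization step, one possible way to choose grid points is to space them at evenly spaced quantiles of the standard normal prior, so the grid covers the region where most of the probability mass lies. The sketch below builds such a grid for a two-dimensional latent space. The function name and quantile range are assumptions, and each coordinate pair would be passed to a trained decoder to render one tile of the plot.

```python
from statistics import NormalDist


def latent_grid(points_per_axis, low=0.05, high=0.95):
    """2D latent coordinates placed at evenly spaced quantiles of N(0, 1).

    Returns a list of rows; each row is a list of (x, y) tuples.
    Requires points_per_axis >= 2.
    """
    normal = NormalDist()
    step = (high - low) / (points_per_axis - 1)
    axis = [normal.inv_cdf(low + i * step) for i in range(points_per_axis)]
    return [[(x, y) for x in axis] for y in axis]


grid = latent_grid(5)
```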

For practitioners in artificial intelligence and data science, understanding VAEs provides a foundation that transfers to many related architectures. The concepts of latent space, probabilistic encoding, and the reconstruction-regularization trade-off appear throughout modern generative modeling, from diffusion models to flow-based generators and beyond.

Teams building capabilities in generative AI will find that a solid understanding of VAEs accelerates learning across the broader field.

FAQ

What is the difference between an autoencoder and a variational autoencoder?

A standard autoencoder maps each input to a single fixed point in a latent space and learns to reconstruct the input from that point. A variational autoencoder maps each input to a probability distribution (defined by a mean and variance) and samples from that distribution before decoding.

This probabilistic approach, combined with a regularization term that keeps the latent distributions close to a standard normal, gives the VAE a smooth, continuous latent space that supports generation of new data. A standard autoencoder can compress and reconstruct data but cannot reliably generate new samples because its latent space has gaps and irregular structure.

Are VAE outputs always blurry?

VAEs do tend to produce softer outputs than GANs or diffusion models, particularly for high-resolution images. This is a consequence of the reconstruction loss averaging over the stochasticity in the latent sampling process. However, blurriness is not absolute. Techniques like hierarchical VAEs, perceptual loss functions, and adversarial training augmentations can significantly sharpen outputs.

For many practical applications, including anomaly detection, data augmentation, and latent space analysis, the level of detail produced by VAEs is entirely sufficient.

Can VAEs be used for tasks other than image generation?

Yes. While image generation is the most visible application, VAEs are used across many domains. In molecular design, they generate novel chemical structures for drug discovery. In natural language processing, they learn sentence-level representations and enable controlled text generation. In anomaly detection, they identify unusual patterns in time-series data, financial transactions, and manufacturing processes. In music, they model and generate audio.

The VAE framework is general enough to apply to any domain where learning a compressed, structured representation of data is valuable.

How does the reparameterization trick work?

The reparameterization trick allows gradients to flow through the stochastic sampling step that connects the encoder to the decoder. Instead of sampling z directly from the distribution parameterized by the encoder (which is not differentiable), the model samples a noise variable epsilon from a standard normal distribution and computes z = mean + standard_deviation * epsilon.

This reformulation makes z a deterministic, differentiable function of the encoder outputs (mean and standard deviation) plus independent noise, enabling standard backpropagation to optimize the entire model end to end.

What is the KL divergence term in the VAE loss?

The KL (Kullback-Leibler) divergence term measures how much the encoder's learned distribution for each data point differs from the prior distribution (typically a standard normal). Minimizing this term regularizes the latent space by pulling all encoded distributions toward a common shape. This regularization prevents the model from creating isolated, arbitrary encodings and ensures the latent space is organized in a way that supports generation.

Without the KL term, nothing stops the encoder from shrinking its variances toward zero, and the model effectively reduces to a standard autoencoder whose latent space cannot be sampled reliably.

When should I use a VAE instead of a GAN?

Choose a VAE when you need a structured, interpretable latent space, stable training without extensive hyperparameter tuning, density estimation capabilities, or when working with limited data. VAEs are particularly strong for anomaly detection, scientific data modeling, and applications where understanding the latent representation matters.

Choose a GAN when output sharpness and photorealism are the primary requirements and you have the engineering resources to manage adversarial training dynamics. Many modern systems combine both approaches to leverage their respective strengths.
