What Is Supervised Learning?

Supervised learning is a category of machine learning in which an algorithm learns from labeled training data to make predictions about new, unseen inputs. Each example in the training set consists of an input paired with the correct output, and the algorithm's job is to learn a mapping function that generalizes from these examples to accurately predict outputs for data it has never encountered.

The term "supervised" comes from the analogy of a teacher overseeing the learning process. The labeled data acts as the instructor, providing the correct answer for every training example so the model can measure its own errors and adjust accordingly.

This distinguishes supervised learning from unsupervised learning, where models must find structure in data without any predefined labels, and from reinforcement learning, where agents learn through trial and reward signals.

Supervised learning is the most widely used paradigm in applied artificial intelligence. It powers email spam filters, medical diagnostic tools, credit scoring systems, voice assistants, and recommendation engines. Whenever a system needs to predict a specific outcome based on historical patterns, supervised learning is almost always the foundational approach.

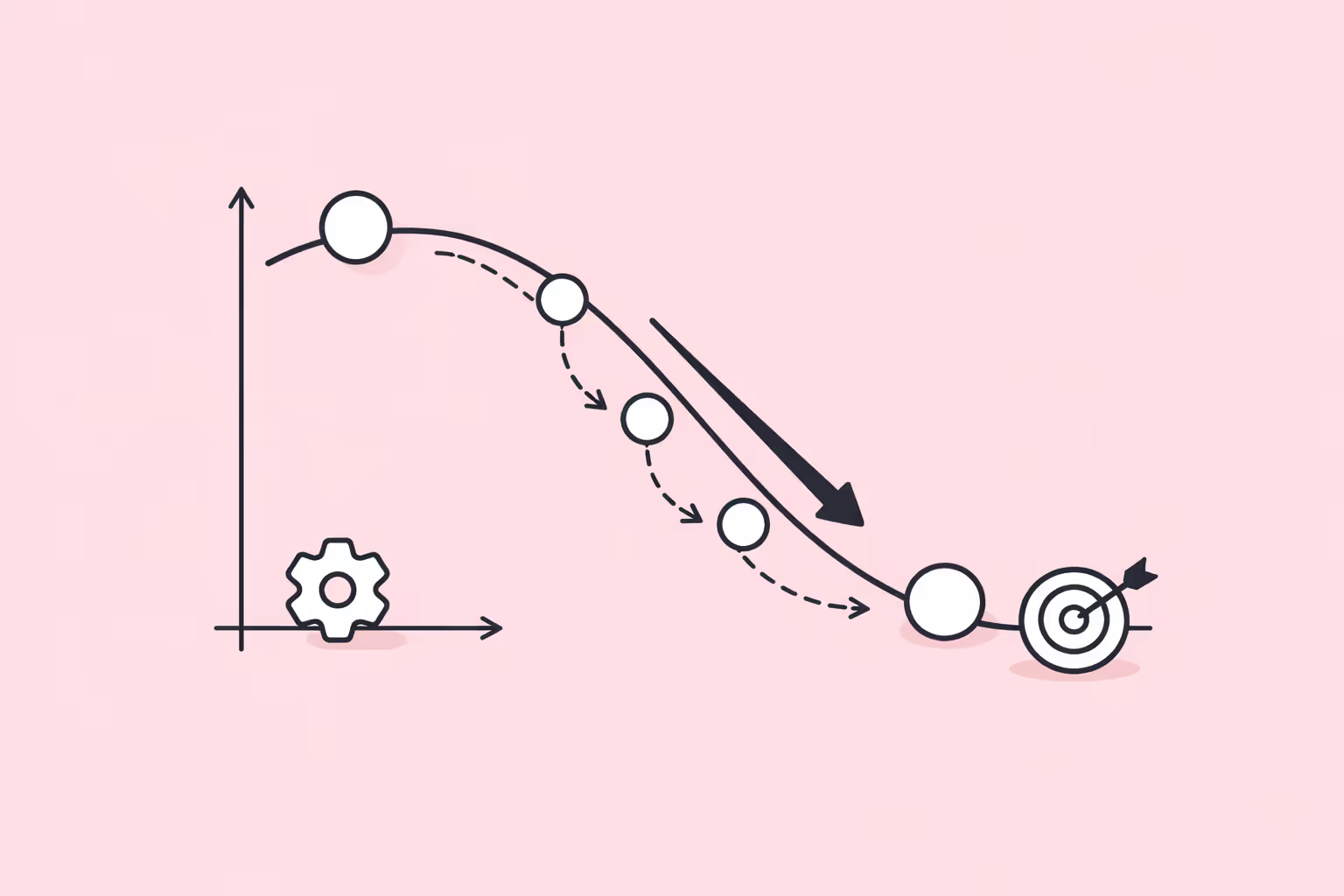

How Supervised Learning Works

Data Collection and Labeling

Every supervised learning project begins with a labeled dataset. Labels are the ground truth outputs that the model aims to predict. In an image classification task, the label might be "cat" or "dog." In a loan approval system, the label might be "approved" or "denied." The quality and representativeness of these labels directly determine how well the model will perform in production.

Acquiring labeled data is often the most expensive and time-consuming part of a supervised learning pipeline. Labels may come from human annotators, domain experts, existing business records, or automated systems. Poorly labeled data introduces noise that degrades model accuracy, so organizations invest heavily in annotation quality control.

Feature Selection and Engineering

Features are the measurable properties of each data point that the model uses to make predictions. In a housing price model, features might include square footage, number of bedrooms, neighborhood, and distance to public transit. Selecting the right features is critical because irrelevant or redundant inputs can confuse the algorithm and reduce performance.

Feature engineering transforms raw data into representations that make patterns easier for the model to detect. This might involve normalizing numerical values, encoding categorical variables, creating interaction terms, or extracting domain-specific signals from unstructured data.

While deep learning automates much of this process for unstructured data like images and text, feature engineering remains essential for tabular datasets and classical algorithms.
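As a rough illustration of two of the transformations mentioned above, the sketch below standardizes a numerical column and one-hot encodes a categorical one using only the Python standard library. The helper names (`standardize`, `one_hot`) and the housing rows are hypothetical and chosen for this example only.

```python
from statistics import mean, pstdev


def standardize(values):
    """Rescale numbers to zero mean and unit (population) standard deviation."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        # A constant column carries no information; map it to zeros.
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


def one_hot(values):
    """Encode categories as 0/1 indicator vectors, one column per category."""
    categories = sorted(set(values))
    rows = [[1 if v == c else 0 for c in categories] for v in values]
    return categories, rows


# Hypothetical housing rows: square footage and neighborhood.
square_feet = [850, 1200, 1500, 2100]
neighborhoods = ["east", "west", "east", "north"]

scaled = standardize(square_feet)
categories, encoded = one_hot(neighborhoods)

# One numeric feature vector per row: [scaled_sqft, east, north, west]
features = [[s] + row for s, row in zip(scaled, encoded)]
```

In practice the scaling statistics would be computed on the training split only and reused for validation and test data, so that no information leaks from held-out examples.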

Training, Validation, and Testing

The labeled dataset is typically divided into three subsets through a process called data splitting. The training set, usually 60 to 80 percent of the data, is what the model learns from. The validation set is used during training to tune hyperparameters and monitor for overfitting. The test set, held out entirely, provides the final unbiased evaluation of model performance.
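A minimal sketch of data splitting, assuming a 70/15/15 split and a simple random shuffle. The function name `train_val_test_split`, the fractions, and the toy dataset are illustrative assumptions, not a prescribed recipe.

```python
import random


def train_val_test_split(examples, val_frac=0.15, test_frac=0.15, seed=0):
    """Shuffle once, then cut the data into three disjoint subsets."""
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)  # seeded for reproducibility
    n = len(shuffled)
    n_test = round(n * test_frac)
    n_val = round(n * val_frac)
    test = shuffled[:n_test]
    val = shuffled[n_test:n_test + n_val]
    train = shuffled[n_test + n_val:]
    return train, val, test


# Toy labeled dataset: (input, label) pairs.
data = [(x, x % 2) for x in range(100)]
train, val, test = train_val_test_split(data)
# With 100 examples this yields 70 training, 15 validation, and 15 test examples.
```

A plain random shuffle is only appropriate when examples are independent. Time-ordered or grouped data usually needs a split that respects that ordering or grouping.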

During training, the model makes predictions on training examples, computes a loss function that quantifies prediction error, and updates its internal parameters to reduce that error. This iterative cycle repeats over many passes through the data. Optimization algorithms like gradient descent and its variants control how parameter updates are calculated and applied, adjusting the model incrementally toward better predictions.
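The predict, measure, update cycle described above can be sketched with batch gradient descent on a one-feature linear model. This is a minimal illustration, assuming mean squared error as the loss; the learning rate, epoch count, and toy data are arbitrary choices for the example.

```python
def fit_linear(xs, ys, learning_rate=0.05, epochs=2000):
    """Fit y ~ w * x + b by batch gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Forward pass: prediction errors on the training set.
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        # Gradients of MSE = (1/n) * sum(error^2) with respect to w and b.
        grad_w = (2 / n) * sum(e * x for e, x in zip(errors, xs))
        grad_b = (2 / n) * sum(errors)
        # Step against the gradient to reduce the loss.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


# Toy data generated from y = 2x + 1, so the fit should recover w ~ 2, b ~ 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]
w, b = fit_linear(xs, ys)
```

Variants such as stochastic or mini-batch gradient descent follow the same loop but compute the gradient on a subset of the data at each step.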

Overfitting occurs when a model memorizes the training data instead of learning generalizable patterns. It performs well on training examples but poorly on new data. Regularization and early stopping are standard strategies to limit it, and cross-validation helps detect it. Underfitting, the opposite problem, happens when a model is too simple to capture the patterns in the data.
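Early stopping can be illustrated with a small sketch that scans recorded validation losses and stops once they have failed to improve for a set number of epochs. The function name, the `patience` value, and the loss values are hypothetical; a real training loop would compute each loss after an epoch of training rather than read it from a list.

```python
def early_stopping(val_losses, patience=3):
    """Return (best_epoch, stopped_epoch) for a sequence of validation losses.

    Training stops once `patience` epochs pass without a new best loss.
    """
    best_loss = float("inf")
    best_epoch = None
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            return best_epoch, epoch
    return best_epoch, len(val_losses) - 1


# Hypothetical validation losses: improving at first, then rising as the
# model starts to overfit the training set.
losses = [0.90, 0.70, 0.55, 0.50, 0.52, 0.56, 0.61, 0.70]
best_epoch, stopped_epoch = early_stopping(losses)
# best_epoch is 3 (loss 0.50); training stops at epoch 6.
```

The model parameters saved at `best_epoch` are the ones that would normally be kept.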

Model Evaluation

After training, the model is evaluated on the held-out test set using metrics appropriate to the task. For classification, common metrics include accuracy, precision, recall, F1 score, and area under the ROC curve. For regression, mean absolute error, mean squared error, and R-squared are standard.

No single metric tells the whole story. A medical diagnostic model might have high overall accuracy but fail to detect rare conditions, making recall for the positive class a more critical metric than aggregate accuracy. Choosing evaluation metrics requires understanding the domain, the cost of different error types, and the intended use case.
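The gap between accuracy and recall is easy to see in a small worked example. The sketch below computes the standard classification metrics from scratch for a hypothetical screening scenario in which only 5 of 100 patients have the condition; the data and function name are invented for illustration.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Compute accuracy, precision, recall, and F1 for a binary task."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)

    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Hypothetical screening data: 5 positive cases out of 100,
# and a model that detects only one of them.
y_true = [1] * 5 + [0] * 95
y_pred = [1] + [0] * 4 + [0] * 95

metrics = classification_metrics(y_true, y_pred)
# accuracy is 0.96, yet recall is only 0.2: four of five cases are missed.
```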

| Component | Function | Key Detail |
|---|---|---|
| Data Collection and Labeling | Assembles a labeled dataset whose labels are the ground-truth outputs the model aims to predict. | In an image classification task, the label might be "cat" or "dog." |
| Feature Selection and Engineering | Chooses and transforms the measurable properties the model uses to make predictions. | In a housing price model, features might include square footage, number of bedrooms, neighborhood, and distance to public transit. |
| Training, Validation, and Testing | Divides the labeled dataset into three subsets and fits the model by iteratively reducing a loss function. | The training set is usually 60 to 80 percent of the data. |
| Model Evaluation | Evaluates the trained model on the held-out test set using metrics appropriate to the task. | For classification, common metrics include accuracy, precision, recall, F1 score, and area under the ROC curve. |

Types of Supervised Learning

Supervised learning divides into two primary categories based on the nature of the output variable.

Classification

Classification algorithms predict discrete categories or classes. The output is a label from a finite set of possibilities. Binary classification involves two classes, such as spam versus not spam or malignant versus benign. Multiclass classification extends this to three or more categories, such as classifying images into dozens of object types.

Common classification algorithms include:

- Logistic regression. Despite its name, logistic regression is a classification algorithm that estimates the probability of an input belonging to a particular class. It is simple, interpretable, and effective for linearly separable problems.

- Decision trees. Decision trees split data along feature thresholds to create a tree of decisions leading to a predicted class. They are intuitive and easy to visualize but prone to overfitting without pruning or ensemble methods.

- Random forests. A random forest builds many decision trees on random subsets of data and features, then aggregates their predictions through majority voting. This ensemble approach reduces overfitting and improves generalization.

- Support vector machines (SVMs). SVMs find the hyperplane that maximizes the margin between classes. They are effective in high-dimensional spaces and work well when the classes are separated by a clear margin.

- Neural networks. Neural networks with one or more hidden layers can learn complex, non-linear decision boundaries. For tasks involving images, text, or audio, deep neural networks trained with backpropagation are the dominant approach.
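To make the first algorithm in the list concrete, here is a minimal from-scratch sketch of binary logistic regression on a single feature, trained by gradient descent on the log loss. The data (hours studied versus pass/fail), learning rate, and epoch count are hypothetical. In practice a library such as scikit-learn would normally be used instead.

```python
import math


def sigmoid(z):
    """Map a real-valued score to a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(xs, ys, learning_rate=0.1, epochs=5000):
    """Fit P(y=1 | x) = sigmoid(w * x + b) by gradient descent on log loss."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        probs = [sigmoid(w * x + b) for x in xs]
        # Gradient of the mean log loss with respect to w and b.
        grad_w = sum((p - y) * x for p, y, x in zip(probs, ys, xs)) / n
        grad_b = sum(p - y for p, y in zip(probs, ys)) / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


# Hypothetical data: hours studied and whether the student passed (1) or not (0).
# The classes overlap slightly, so they are not perfectly separable.
hours = [1, 2, 3, 4, 5, 6, 7, 8]
passed = [0, 0, 0, 1, 0, 1, 1, 1]

w, b = fit_logistic(hours, passed)


def predict_proba(x):
    """Estimated probability of passing after x hours of study."""
    return sigmoid(w * x + b)


# Classify by thresholding the estimated probability at 0.5.
label_for_2_hours = int(predict_proba(2) >= 0.5)
label_for_7_hours = int(predict_proba(7) >= 0.5)
```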

Regression

Regression algorithms predict continuous numerical values. Instead of assigning a class label, the model outputs a number on a continuous scale. Predicting stock prices, estimating delivery times, and forecasting energy consumption are all regression problems.

Common regression algorithms include:

- Linear regression. The simplest regression method fits a straight line (or hyperplane in multiple dimensions) to minimize the sum of squared errors between predictions and actual values. It is fast, interpretable, and serves as a baseline for more complex models.

- Polynomial regression. An extension of linear regression that fits a polynomial curve to capture non-linear relationships. It is more flexible but more susceptible to overfitting as the degree increases.

- Ridge and lasso regression. These are regularized versions of linear regression that add penalty terms to the loss function. Ridge regression (L2) penalizes large coefficients, and lasso regression (L1) can drive coefficients to zero, effectively performing feature selection.

- Gradient-boosted trees. Algorithms like XGBoost, LightGBM, and CatBoost build ensembles of decision trees sequentially, with each new tree correcting the errors of the previous ones. These are among the most effective algorithms for structured, tabular data.

- Neural network regressors. The same architectures used for classification can predict continuous values by using a linear (identity) output layer and a regression loss such as mean squared error. Deep learning regressors are common in time series forecasting and complex predictive modeling tasks.
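The effect of an L2 penalty can be seen in the single-feature case, where ordinary least squares and ridge regression both have simple closed-form solutions. The sketch below assumes the intercept is left unpenalized, which is a common convention; the function name, data, and penalty value are illustrative.

```python
def fit_ridge_1d(xs, ys, penalty=0.0):
    """Closed-form fit of y ~ slope * x + intercept with an L2 penalty on slope.

    With penalty=0.0 this reduces to ordinary least squares.
    """
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    slope = sxy / (sxx + penalty)  # the penalty shrinks the slope toward zero
    intercept = y_mean - slope * x_mean
    return slope, intercept


# Toy data lying close to y = 2x.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.1, 3.9, 6.2, 7.8, 10.1]

ols_slope, ols_intercept = fit_ridge_1d(xs, ys)
ridge_slope, ridge_intercept = fit_ridge_1d(xs, ys, penalty=10.0)
# The ridge slope is smaller in magnitude than the least-squares slope.
```

A lasso (L1) penalty has no equally simple closed form in general and is usually fitted with an iterative solver.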

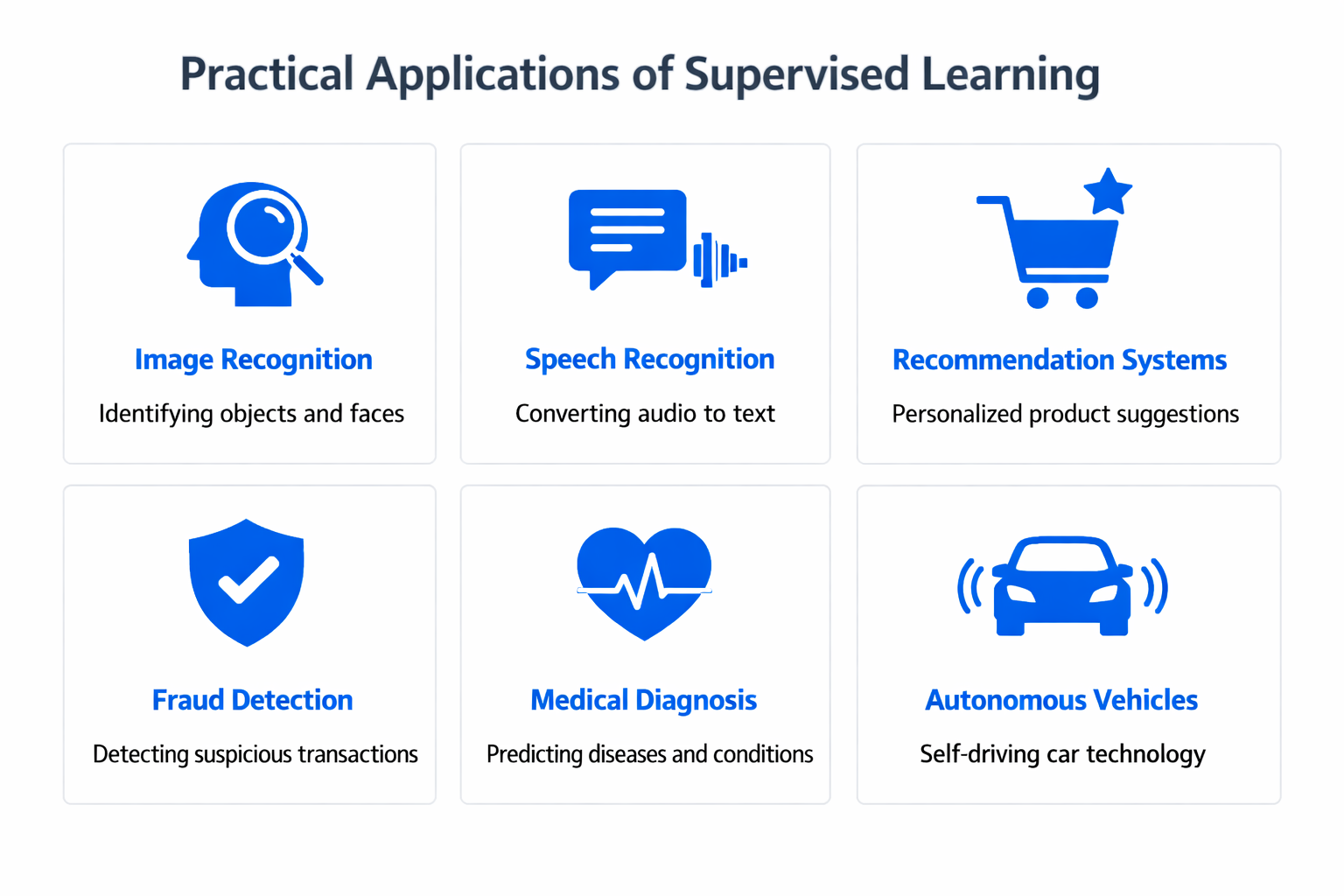

Supervised Learning Use Cases

Healthcare and Medical Diagnostics

Supervised learning powers diagnostic systems that classify medical images, predict patient outcomes, and flag anomalies in lab results. A model trained on thousands of labeled X-rays can detect pneumonia, fractures, or tumors with accuracy that, in some published studies, approaches that of specialist radiologists. Hospitals use supervised models to estimate readmission risk, predict sepsis onset, and prioritize patients in emergency departments.

Pharmaceutical research relies on supervised learning for drug discovery, using molecular structure data labeled with efficacy and toxicity outcomes to predict which candidate compounds are worth advancing to clinical trials. These applications reduce the time and cost of bringing new treatments to market.

Finance and Risk Assessment

Banks and financial institutions use supervised learning extensively for credit scoring, fraud detection, and market forecasting. Credit models trained on historical loan data predict the probability of default for new applicants. Fraud detection systems classify transactions as legitimate or suspicious in real time, processing millions of events per day.

Algorithmic trading systems use regression models to forecast asset prices and optimize portfolio allocations. Insurance companies apply supervised learning to assess claim risk and set premiums based on policyholder attributes and historical claim data.

Natural Language Processing

Many natural language processing tasks are supervised learning problems at their core. Sentiment analysis classifies text as positive, negative, or neutral. Named entity recognition labels words in a sentence as persons, organizations, or locations. Text classification routes customer support tickets to the appropriate department.

Transformer models fine-tuned on labeled datasets have pushed the state of the art across virtually all NLP benchmarks. These models are pre-trained on large text corpora using self-supervised objectives and then fine-tuned with labeled data for specific downstream tasks, combining the benefits of large-scale representation learning with the precision of supervised training.

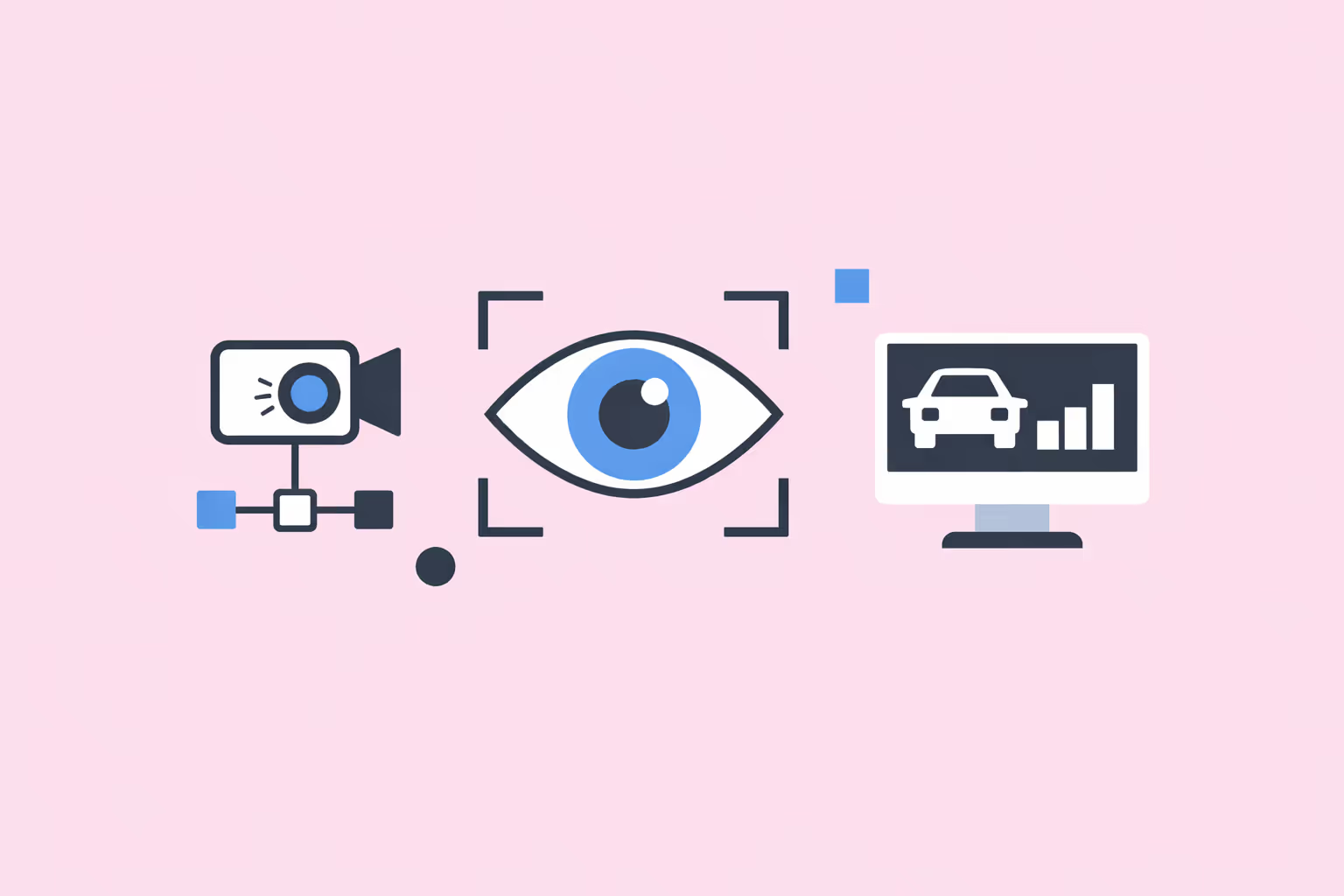

Computer Vision

Image classification, object detection, and semantic segmentation are all supervised learning tasks. Self-driving cars use supervised models trained on labeled road imagery to detect pedestrians, traffic signs, and lane markings. Manufacturing plants deploy supervised vision systems to identify product defects on assembly lines. Agriculture companies use aerial imagery classified by supervised models to monitor crop health and detect disease.

Education and Adaptive Learning

Supervised learning enables adaptive learning platforms to predict student performance and personalize learning pathways. A model trained on historical engagement data can forecast which students are at risk of falling behind, allowing instructors to intervene early. Automated essay scoring systems use supervised models to evaluate written responses against rubric-based labels.

These approaches connect directly to how organizations use data to build more effective training programs. Platforms that leverage machine learning can tailor content difficulty, recommend resources, and adjust pacing based on individual learner signals.

Challenges and Limitations

Label Dependency and Cost

Supervised learning requires labeled data, and obtaining high-quality labels is often the primary bottleneck. Labeling medical images requires board-certified specialists. Annotating legal documents requires lawyers. Every domain carries its own labeling cost, and scaling annotation while maintaining quality is a persistent operational challenge.

When labels are scarce, techniques like semi-supervised learning (which combines a small labeled set with a large unlabeled set) and active learning (which selectively requests labels for the most informative examples) can stretch limited annotation budgets. Transfer learning, particularly with pre-trained deep learning models, also reduces the volume of labeled data needed for new tasks.
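One common active learning strategy is uncertainty sampling: ask annotators to label the examples the current model is least sure about. The sketch below assumes a binary classifier that outputs a probability for each unlabeled example; the function name, probabilities, and budget are hypothetical.

```python
def most_uncertain(probabilities, budget):
    """Return indices of the `budget` examples with probability closest to 0.5."""
    ranked = sorted(range(len(probabilities)),
                    key=lambda i: abs(probabilities[i] - 0.5))
    return ranked[:budget]


# Hypothetical model outputs for six unlabeled examples.
unlabeled_probs = [0.97, 0.52, 0.10, 0.45, 0.80, 0.61]

to_label = most_uncertain(unlabeled_probs, budget=2)
# Examples 1 and 3 sit nearest the decision boundary, so they are sent
# to annotators first.
```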

Bias and Fairness

A supervised learning model can only be as fair as the data it trains on. If historical data reflects discriminatory patterns, the model will reproduce and potentially amplify those biases. A hiring model trained on biased past decisions will learn to prefer the same demographic profiles. A criminal risk model trained on biased policing data will assign higher risk scores to over-policed communities.

Addressing machine learning bias requires careful data auditing, bias measurement across protected attributes, and fairness-aware training procedures. It is not a purely technical problem. It demands cross-functional collaboration between data scientists, domain experts, and ethicists.
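A simple starting point for bias measurement is to compare how often the model produces a positive prediction for each group. The sketch below computes per-group selection rates and the gap between them, sometimes called the demographic parity difference. The predictions and group labels are invented, and this is only one of several fairness measures, none of which is sufficient on its own.

```python
from collections import defaultdict


def selection_rates(predictions, groups):
    """Fraction of positive (1) predictions within each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


# Hypothetical model decisions (1 = selected) and a protected attribute.
preds = [1, 1, 0, 1, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

rates = selection_rates(preds, groups)
parity_gap = max(rates.values()) - min(rates.values())
# Group "a" is selected 75% of the time and group "b" 25%, a gap of 0.5.
```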

Overfitting and Generalization

Models that fit training data too closely fail to generalize to new observations. This is especially common with complex models trained on small datasets. A decision tree with no depth limit can memorize the training set almost perfectly yet perform poorly in production. Regularization, dropout, and ensemble methods are standard countermeasures, and cross-validation helps reveal the problem before deployment.

The related problem of distribution shift occurs when the real-world data encountered during deployment differs systematically from the training data. A model trained on summer weather data will underperform in winter. Continuous monitoring and periodic retraining are necessary to maintain performance as conditions change.
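Monitoring for distribution shift can start with something as simple as comparing a feature's live mean against its training mean. The sketch below expresses that difference in units of the training standard deviation. The function name, temperature readings, and the threshold of 3.0 are all assumptions for illustration; production systems typically use more robust statistical tests across many features.

```python
from statistics import mean, stdev


def mean_shift_score(train_values, live_values):
    """Absolute difference in means, in units of the training standard deviation."""
    return abs(mean(live_values) - mean(train_values)) / stdev(train_values)


# Hypothetical temperature feature: training data from summer,
# live data from winter.
summer_temps = [24.0, 26.5, 28.0, 25.5, 27.0, 29.5]
winter_temps = [2.0, -1.5, 0.5, 3.0, 1.0, -0.5]

score = mean_shift_score(summer_temps, winter_temps)

# Assumed threshold: flag the feature for review and possible retraining.
needs_review = score > 3.0
```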

Scalability and Computational Demands

Training supervised learning models on large datasets requires significant computational resources. Deep neural networks in particular demand GPU or TPU hardware, and training runs can take days or weeks for large-scale tasks. Frameworks like PyTorch have optimized distributed training, but the cost of compute remains a barrier for smaller organizations and research groups.

Feature engineering at scale also introduces infrastructure challenges. Processing billions of records, maintaining feature stores, and serving predictions with low latency all require purpose-built data pipelines that go well beyond the model training code itself.

How to Get Started

Building competence in supervised learning involves developing skills across data handling, algorithm selection, and iterative experimentation.

- Learn the mathematical foundations. Linear algebra, calculus, probability, and statistics underpin all supervised learning algorithms. Understanding how a loss function works, why gradient descent converges, and what a probability distribution represents is a prerequisite for designing or debugging algorithms in any meaningful way.

- Start with classical algorithms. Before moving to deep learning, build strong intuition with logistic regression, decision trees, and linear regression. These algorithms are transparent enough to reason about directly, and they establish baselines that more complex models should outperform.

- Master a programming framework. Python with scikit-learn is the standard starting point for classical supervised learning. For deep learning tasks, PyTorch and TensorFlow provide the tools for building, training, and evaluating neural networks. Both ecosystems have extensive documentation and community support.

- Practice with benchmark datasets. The UCI Machine Learning Repository, Kaggle, and Hugging Face Datasets host thousands of labeled datasets spanning classification and regression tasks. Working through well-known problems like Titanic survival prediction, MNIST digit classification, or Boston housing price estimation builds practical experience with end-to-end workflows.

- Prioritize evaluation rigor. Always split your data properly. Never evaluate on data the model has seen during training. Use cross-validation for small datasets. Track multiple metrics, not just accuracy. Build the habit of diagnosing where and why your model fails, not just measuring aggregate performance.

- Iterate on features before architectures. In most applied settings, improving data quality and feature engineering yields larger performance gains than switching algorithms. Clean your data, handle missing values thoughtfully, and engineer features that encode domain knowledge before reaching for a more complex model.
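The cross-validation advice in the list above can be sketched as a generator that yields train and validation indices for each fold. This is a minimal illustration, assuming the examples have already been shuffled; the function name and fold count are arbitrary choices for the example.

```python
def k_fold_indices(n_examples, k):
    """Yield (train_indices, validation_indices) for each of k folds."""
    indices = list(range(n_examples))
    # Spread any remainder over the first folds so sizes differ by at most one.
    fold_sizes = [n_examples // k + (1 if i < n_examples % k else 0)
                  for i in range(k)]
    start = 0
    for size in fold_sizes:
        val = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        yield train, val
        start += size


folds = list(k_fold_indices(10, 3))
# Each example is used for validation exactly once across the three folds.
```

A model would be trained once per fold on the training indices and scored on the validation indices, and the scores averaged to estimate generalization performance.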

Teams exploring supervised learning as part of a broader AI initiative should consider how these techniques integrate with clustering for exploratory analysis and predictive modeling pipelines for production deployment.

FAQ

What is the difference between supervised and unsupervised learning?

Supervised learning uses labeled data where each input has a known correct output. The model learns to map inputs to outputs by minimizing prediction error. Unsupervised learning works with unlabeled data and seeks to discover hidden structure, such as grouping similar items into clusters or reducing data dimensionality. The choice between them depends on whether labeled data is available and what the goal of the analysis is.

What types of problems is supervised learning best suited for?

Supervised learning is ideal when you have a clearly defined output variable and sufficient labeled examples. Classification tasks like spam detection, image recognition, and disease diagnosis are natural fits. Regression tasks like price prediction, demand forecasting, and risk scoring are equally well suited. If the relationship between inputs and outputs can be learned from historical examples, supervised learning is likely the right approach.

How much labeled data does supervised learning require?

There is no universal threshold. Simple linear models can perform well with a few hundred examples. Complex deep learning models may require tens of thousands or millions. The amount depends on the complexity of the task, the number of features, the noise in the data, and the algorithm being used. Transfer learning and data augmentation can reduce requirements for deep learning, while classical algorithms typically need less data overall.

Can supervised learning handle real-time predictions?

Yes. Once trained, most supervised learning models can make predictions in milliseconds, although very large models may need dedicated hardware to do so. Training is usually the computationally expensive phase, while inference (making predictions on new data) is comparatively fast. Real-time applications like fraud detection, recommendation systems, and autonomous driving all rely on supervised models making predictions at scale with low latency.

How does supervised learning relate to deep learning?

Deep learning is a subset of machine learning that uses neural networks with many layers. When a deep learning model is trained on labeled data to predict specific outputs, it is performing supervised learning. The two are not mutually exclusive. Deep learning can also operate in unsupervised or self-supervised modes, but its most common and commercially successful applications are supervised.
