What Is Agentic AI?

Agentic AI refers to artificial intelligence systems that pursue goals through autonomous, multi-step reasoning and action.

Rather than responding to a single prompt with a single output, an agentic AI system interprets an objective, breaks it into subtasks, selects tools or methods to complete each subtask, and adjusts its approach based on intermediate results.

The distinction matters because most AI tools people interact with today are reactive. A user provides a prompt, the model generates a response, and the interaction ends.

Agentic AI operates differently. It maintains a goal across multiple steps, makes decisions about what to do next, and can interact with external systems such as databases, APIs, or files to accomplish its objective.

Consider the difference between asking an AI to "write a summary of this document" and instructing it to "research competitor pricing, compile the data into a spreadsheet, identify the three lowest-cost options, and draft a recommendation email."

The first task is a single generation step. The second requires planning, tool use, intermediate evaluation, and sequenced execution. That second pattern is what makes a system agentic.

Agentic AI does not require sentience, general intelligence, or full autonomy. It requires the ability to decompose goals, execute multi-step plans, use external tools, and revise its approach when something does not work.

These capabilities can operate within tightly defined boundaries, which is how most production deployments function today.

How Agentic AI Differs from Generative AI

Generative AI produces content. Given an input, it generates text, images, code, or audio. Large language models, diffusion models, and code generation tools all fall into this category. They are powerful at producing outputs but do not, on their own, pursue objectives across multiple steps.

Agentic AI builds on generative capabilities but adds a layer of autonomy and orchestration. The difference is not in the underlying model but in how the system is structured to use that model.

Three distinctions clarify the boundary:

Scope of action. Generative AI completes a single task per interaction. Agentic AI chains multiple tasks together, deciding what to do next based on what happened before. A generative model writes code. An agentic system writes code, runs it, reads the error output, diagnoses the problem, rewrites the code, and tests again.

External interaction. Generative AI operates within its own context window. Agentic AI reaches beyond it. It calls APIs, queries databases, reads files, triggers workflows, and writes to external systems. This interaction with the environment is what enables multi-step execution.

Persistence and adaptation. A generative model forgets the interaction once it ends. Agentic systems maintain state across steps. They track what has been tried, what succeeded, what failed, and what remains. This working memory allows them to adjust strategy mid-execution rather than starting from scratch.

The practical implication for organizations: generative AI is a tool you use. Agentic AI is a system you deploy. The operational requirements, risk profiles, and governance needs differ significantly between the two.

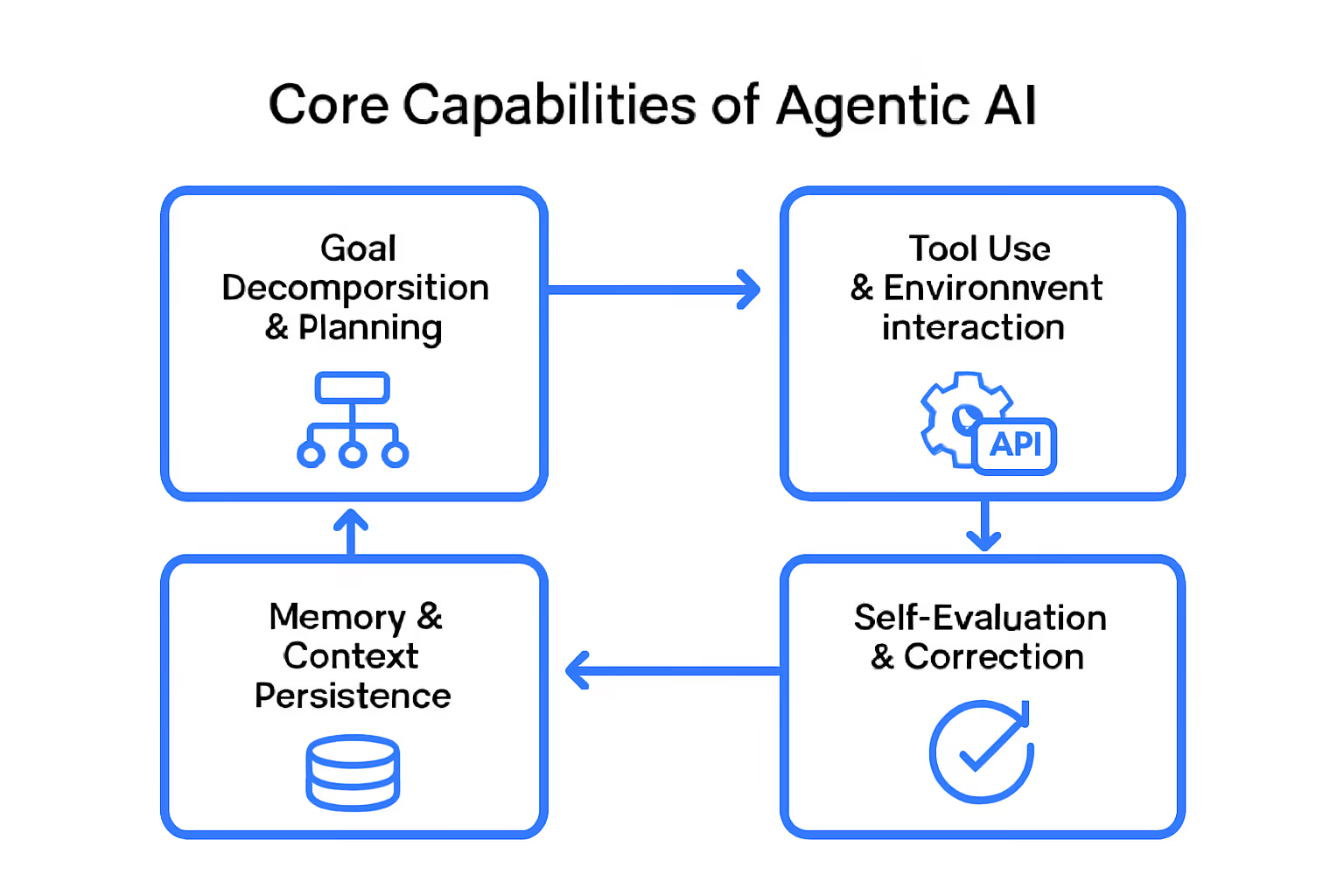

Core Capabilities of Agentic AI Systems

Calling a system "agentic" implies specific technical capabilities. Four are foundational. Without any one of them, the system is either a standard generative model or a scripted automation, not an agent.

Goal Decomposition and Planning

An agentic system receives a high-level objective and breaks it into executable steps. This is not keyword extraction or template matching. It requires the model to reason about dependencies, sequence actions logically, and anticipate what information each step will need from previous steps.
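As a minimal sketch of the dependency-ordering part, assume the model has already returned its subtasks along with the earlier outputs each one needs. Sequencing them is then a topological sort, shown here with Python's standard `graphlib` module. The subtask names are hypothetical, borrowed from the competitor-pricing example above.

```python
from graphlib import TopologicalSorter

# Hypothetical decomposition of the competitor-pricing goal: each subtask
# maps to the set of subtasks whose outputs it needs first.
plan = {
    "research_competitor_pricing": set(),
    "compile_spreadsheet": {"research_competitor_pricing"},
    "identify_three_lowest": {"compile_spreadsheet"},
    "draft_recommendation_email": {"identify_three_lowest"},
}


def execution_order(dependencies: dict[str, set[str]]) -> list[str]:
    """Return subtasks ordered so every dependency runs before its dependents.

    Raises graphlib.CycleError if the plan is circular, which signals that
    the decomposition itself needs to be regenerated.
    """
    return list(TopologicalSorter(dependencies).static_order())


print(execution_order(plan))
```

Real plans are rarely a single chain; independent subtasks simply come out of the sort in some valid order, and could be run in parallel.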

Planning quality determines agent effectiveness. A poorly planned sequence wastes compute, hits dead ends, or produces outputs that do not serve the original goal.

The most capable agentic frameworks use iterative planning: the agent creates an initial plan, begins execution, and revises the plan as new information emerges.
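The plan-execute-revise cycle can be sketched as a loop in which a failed step triggers a fresh plan built from everything observed so far. `make_plan` and `execute` are hypothetical hooks standing in for a model call and a tool call. The toy versions replan to a fallback source when the primary one is unavailable, and `max_steps` bounds the loop so a planner that keeps failing cannot run forever.

```python
from typing import Callable


def run_with_replanning(
    goal: str,
    make_plan: Callable[[str, list[str]], list[str]],
    execute: Callable[[str], tuple[bool, str]],
    max_steps: int = 20,
) -> list[str]:
    """Execute a plan step by step, asking for a new plan whenever a step fails."""
    history: list[str] = []
    plan = make_plan(goal, history)
    while plan and len(history) < max_steps:
        step = plan.pop(0)
        ok, observation = execute(step)
        history.append(f"{step}: {observation}")
        if not ok:
            # The observation invalidated the current plan; revise it using all history.
            plan = make_plan(goal, history)
    return history


# Toy stand-ins for a model call (planner) and a tool call (executor).
def toy_planner(goal: str, history: list[str]) -> list[str]:
    if "primary_source: unavailable" in history:
        return ["fallback_source", "summarize"]
    return ["primary_source", "summarize"]


def toy_executor(step: str) -> tuple[bool, str]:
    if step == "primary_source":
        return False, "unavailable"
    return True, "done"


print(run_with_replanning("price report", toy_planner, toy_executor))
```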

Tool Use and Environment Interaction

Agents act on the world. They execute code, call APIs, search the web, read documents, write files, and trigger workflows in external systems. Tool use is what separates an agent from a chatbot that merely describes what could be done.

Each tool call introduces a new variable. The agent must select the right tool, construct the correct input, interpret the output, and decide what to do next based on the result. Errors in tool selection or input construction cascade through subsequent steps.
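A minimal sketch of the dispatch step, assuming the model proposes each tool call as a JSON object with hypothetical `tool` and `args` fields. The two tools are placeholders for real API or database wrappers. Errors come back as observations rather than exceptions, so the agent can see a bad tool choice or malformed input and correct it instead of carrying it into the next step.

```python
import json
from typing import Any, Callable


# Hypothetical tools; real ones would wrap APIs, databases, or files.
def get_order_status(order_id: str) -> str:
    return f"order {order_id}: shipped"


def convert_currency(amount: float, rate: float) -> float:
    return round(amount * rate, 2)


TOOLS: dict[str, Callable[..., Any]] = {
    "get_order_status": get_order_status,
    "convert_currency": convert_currency,
}


def dispatch(call_json: str) -> dict[str, Any]:
    """Run one model-proposed tool call and return an observation for the next step."""
    try:
        call = json.loads(call_json)
    except json.JSONDecodeError as exc:
        return {"ok": False, "error": f"malformed call: {exc.msg}"}
    name = call.get("tool") if isinstance(call, dict) else None
    if not isinstance(name, str) or name not in TOOLS:
        return {"ok": False, "error": f"unknown tool: {name!r}"}
    try:
        result = TOOLS[name](**call.get("args", {}))
    except TypeError as exc:
        # Wrong, missing, or mistyped arguments for this tool's signature.
        return {"ok": False, "error": f"bad arguments: {exc}"}
    return {"ok": True, "result": result}


print(dispatch('{"tool": "get_order_status", "args": {"order_id": "A17"}}'))
print(dispatch('{"tool": "get_order_status", "args": {"id": "A17"}}'))
```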

Memory and Context Persistence

Agents maintain state across their execution. Short-term memory holds the current plan, intermediate results, and pending actions. Some systems also implement long-term memory, storing lessons from past executions to improve future performance.

Context persistence is constrained by model architecture. Language models have finite context windows. Agents that handle complex, multi-step tasks must manage what stays in context and what gets summarized or stored externally. Memory management is one of the hardest engineering problems in agentic system design.
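One common pattern is to keep recent entries verbatim and fold the oldest into a running summary once a budget is exceeded. The sketch below assumes a word count as a rough stand-in for tokens and a hypothetical `summarize` hook where a real system would call a model; the toy summarizer in the usage keeps only each entry's step label.

```python
from dataclasses import dataclass, field
from typing import Callable


def count_tokens(text: str) -> int:
    # Rough stand-in: a real system would use the model's own tokenizer.
    return len(text.split())


@dataclass
class WorkingMemory:
    budget: int  # target maximum tokens kept in context
    summarize: Callable[[list[str]], str]  # hypothetical hook, e.g. a model call
    entries: list[str] = field(default_factory=list)
    summary: str = ""

    def size(self) -> int:
        return count_tokens(self.summary) + sum(count_tokens(e) for e in self.entries)

    def add(self, entry: str) -> None:
        self.entries.append(entry)
        # Fold the oldest verbatim entries into the summary until the budget fits.
        # The newest entry is always kept verbatim, so one oversized entry can
        # still push the total over budget.
        while self.size() > self.budget and len(self.entries) > 1:
            oldest = self.entries.pop(0)
            pieces = [self.summary, oldest] if self.summary else [oldest]
            self.summary = self.summarize(pieces)

    def context(self) -> str:
        header = [f"Summary so far: {self.summary}"] if self.summary else []
        return "\n".join(header + self.entries)


# Toy summarizer that keeps only the step labels.
memory = WorkingMemory(budget=10, summarize=lambda parts: "; ".join(p.split(":")[0] for p in parts))
for note in ["step1: fetched 40 rows from pricing API",
             "step2: filtered to 12 rows",
             "step3: sorted by price"]:
    memory.add(note)
print(memory.context())
```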

Self-Evaluation and Correction

Capable agents review their own outputs. After completing a step, the agent evaluates whether the result meets the expected criteria. If it does not, the agent can retry with a different approach, request additional information, or escalate to a human.

Self-correction prevents small errors from compounding into large failures. Without it, an agent that makes a wrong assumption in step two will confidently build incorrect outputs through steps three through ten. The most reliable agentic systems treat self-evaluation as a required checkpoint, not an optional feature.
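A self-evaluation checkpoint can be as simple as an acceptance test run after each step, with fallbacks and an explicit escalation path. In this sketch the approaches and the acceptance check are hypothetical; the example validates that an extracted invoice total is a positive number before anything downstream uses it.

```python
from typing import Callable, Optional


def checkpointed_step(
    attempts: list[Callable[[], str]],
    meets_criteria: Callable[[str], bool],
) -> tuple[str, Optional[str]]:
    """Try each approach in order and accept the first result that passes the check.

    Returns ("escalate", None) if every approach fails, so a human can step in
    before later steps build on a bad result.
    """
    for attempt in attempts:
        result = attempt()
        if meets_criteria(result):
            return "accepted", result
    return "escalate", None


def looks_like_amount(text: str) -> bool:
    # Acceptance check for an extracted invoice total: a positive number.
    try:
        return float(text) > 0
    except ValueError:
        return False


# Hypothetical primary extraction (fails the check) and fallback (passes).
print(checkpointed_step([lambda: "N/A", lambda: "1249.50"], looks_like_amount))
```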

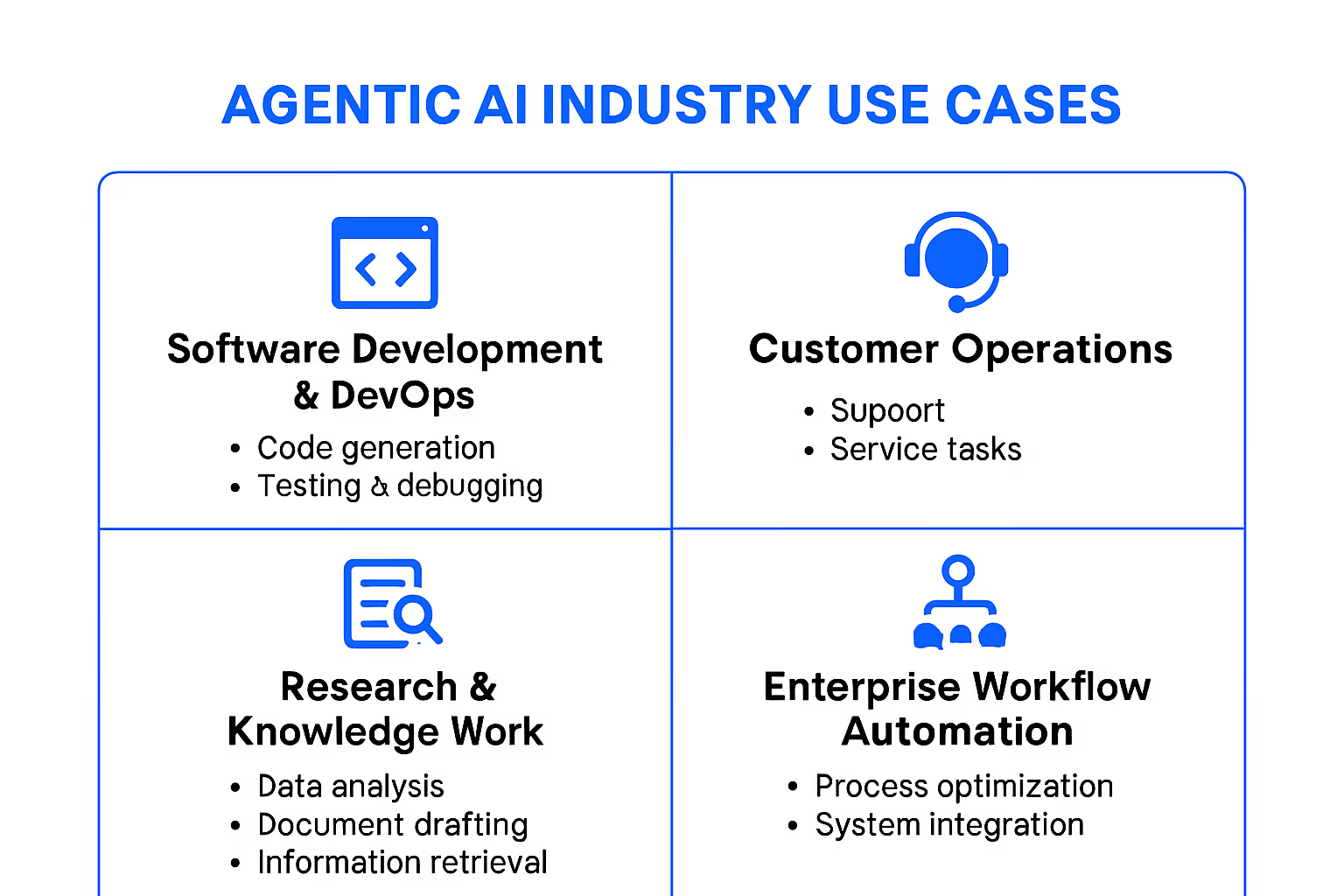

Real-World Use Cases for Agentic AI

The capabilities described above are not theoretical. Agentic AI systems are deployed across industries where tasks require multi-step execution, external system interaction, and adaptive decision-making.

Software Development and DevOps

Agentic coding assistants go beyond autocomplete. They accept a feature request, analyze the existing codebase, write implementation code across multiple files, run tests, interpret failures, and iterate until tests pass. Some systems handle pull request reviews, identifying issues, suggesting fixes, and verifying that corrections resolve the flagged problems.
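The write-test-iterate loop can be sketched with a scripted stand-in for the model. `scripted_generator` and the `title_case` task are hypothetical; the point is that test failures and exceptions are turned into feedback text for the next attempt. Note that `exec` runs arbitrary code, so a real agent would execute candidates in an isolated sandbox rather than in its own process.

```python
from typing import Callable, Optional


def run_tests(func: Callable[[str], str]) -> Optional[str]:
    """Return None if all tests pass, otherwise a failure message the agent can read."""
    cases = {"hello world": "Hello World", "": ""}
    for arg, expected in cases.items():
        got = func(arg)
        if got != expected:
            return f"title_case({arg!r}) returned {got!r}, expected {expected!r}"
    return None


def iterate_until_green(
    generate: Callable[[Optional[str]], str], max_rounds: int = 3
) -> tuple[Optional[int], Optional[str]]:
    """Generate code, run it, and feed failures back until the tests pass."""
    feedback: Optional[str] = None
    for round_no in range(1, max_rounds + 1):
        source = generate(feedback)  # a real model would condition on the last failure
        namespace: dict = {}
        try:
            exec(source, namespace)  # runs arbitrary code; sandbox this in practice
            feedback = run_tests(namespace["title_case"])
        except Exception as exc:  # syntax and runtime errors become feedback too
            feedback = f"{type(exc).__name__}: {exc}"
        if feedback is None:
            return round_no, source
    return None, feedback


def scripted_generator(candidates: list[str]) -> Callable[[Optional[str]], str]:
    """Stand-in for a model: returns the next scripted candidate on each call."""
    it = iter(candidates)
    return lambda feedback: next(it)


CANDIDATES = [
    "def title_case(s): return s.upper()",  # first attempt fails the tests
    "def title_case(s): return ' '.join(w.capitalize() for w in s.split(' '))",
]
print(iterate_until_green(scripted_generator(CANDIDATES)))
```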

In DevOps, agents monitor infrastructure, detect anomalies, diagnose root causes by querying logs and metrics, and execute remediation steps. The value is not just speed; it is the ability to handle incidents at hours when human operators are unavailable.
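A minimal sketch of the detect-diagnose-remediate sequence, assuming a simple z-score test on latency and keyword matching on logs; production systems typically use richer detectors. The runbook entries are hypothetical, and any cause the agent cannot match falls through to paging a human.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], latest: float, threshold: float = 3.0) -> bool:
    """Flag `latest` if it lies more than `threshold` standard deviations from the baseline."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    sigma = stdev(history)
    if sigma == 0:
        return latest != history[0]
    return abs(latest - mean(history)) / sigma > threshold


def diagnose(log_lines: list[str]) -> str:
    """Map log evidence to a known cause; anything unrecognized stays 'unknown'."""
    text = "\n".join(log_lines).lower()
    if "outofmemory" in text:
        return "oom"
    if "connection refused" in text:
        return "dependency_down"
    return "unknown"


# Hypothetical runbook: the only remediations the agent may run unattended.
RUNBOOK = {"oom": "restart_service", "dependency_down": "fail_over_to_replica"}


def handle_incident(latency_history: list[float], latest: float, log_lines: list[str]) -> str:
    if not is_anomalous(latency_history, latest):
        return "no_action"
    # Unknown causes are never auto-remediated; they page a human instead.
    return RUNBOOK.get(diagnose(log_lines), "page_on_call")


baseline_ms = [120, 118, 125, 122, 119, 121]
print(handle_incident(baseline_ms, 480, ["java.lang.OutOfMemoryError: Java heap space"]))
```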

Customer Operations

Agentic systems in customer support resolve issues end-to-end rather than routing tickets. An agent receives a customer complaint, looks up account history, checks order status across systems, applies a resolution (refund, replacement, escalation), and sends a personalized response. The interaction spans multiple systems and decisions, not a single templated reply.

The constraint is trust. Organizations must define which actions an agent can take autonomously and which require human approval. Most production deployments use tiered authority: simple resolutions are automated, complex or high-value cases are escalated.
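Tiered authority can be expressed as an explicit routing rule. The action names, dollar limits, and `customer_tier` field below are hypothetical; each organization would set its own. Anything without a configured limit defaults to human review.

```python
from dataclasses import dataclass


@dataclass
class Resolution:
    action: str         # e.g. "refund" or "replacement"
    amount: float       # monetary value of the resolution
    customer_tier: str  # hypothetical segment, e.g. "standard" or "enterprise"


# Hypothetical auto-approval limits; each organization sets its own.
AUTO_APPROVE_LIMITS = {"refund": 50.0, "replacement": 100.0}


def route(res: Resolution) -> str:
    """Automate simple, low-value resolutions; send everything else to a person."""
    limit = AUTO_APPROVE_LIMITS.get(res.action)
    if limit is None or res.customer_tier == "enterprise":
        return "human_review"
    return "automate" if res.amount <= limit else "human_review"


print(route(Resolution("refund", 20.0, "standard")))   # small refund
print(route(Resolution("refund", 500.0, "standard")))  # high value
```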

Research and Knowledge Work

Research agents handle literature discovery, synthesis, and reporting. Given a research question, an agent searches academic databases, filters results by relevance and recency, extracts key findings, identifies contradictions across sources, and produces a structured summary with citations.
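The filtering step might look like the following, assuming each source already carries a relevance score (for example from embedding similarity) and a publication year. The thresholds are illustrative, not recommendations, and finding extraction and contradiction detection would follow as separate steps.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Source:
    title: str
    year: int
    relevance: float  # hypothetical 0-1 score, e.g. from embedding similarity


def shortlist(
    sources: list[Source],
    min_relevance: float = 0.7,
    max_age_years: int = 5,
    k: int = 10,
    today: Optional[date] = None,
) -> list[Source]:
    """Keep recent, relevant sources, ranked by relevance and then by recency."""
    this_year = (today or date.today()).year
    kept = [
        s for s in sources
        if s.relevance >= min_relevance and this_year - s.year <= max_age_years
    ]
    return sorted(kept, key=lambda s: (-s.relevance, -s.year))[:k]


papers = [
    Source("A", 2023, 0.90),
    Source("B", 2015, 0.95),  # highly relevant but too old
    Source("C", 2022, 0.60),  # recent but below the relevance threshold
    Source("D", 2021, 0.90),
]
print([s.title for s in shortlist(papers, today=date(2024, 1, 1))])
```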

In legal, finance, and consulting, similar agents automate due diligence, regulatory analysis, and competitive intelligence. The tasks share a pattern: gather information from multiple sources, synthesize it against specific criteria, and produce a structured output.

Enterprise Workflow Automation

Traditional automation follows fixed rules. Agentic automation handles variable workflows. An agent processing invoices does not just extract fields from a template; it interprets non-standard formats, flags discrepancies, cross-references purchase orders, and routes exceptions to the appropriate team.
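A sketch of the cross-referencing step, assuming fields have already been extracted from the invoice. The purchase-order store and the 1% tolerance on totals are hypothetical; clean invoices go to payment and anything flagged is routed to a person.

```python
from dataclasses import dataclass


@dataclass
class Invoice:
    po_number: str
    vendor: str
    total: float


# Hypothetical purchase-order records keyed by PO number.
PURCHASE_ORDERS = {"PO-1001": {"vendor": "Acme Supplies", "total": 1200.00}}


def check_invoice(inv: Invoice, tolerance: float = 0.01) -> list[str]:
    """Cross-reference an extracted invoice against its PO and list discrepancies."""
    po = PURCHASE_ORDERS.get(inv.po_number)
    if po is None:
        return [f"no purchase order {inv.po_number}"]
    issues = []
    if inv.vendor != po["vendor"]:
        issues.append(f"vendor mismatch: {inv.vendor!r} vs {po['vendor']!r}")
    # Allow a small relative difference (hypothetical 1%) for rounding or shipping.
    if abs(inv.total - po["total"]) > tolerance * po["total"]:
        issues.append(f"total {inv.total:.2f} differs from PO total {po['total']:.2f}")
    return issues


def route_invoice(inv: Invoice) -> str:
    # Clean invoices go to payment; anything flagged is routed to a person.
    return "accounts_payable_review" if check_invoice(inv) else "approve_for_payment"


print(route_invoice(Invoice("PO-1001", "Acme Supplies", 1205.00)))
print(check_invoice(Invoice("PO-1001", "Acme Supplies", 1500.00)))
```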

Enterprise agents connect across systems that were not designed to work together. They bridge ERP, CRM, email, and document management by acting as an intelligent intermediary that understands context and makes routing decisions.

Limitations and Risks of Agentic AI

Agentic AI demonstrations are impressive. Production deployments are harder. The gap between the two is where most organizations encounter friction.

Error compounding is the primary reliability risk. Each step in an agentic chain introduces a probability of error. In a ten-step task, even a 95% per-step accuracy rate produces a cumulative accuracy below 60%. A small misinterpretation in step three propagates through every subsequent decision. Unlike single-generation tasks where a user can immediately spot and correct an error, multi-step agents may produce plausible-looking outputs built on flawed intermediate reasoning.
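The 60% figure follows from multiplying per-step success rates, which assumes errors at each step are independent. Correlated errors or effective self-correction would change the number, but the sketch shows how quickly the product falls: 0.95 to the tenth power is about 0.599.

```python
def chain_success_rate(per_step_accuracy: float, steps: int) -> float:
    """Probability that every step succeeds, assuming step errors are independent."""
    return per_step_accuracy ** steps


for n in (1, 5, 10, 20):
    print(f"{n:>2} steps: {chain_success_rate(0.95, n):.3f}")
```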

Hallucination risk amplifies with autonomy. When a generative model hallucinates a fact in a chatbot response, a human reads it and can verify it. When an agentic system hallucinates a fact and then acts on it (querying the wrong API endpoint, sending an incorrect email, or making a flawed calculation), the consequences are operational, not just informational.

Safety boundaries require explicit design. Agents that interact with external systems can take irreversible actions: deleting records, sending communications, modifying configurations, or committing code. Without carefully designed permission boundaries, an agent operating with good intentions and bad judgment can cause real damage. Guardrails must define which actions are permitted, which require human approval, and which are prohibited regardless of context.
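A default-deny permission table is one straightforward way to encode the three categories. The action names and the `approved_by` parameter are hypothetical; the design choice worth noting is that any action missing from the table is denied, so a newly added tool cannot run until someone classifies it.

```python
from enum import Enum
from typing import Optional


class Decision(Enum):
    ALLOW = "allow"
    REQUIRE_APPROVAL = "require_approval"
    DENY = "deny"


# Hypothetical policy for an agent with CRM and email access.
POLICY = {
    "read_record": Decision.ALLOW,
    "draft_email": Decision.ALLOW,
    "send_email": Decision.REQUIRE_APPROVAL,
    "update_record": Decision.REQUIRE_APPROVAL,
    "delete_record": Decision.DENY,
}


def authorize(action: str, approved_by: Optional[str] = None) -> bool:
    """Return True only if the action may run now; unlisted actions are denied."""
    decision = POLICY.get(action, Decision.DENY)
    if decision is Decision.REQUIRE_APPROVAL:
        return approved_by is not None
    return decision is Decision.ALLOW


print(authorize("send_email"), authorize("send_email", approved_by="j.doe"))
```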

Observability is immature. Understanding why an agent made a specific decision across a multi-step execution is significantly harder than understanding a single model output. Debugging agentic failures often requires reconstructing an entire chain of reasoning, tool calls, and intermediate outputs. Most current frameworks lack the logging and tracing infrastructure that production systems require.
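At minimum, tracing means recording every model call and tool call with its inputs, outputs, status, and timing under a shared run ID. This is a standard-library sketch, not any particular framework's API; real deployments would usually export such events to a tracing backend.

```python
import json
import time
import uuid
from contextlib import contextmanager


class Tracer:
    """Record each step of one agent run as structured, replayable events."""

    def __init__(self) -> None:
        self.run_id = str(uuid.uuid4())
        self.events: list[dict] = []

    @contextmanager
    def step(self, kind: str, name: str, inputs: dict):
        event = {"run_id": self.run_id, "seq": len(self.events),
                 "kind": kind, "name": name, "inputs": inputs}
        start = time.perf_counter()
        try:
            yield event  # the caller attaches the step's output to the event
            event["status"] = "ok"
        except Exception as exc:
            event["status"] = "error"
            event["error"] = repr(exc)
            raise
        finally:
            event["duration_s"] = round(time.perf_counter() - start, 4)
            self.events.append(event)

    def to_jsonl(self) -> str:
        return "\n".join(json.dumps(e) for e in self.events)


tracer = Tracer()
with tracer.step("tool_call", "get_order_status", {"order_id": "A17"}) as ev:
    ev["output"] = "shipped"  # hypothetical tool result
with tracer.step("model_call", "draft_reply", {"status": "shipped"}) as ev:
    ev["output"] = "Your order has shipped."
print(tracer.to_jsonl())
```

The sequence numbers assume steps run one after another; nested or concurrent steps would need parent IDs.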

Cost scales with complexity. Agentic systems make multiple model calls per task. A single user request might trigger dozens of LLM inferences, tool calls, and evaluation steps. For organizations evaluating agentic AI, the cost-per-task calculation must include the full execution chain, not just a single API call.
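A back-of-the-envelope cost model makes the point concrete. The per-token prices are hypothetical placeholders, and the example assumes each step resends the growing history, so input tokens rise with every call. Under those assumptions a 20-step task costs about 83 times a single call.

```python
from dataclasses import dataclass


@dataclass
class ModelCall:
    input_tokens: int
    output_tokens: int


# Hypothetical prices in USD per million tokens; substitute your provider's rates.
PRICE_PER_M_INPUT = 3.00
PRICE_PER_M_OUTPUT = 15.00


def task_cost(calls: list[ModelCall], tool_costs: float = 0.0) -> float:
    """Cost of one task: every model call in the chain plus any paid tool calls."""
    model_cost = sum(
        c.input_tokens * PRICE_PER_M_INPUT + c.output_tokens * PRICE_PER_M_OUTPUT
        for c in calls
    ) / 1_000_000
    return round(model_cost + tool_costs, 4)


single = [ModelCall(2_000, 500)]
# Assumed 20-step chain where each step resends about 1,500 more tokens of history.
chain = [ModelCall(2_000 + 1_500 * i, 500) for i in range(20)]
print(task_cost(single), task_cost(chain))
```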

These limitations are not arguments against agentic AI. They are engineering constraints that determine where and how it should be deployed. Organizations that treat agentic AI as a mature, drop-in solution will be disappointed. Those that treat it as a powerful but bounded capability, requiring oversight, testing, and incremental trust-building, will extract real value.

How to Evaluate Agentic AI for Your Organization

Not every workflow benefits from agentic AI. Before investing, organizations need a clear evaluation framework that distinguishes genuine opportunities from hype-driven experimentation.

Start with the task, not the technology. Identify workflows that are multi-step, require interaction with multiple systems, and currently depend on human judgment for routing and decision-making. Single-step tasks that a generative model handles well do not need an agentic layer. Adding agent complexity to simple problems increases cost and risk without proportional benefit.

Assess error tolerance. Agentic systems are best suited for tasks where errors are detectable and reversible. A research agent that produces an inaccurate summary can be reviewed before action is taken. An agent that autonomously sends contractual documents has far less room for error. Map your candidate workflows by error impact, and deploy agents first where mistakes are recoverable.

Evaluate your data and system access. Agents need to interact with external tools and data sources. If your organization's systems are fragmented, poorly documented, or lack API access, agent deployment will stall at the integration layer. Technical readiness means not just having the AI capability but having the infrastructure that agents need to act on.

Define human-in-the-loop requirements. Decide in advance which decisions require human approval and which the agent can execute autonomously. This is not a binary choice. Most effective deployments use graduated autonomy: agents handle routine decisions independently and escalate edge cases. The graduation criteria should be explicit and documented.

Evaluate vendors on observability, not just capability. Many agentic AI vendors offer impressive demos. Fewer provide the logging, tracing, and debugging tools needed for production. Ask vendors how you will understand why an agent made a specific decision, how you will audit its actions, and how you will identify when it fails silently. Observability is what separates a prototype from a production system.

Plan for iteration, not immediate deployment at scale. Start with a bounded pilot on a well-understood workflow. Measure results against predefined metrics. Expand scope only after the pilot generates evidence that the agent performs reliably in your specific environment.

Frequently Asked Questions

What is the difference between agentic AI and autonomous AI?

Agentic AI and autonomous AI overlap but are not identical. Agentic AI describes systems that pursue goals through multi-step reasoning and tool use, typically with defined boundaries and human oversight.

Autonomous AI is a broader term that implies a system operates independently without human intervention. Most agentic AI systems today are semi-autonomous: they execute complex tasks independently but operate within permission boundaries and escalate uncertain decisions to humans.

Can agentic AI operate without human supervision?

Technically, yes. Practically, most production deployments include human oversight. The level of supervision depends on the task's error tolerance and consequence severity.

Low-risk, reversible tasks like research synthesis or data formatting can run with minimal oversight. High-stakes tasks like financial transactions or customer communications typically require human approval at key decision points. Full unsupervised operation is possible but not recommended for most enterprise use cases today.

What industries benefit most from agentic AI?

Industries with high volumes of multi-step, knowledge-intensive workflows see the strongest returns. Software development, customer operations, financial services, legal research, and enterprise administration are leading adoption areas.

The common thread is tasks that require gathering information from multiple sources, making decisions based on context, and executing actions across systems. Industries with rigid, predictable workflows may benefit more from traditional automation.

Is agentic AI the same as an AI agent?

An AI agent is a specific implementation of agentic AI. "Agentic AI" describes the category of AI systems that exhibit goal-directed, multi-step autonomous behavior. An "AI agent" is a particular system built with those characteristics.

The relationship is similar to the one between "electric vehicle" (the category) and a specific car model (the implementation). All AI agents are agentic, but agentic AI as a concept also encompasses frameworks, architectures, and design patterns beyond any single agent.
