What Is Retrieval-Augmented Generation?

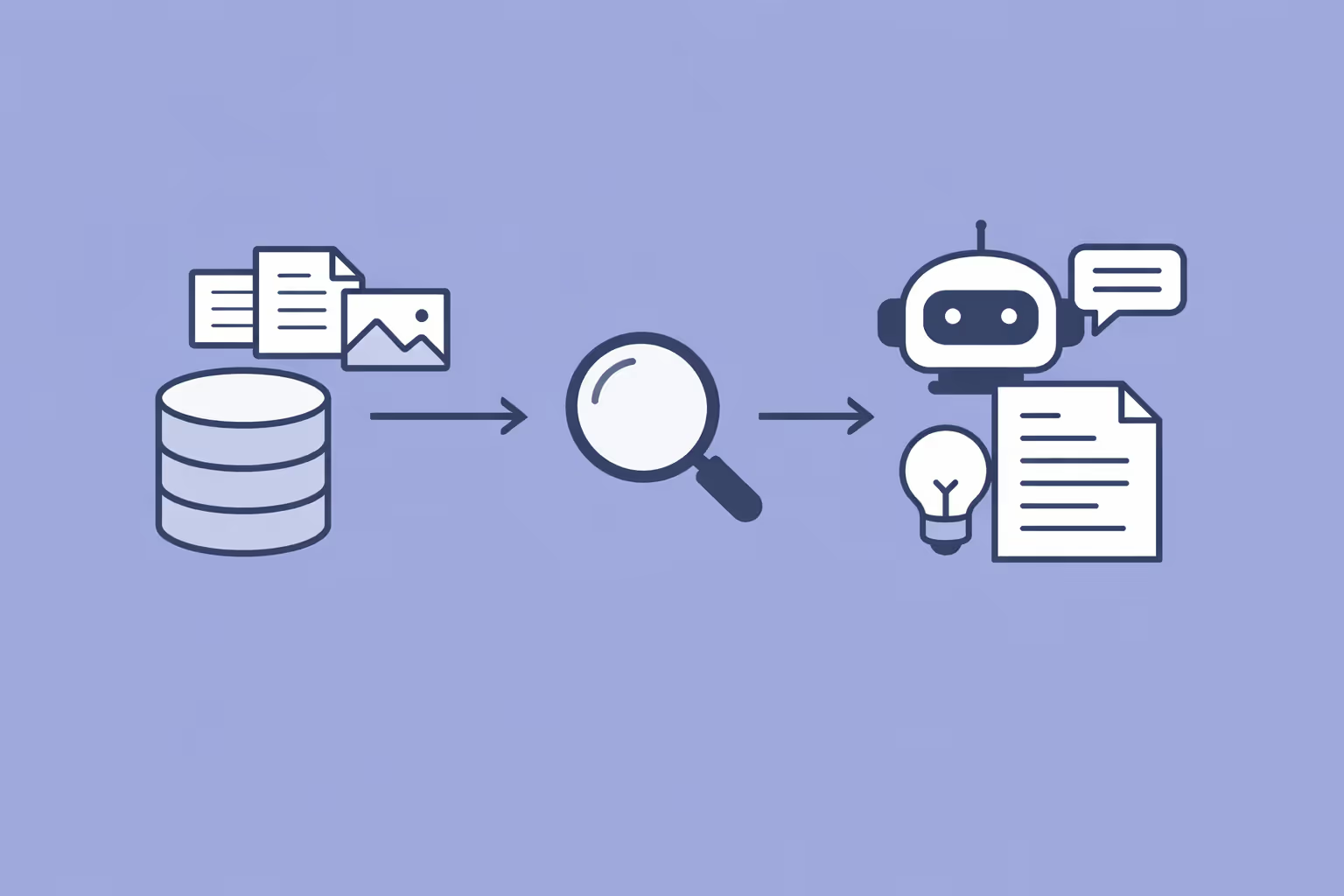

Retrieval-augmented generation (RAG) is an artificial intelligence architecture that enhances the output of a large language model by first retrieving relevant information from external knowledge sources and then using that information to generate a response.

Rather than relying solely on the patterns stored in its parameters during training, a RAG system pulls in up-to-date, domain-specific data at inference time to produce answers that are more accurate, verifiable, and grounded in real sources.

The concept was introduced by researchers at Facebook AI Research (now Meta AI) in 2020. Their paper proposed combining a pre-trained sequence-to-sequence generative AI model with a dense passage retrieval mechanism, allowing the generator to condition its output on documents fetched from a knowledge corpus.

This addressed a core limitation of standard language models: once training ends, their knowledge is frozen, and they cannot incorporate new or specialized information without retraining.

RAG sits at the intersection of two well-established fields within natural language processing. The retrieval component draws on decades of research in information retrieval, the same discipline behind search engines. The generation component leverages the capabilities of transformer model architectures that power modern language models.

By connecting these two systems, RAG creates a pipeline where the model can "look things up" before answering, much like a human consulting reference material before writing.

In practical terms, retrieval-augmented generation has become one of the most widely adopted patterns for deploying large language models in enterprise settings. It allows organizations to ground AI outputs in their own proprietary data, such as policy documents, knowledge bases, product catalogs, and research archives, without the cost and complexity of fine-tuning or retraining a model.

How RAG Works

The Retrieval Step

The retrieval step is responsible for finding the most relevant pieces of information from an external knowledge source. This process begins with converting both the user's query and the documents in the knowledge base into numerical representations called vector embeddings.

These embeddings capture the semantic meaning of text, allowing the system to match queries with documents based on conceptual similarity rather than simple keyword overlap.

When a user submits a query, the system encodes it into an embedding vector using a pre-trained encoder model, often based on architectures like BERT, as in dense retrievers such as DPR (the retriever used in the original RAG paper).

This query vector is then compared against a vector index of pre-processed documents using semantic search techniques. The system returns the top-k most similar documents or passages based on cosine similarity or other distance metrics.
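The comparison described above can be illustrated with a minimal sketch. The three-dimensional vectors and document names below are made up for illustration; real embeddings come from an encoder model and typically have hundreds of dimensions, and a production system would use a vector index rather than a linear scan.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def top_k(query_vec, index, k=2):
    """Return the k (doc_id, score) pairs most similar to the query."""
    scored = [(doc_id, cosine_similarity(query_vec, vec))
              for doc_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]

# Toy "embeddings" (hypothetical values, not produced by a real encoder).
index = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.1, 0.9, 0.1],
    "privacy-notice": [0.0, 0.2, 0.9],
}
query_vec = [0.8, 0.2, 0.1]

results = top_k(query_vec, index, k=2)
for doc_id, score in results:
    print(f"{doc_id}: {score:.3f}")
```

With these toy values, the query vector points mostly in the same direction as the "refund-policy" vector, so that document ranks first.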

The retrieved documents are typically stored in a vector database, a specialized data store optimized for fast nearest-neighbor lookups across high-dimensional embedding spaces. Popular vector databases include Pinecone, Weaviate, Milvus, and Chroma.

The quality of retrieval depends heavily on how the source documents were chunked (split into smaller segments), how the embeddings were generated, and how well the retriever model captures the semantic relationship between queries and relevant passages.

The Generation Step

Once the retriever returns a set of relevant documents, the generation step begins. The retrieved passages are concatenated with the original user query and passed as input context to a large language model. The generator, typically a pre-trained model such as GPT-3 or a similar autoregressive model, then produces a response conditioned on both the query and the retrieved evidence.

This conditioning is what distinguishes RAG from standard language model generation. Instead of relying entirely on internalized knowledge from pre-training, the model synthesizes an answer from the provided context. This significantly reduces hallucination, the tendency of language models to generate plausible but factually incorrect statements. The generator can attend to specific passages, quote relevant details, and construct responses that are directly traceable to source material.

The generation step can employ various prompt engineering techniques to optimize output quality. System prompts can instruct the model to only answer based on the provided context, to cite sources, or to indicate when the retrieved information is insufficient to answer the question.

The End-to-End Pipeline

A complete RAG pipeline integrates several components into a cohesive system. The typical workflow proceeds as follows:

- The user submits a natural language query.

- The query is encoded into a vector embedding by a retriever model.

- The embedding is used to search a vector database for relevant document chunks.

- The top-k retrieved chunks are formatted and prepended to the query as context.

- The combined input is sent to the language model for generation.

- The model produces a response grounded in the retrieved information.

- Optionally, the system returns source citations alongside the generated answer.

Orchestration frameworks like LangChain and LlamaIndex simplify building and managing these pipelines. They provide abstractions for document loading, text splitting, embedding generation, vector store integration, and prompt templating, making it substantially easier to prototype and deploy RAG applications.
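Without any framework, the workflow above can be sketched in plain Python. This is only an illustration under loud assumptions: `embed` is a bag-of-words stand-in for a neural encoder, `generate` is a placeholder for the language model call, and the chunk texts and file names are invented.

```python
import math
import re
from collections import Counter

def embed(text):
    """Stand-in encoder: bag-of-words counts (a real system uses a neural encoder)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def similarity(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query, chunks, k=2):
    """Encode the query and return the k most similar chunks."""
    q = embed(query)
    ranked = sorted(chunks, key=lambda c: similarity(q, embed(c["text"])), reverse=True)
    return ranked[:k]

def build_input(query, retrieved):
    """Prepend the retrieved chunks to the query as context."""
    context = "\n".join(f"[{c['source']}] {c['text']}" for c in retrieved)
    return f"Context:\n{context}\n\nQuestion: {query}"

def generate(model_input):
    """Placeholder for the language model call (hypothetical)."""
    return f"(model response conditioned on {len(model_input)} characters of input)"

def answer(query, chunks, k=2):
    """Run retrieval and generation, returning the answer with its sources."""
    retrieved = retrieve(query, chunks, k)
    response = generate(build_input(query, retrieved))
    return {"answer": response, "sources": [c["source"] for c in retrieved]}

chunks = [
    {"source": "policy.md", "text": "Refunds are issued within 14 days of a return."},
    {"source": "shipping.md", "text": "Standard shipping takes three to five business days."},
    {"source": "faq.md", "text": "Contact support to start a return."},
]
result = answer("How long do refunds take after a return?", chunks)
print(result["sources"])
```

Returning the source labels next to the answer corresponds to the optional citation step in the workflow.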

| Component | Function | Key Detail |
|---|---|---|
| The Retrieval Step | Finds the most relevant pieces of information from an external knowledge source. | Embedding similarity search over a vector database |
| The Generation Step | Produces a response conditioned on the query and the retrieved passages. | GPT-3 or a similar autoregressive model |
| The End-to-End Pipeline | Integrates query encoding, search, context assembly, and generation into one workflow. | Often built with frameworks such as LangChain or LlamaIndex |

Why RAG Matters

Reducing Hallucination

Hallucination is one of the most pressing challenges in deploying generative AI systems. When a language model lacks relevant information or encounters an ambiguous query, it may fabricate details that sound convincing but are entirely wrong. In high-stakes domains like healthcare, legal research, and financial advisory, hallucinated outputs can cause serious harm.

RAG directly addresses this problem by providing the model with verified source material at inference time. When the model has access to authoritative documents, it is far less likely to invent information. Organizations can further constrain the model by instructing it to abstain from answering when retrieved context is insufficient, creating a safety mechanism that pure generation models lack.

Keeping Knowledge Current

Large language models are trained on data collected up to a specific cutoff point. After training, they have no awareness of events, publications, or changes that occurred afterward. Retraining or even fine-tuning a large model to incorporate new information is expensive and time-consuming.

RAG addresses this by decoupling the model's knowledge from its parameters. Once new documents are embedded and indexed in the knowledge base, the RAG system can retrieve them without any modification to the underlying model. This makes retrieval-augmented generation particularly valuable for domains where information changes frequently, such as regulatory compliance, technical documentation, product specifications, and news analysis.

Cost Efficiency Over Fine-Tuning

Fine-tuning a large language model on domain-specific data requires curating training datasets, running computationally expensive training jobs, and managing model versioning and deployment. For many knowledge-intensive use cases, RAG can achieve comparable or better results by indexing the relevant documents and retrieving them at query time.

This makes RAG an attractive option for organizations operating within tight LLMOps budgets. The infrastructure cost of maintaining a vector database and running a retriever is a fraction of the cost of repeated fine-tuning cycles, especially when the knowledge base needs to be updated frequently.

Transparency and Traceability

Because RAG systems retrieve specific source documents, they can surface citations alongside their generated answers. This traceability is critical for building user trust and enabling human oversight. A user can verify the model's claims by reviewing the original source, something that is not possible with a standard language model that generates from opaque internal parameters.

RAG Use Cases

Retrieval-augmented generation has found applications across virtually every industry that relies on large bodies of text-based knowledge.

Enterprise Knowledge Management

Large organizations maintain vast repositories of internal documentation, including policy manuals, process guides, engineering specifications, and HR handbooks. RAG-powered chatbots and search tools allow employees to query these repositories in natural language and receive synthesized answers with source references, replacing the need to manually search through hundreds of documents.

Customer Support Automation

RAG enables conversational AI systems that answer customer questions using an organization's official support documentation, FAQs, and product knowledge bases. Unlike static FAQ bots, RAG systems can compose nuanced answers that combine information from multiple sources, handle follow-up questions, and escalate gracefully when the retrieved information does not cover the query.

Legal and Compliance Research

Legal professionals use RAG to search across case law, statutes, regulations, and contract archives. The retrieval component identifies relevant precedents and clauses, while the generation component summarizes findings and highlights key implications. This dramatically reduces the time required for legal research while maintaining the precision that the profession demands.

Healthcare and Biomedical Research

Medical practitioners and researchers use RAG systems to query clinical guidelines, drug databases, and peer-reviewed literature. The approach is particularly useful when clinicians need quick, evidence-based answers during patient care. By grounding responses in published medical literature, RAG reduces the risk of clinically dangerous hallucinations.

Education and E-Learning

RAG transforms how learners interact with educational content. Instead of passively reading materials, students can ask questions and receive answers grounded in their course readings, textbooks, and supplementary resources. Instructors can build RAG-powered tutoring systems that draw on curated curricula, ensuring that student-facing AI stays aligned with learning objectives and authoritative sources.

Technical Documentation and Developer Tools

Software companies deploy RAG to power documentation assistants that help developers navigate APIs, code libraries, and configuration guides. By indexing the full documentation corpus, these tools provide contextually relevant answers to specific technical questions, often outperforming traditional documentation search.

Challenges and Limitations

Retrieval Quality

The effectiveness of any RAG system is bounded by the quality of its retrieval component. If the retriever fails to surface relevant documents, the generator has no reliable context to work with and may fall back on its parametric knowledge, reintroducing the hallucination problem.

Retrieval quality depends on multiple factors: the choice of embedding model, the chunking strategy used to split documents, the metadata and filtering capabilities of the vector store, and the diversity and coverage of the knowledge base.

Improving retrieval often requires experimentation with chunk sizes, overlap strategies, re-ranking models, and hybrid search approaches that combine dense vector embeddings with traditional keyword-based retrieval (BM25). This tuning process can be time-intensive and domain-specific.
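One possible way to merge a dense ranking with a keyword ranking is reciprocal rank fusion. The text above does not name a specific fusion method, so this choice is an assumption made for illustration, and the document ids are hypothetical.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of doc ids into a single ranking.

    Each document scores sum(1 / (k + rank)) over the lists it appears in,
    with rank starting at 1. k=60 is a commonly used default.
    """
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)

dense_ranking = ["doc_a", "doc_b", "doc_c"]    # hypothetical vector-search results
keyword_ranking = ["doc_c", "doc_a", "doc_d"]  # hypothetical BM25 results

fused = reciprocal_rank_fusion([dense_ranking, keyword_ranking])
print(fused)
```

Documents that appear near the top of both lists rise in the fused ranking, while documents found by only one retriever are still kept as candidates.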

Context Window Constraints

Large language models have a fixed context window, the maximum number of tokens they can process in a single inference call. When the combined length of the prompt and the retrieved documents exceeds this limit, the system must either truncate the context or implement strategies to prioritize the most relevant passages. Longer context windows are becoming available in newer models, but they come with increased computational cost and latency.
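A simple prioritization strategy is to keep chunks in rank order until a token budget is exhausted. The sketch below assumes the chunks arrive sorted best-first and approximates token counts with whitespace-separated words; a real system would count tokens with the tokenizer of the target model.

```python
def count_tokens(text):
    """Rough proxy for token count: whitespace-separated words."""
    return len(text.split())

def fit_to_budget(ranked_chunks, max_tokens):
    """Keep chunks in rank order, skipping any that would exceed the budget."""
    selected, used = [], 0
    for chunk in ranked_chunks:
        cost = count_tokens(chunk)
        if used + cost <= max_tokens:
            selected.append(chunk)
            used += cost
    return selected, used

ranked_chunks = [
    "alpha beta gamma delta",                 # 4 words, best match
    "one two three four five six",            # 6 words
    "short note",                             # 2 words
]
selected, used = fit_to_budget(ranked_chunks, max_tokens=6)
print(selected, used)
```

Here the second chunk is skipped because it would exceed the budget, and the smaller third chunk fills the remaining space.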

Research has also shown that language models struggle with the "lost in the middle" problem, where information placed in the center of a long context is attended to less than information at the beginning or end. Thoughtful context assembly and re-ranking strategies are essential to mitigate this effect.
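One context assembly heuristic that follows from this observation is to place the highest-ranked chunks at the two ends of the context and the weakest ones in the middle. The function below is a sketch of that idea, assuming the input is already sorted best-first; it is not a prescribed algorithm.

```python
def reorder_for_long_context(ranked_chunks):
    """Place the highest-ranked chunks at the two ends of the context.

    Input is ordered best-first. Chunks are dealt alternately to the front
    and the back, so the lowest-ranked chunks end up in the middle.
    """
    front, back = [], []
    for i, chunk in enumerate(ranked_chunks):
        if i % 2 == 0:
            front.append(chunk)
        else:
            back.append(chunk)
    return front + back[::-1]

ranked = ["rank1", "rank2", "rank3", "rank4", "rank5"]
ordered = reorder_for_long_context(ranked)
print(ordered)
```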

Data Preparation and Maintenance

Building a high-quality RAG knowledge base requires significant effort in document ingestion, cleaning, chunking, and embedding. Source documents may contain tables, images, headers, and nested structures that do not translate cleanly into flat text chunks. Keeping the knowledge base up to date requires automated pipelines for re-indexing when documents change.

Organizations also need to consider access control and data governance. Not all retrieved documents should be accessible to all users. Implementing row-level or document-level permissions within a RAG pipeline adds architectural complexity.
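Document-level permissions can be sketched as a filter applied to retrieved chunks before they reach the prompt. The `allowed_groups` metadata field and the group names are hypothetical; many vector stores can apply an equivalent metadata filter during the search itself, which is usually preferable to filtering afterwards.

```python
def filter_by_permission(chunks, user_groups):
    """Drop any chunk whose allowed groups do not overlap the user's groups."""
    user_groups = set(user_groups)
    return [c for c in chunks if user_groups & set(c["allowed_groups"])]

retrieved = [
    {"text": "Salary bands for 2024.", "allowed_groups": ["hr"]},
    {"text": "Office opening hours.", "allowed_groups": ["hr", "all-staff"]},
]
visible = filter_by_permission(retrieved, user_groups=["all-staff"])
print([c["text"] for c in visible])
```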

Latency and Cost

RAG introduces additional latency compared to direct generation because the system must execute a retrieval query before generating a response. For real-time applications, this added step can impact user experience. Optimizing latency involves caching frequent queries, using approximate nearest-neighbor algorithms, and carefully managing the trade-off between retrieval breadth and response time.
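Caching frequent queries can be sketched with the standard library's `functools.lru_cache`. Here `slow_vector_search` is a hypothetical stand-in for the real retrieval call, and queries are normalized so that trivial variations in case and spacing share a cache entry.

```python
from functools import lru_cache

calls = {"count": 0}  # counts how often the expensive search actually runs

def slow_vector_search(query):
    """Stand-in for an expensive retrieval call (hypothetical)."""
    calls["count"] += 1
    return (f"chunk for: {query}",)  # tuple, so cached results are immutable

def normalize(query):
    """Lowercase and collapse whitespace so equivalent queries match."""
    return " ".join(query.lower().split())

@lru_cache(maxsize=1024)
def _cached_search(normalized_query):
    return slow_vector_search(normalized_query)

def cached_retrieve(query):
    return _cached_search(normalize(query))

first = cached_retrieve("Refund policy")
second = cached_retrieve("  refund   POLICY ")
print(calls["count"])
```

The second call is served from the cache, so the underlying search runs only once. A cache like this must be invalidated when the index changes, or it will keep returning stale chunks.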

The cost of maintaining vector databases, running embedding models, and executing retrieval queries at scale can also become significant, particularly for applications with high query volumes.

Security and Data Leakage

When a RAG system indexes sensitive or confidential data, there is a risk that the language model may surface this information to unauthorized users. Prompt injection attacks, where a malicious query manipulates the model into revealing indexed content, represent an additional security concern. Robust access controls, input validation, and output filtering are necessary safeguards.

How to Implement RAG

Step 1: Define the Knowledge Base

Start by identifying the corpus of documents the system should have access to. This might include internal wikis, PDF manuals, database exports, web pages, or structured datasets. Assess the volume, format, and update frequency of these documents to inform the choice of ingestion pipeline and vector store.

Step 2: Choose an Embedding Model

Select an embedding model that converts text into vector representations. Models like OpenAI's text-embedding-ada-002, Cohere's embed models, or open-source options like Sentence-BERT offer different trade-offs between accuracy, speed, and cost. The embedding model should be well-suited to the domain and language of the knowledge base.

Step 3: Chunk and Index Documents

Split documents into smaller, semantically meaningful chunks. Common strategies include fixed-size chunking (e.g., 500 tokens with 50-token overlap), sentence-based splitting, or recursive splitting that respects document structure such as headings and paragraphs. Each chunk is then embedded and stored in a vector database alongside its metadata, such as source file, section title, and timestamp.
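Fixed-size chunking with overlap can be sketched as a sliding window over a token list. In this illustration, words stand in for tokens, and the file name and metadata fields are hypothetical.

```python
def chunk_tokens(tokens, chunk_size=500, overlap=50):
    """Split a token list into windows of chunk_size that share `overlap` tokens."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + chunk_size])
        if start + chunk_size >= len(tokens):
            break  # the last window already reaches the end of the document
    return chunks

words = ("word " * 1200).split()  # a 1,200-"token" document
chunks = chunk_tokens(words, chunk_size=500, overlap=50)

# Each chunk is stored with metadata so answers can be traced to a source.
records = [
    {"source": "manual.pdf", "chunk_index": i, "text": " ".join(chunk)}
    for i, chunk in enumerate(chunks)
]
print([len(c) for c in chunks])
```

The overlap means that a sentence cut at a chunk boundary still appears whole in at least one chunk, at the cost of some duplicated storage.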

Step 4: Build the Retrieval Pipeline

Configure the retrieval mechanism to accept a user query, encode it, and return the top-k most relevant chunks from the vector store. Consider implementing a re-ranking step that uses a cross-encoder model to improve the precision of results beyond what the initial embedding similarity provides.
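The two-stage pattern can be sketched as follows. The scoring function here is a simple term-overlap stand-in, not a real cross-encoder; in practice `score_fn` would call a model that scores the query and passage together. The passages are invented.

```python
import re

def overlap_score(query, passage):
    """Stand-in for a cross-encoder: fraction of query terms found in the passage."""
    q_terms = set(re.findall(r"[a-z0-9]+", query.lower()))
    p_terms = set(re.findall(r"[a-z0-9]+", passage.lower()))
    return len(q_terms & p_terms) / len(q_terms) if q_terms else 0.0

def rerank(query, candidates, score_fn, top_n=2):
    """Re-score first-stage candidates against the query and keep the best top_n."""
    scored = [(score_fn(query, passage), passage) for passage in candidates]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [passage for _, passage in scored[:top_n]]

# Candidates in the order returned by a hypothetical first-stage vector search.
candidates = [
    "Passwords must be rotated every 90 days.",
    "To reset the admin password, open the console settings.",
    "Admin accounts are created by the IT team.",
]
best = rerank("reset admin password", candidates, overlap_score, top_n=2)
print(best)
```

Because re-ranking scores only a small candidate set, it can afford a slower and more precise model than the first-stage search.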

Step 5: Design the Prompt Template

Craft a prompt template that combines the retrieved context with the user query in a format the language model can process effectively. A well-designed template instructs the model to base its answer on the provided context, cite sources when possible, and indicate uncertainty when the context is insufficient. Prompt engineering plays a critical role in the quality of RAG outputs.
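A template along these lines might look like the sketch below. The exact wording of the instructions, the fallback sentence, and the source labels are illustrative assumptions and would need tuning for a particular model.

```python
PROMPT_TEMPLATE = """Answer the question using only the context below.
Cite the source label in square brackets for each claim.
If the context does not contain the answer, reply: "I don't have enough information."

Context:
{context}

Question: {question}
Answer:"""

def build_prompt(question, chunks):
    """Fill the template with labeled context chunks and the user question."""
    context = "\n".join(f"[{c['source']}] {c['text']}" for c in chunks)
    return PROMPT_TEMPLATE.format(context=context, question=question)

prompt = build_prompt(
    "How long do refunds take?",
    [{"source": "policy.md", "text": "Refunds are issued within 14 days."}],
)
print(prompt)
```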

Step 6: Select a Generator Model

Choose a language model for the generation step. Options range from commercial APIs offered by OpenAI and Anthropic to open-source models that can be self-hosted. The choice depends on requirements for accuracy, latency, data privacy, and budget.

Step 7: Evaluate and Iterate

Measure the performance of the RAG system using both retrieval metrics (recall, precision, mean reciprocal rank) and generation metrics (factual accuracy, relevance, faithfulness to retrieved context). Tools like RAGAS and TruLens provide frameworks for automated evaluation. Use the results to iterate on chunking strategies, retrieval parameters, prompt templates, and model selection.
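The retrieval metrics mentioned above are straightforward to compute from a labeled test set. The sketch below assumes each query comes with a set of known relevant document ids; the ids shown are made up.

```python
def precision_at_k(retrieved, relevant, k):
    """Fraction of the top-k retrieved documents that are relevant."""
    if k <= 0:
        return 0.0
    return sum(1 for d in retrieved[:k] if d in relevant) / k

def recall_at_k(retrieved, relevant, k):
    """Fraction of all relevant documents that appear in the top k."""
    if not relevant:
        return 0.0
    return sum(1 for d in retrieved[:k] if d in relevant) / len(relevant)

def mean_reciprocal_rank(runs):
    """Average of 1/rank of the first relevant document, over all queries.

    runs: list of (retrieved_ids, relevant_id_set) pairs, one per query.
    A query with no relevant document retrieved contributes 0.
    """
    total = 0.0
    for retrieved, relevant in runs:
        for rank, doc_id in enumerate(retrieved, start=1):
            if doc_id in relevant:
                total += 1.0 / rank
                break
    return total / len(runs) if runs else 0.0

runs = [
    (["d3", "d1", "d7"], {"d1", "d2"}),  # first relevant hit at rank 2
    (["d2", "d9"], {"d2"}),              # first relevant hit at rank 1
]
p = precision_at_k(runs[0][0], runs[0][1], k=3)
r = recall_at_k(runs[0][0], runs[0][1], k=3)
mrr = mean_reciprocal_rank(runs)
print(p, r, mrr)
```

Generation metrics such as faithfulness are harder to compute mechanically and usually rely on human review or model-based judges.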

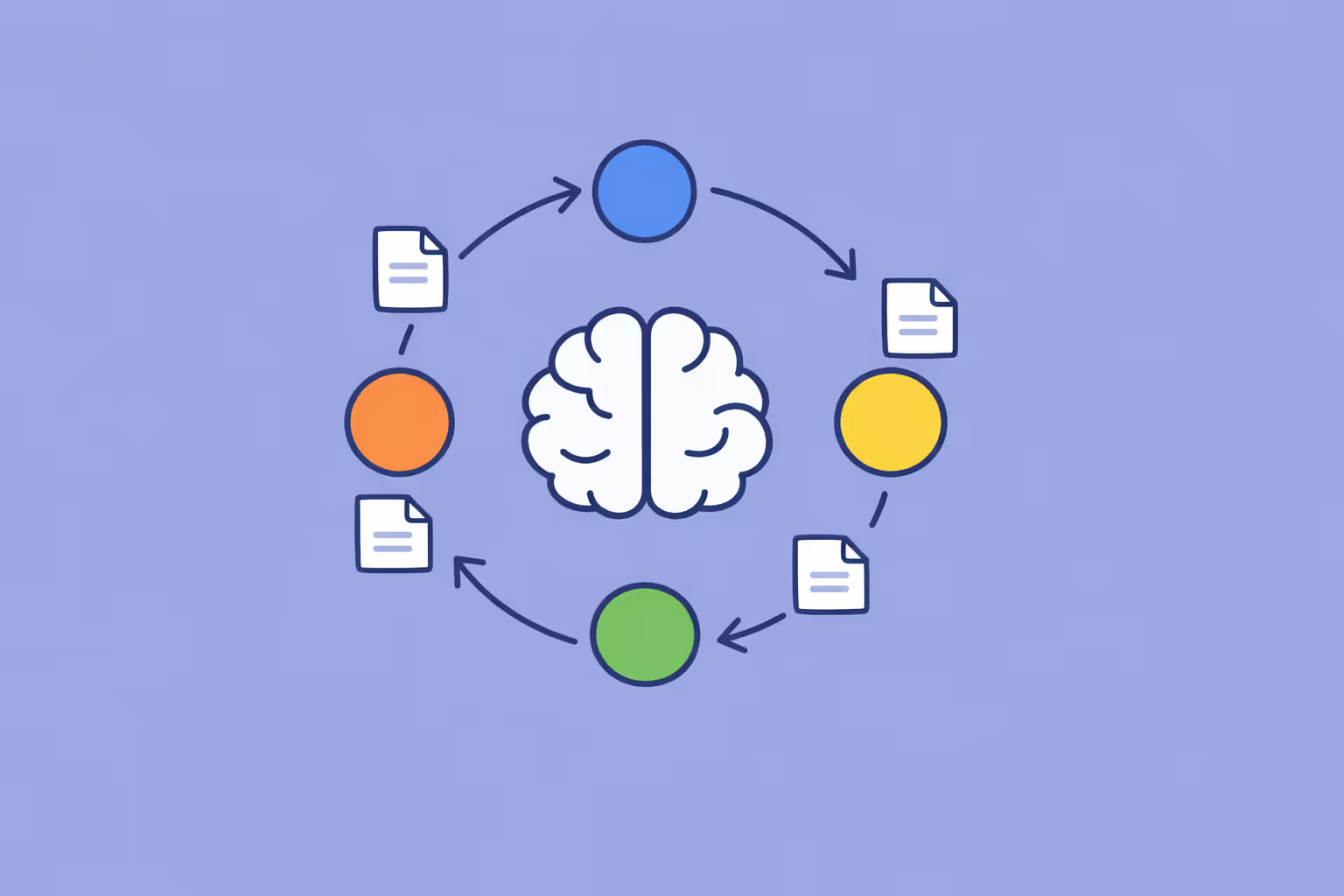

Advanced Techniques

As RAG systems mature, several advanced patterns can improve performance:

- Hybrid search combining dense vector retrieval with sparse keyword matching (BM25) for better coverage.

- Query transformation techniques that rephrase or decompose complex queries before retrieval.

- Agentic RAG, where an AI agent decides when to retrieve, what to retrieve, and how many retrieval rounds to perform.

- Integration with knowledge graph structures that provide relational context beyond what flat document retrieval offers.

- Multi-step retrieval chains that use the output of one retrieval round to inform a more targeted second round.

- Leveraging deep learning techniques for training custom retriever and re-ranker models tailored to specific domains.

FAQ

How is RAG different from fine-tuning?

Fine-tuning modifies the internal weights of a language model by training it on domain-specific data. RAG leaves the model's weights unchanged and instead provides relevant context at inference time through retrieval. Fine-tuning teaches the model new patterns and styles, while RAG gives it access to specific facts and documents.

Many production systems combine both approaches, using fine-tuning to adjust the model's tone and reasoning style, and RAG to supply it with current, verifiable information.

Does RAG completely eliminate hallucination?

No. RAG significantly reduces hallucination by grounding the model's output in retrieved evidence, but it does not eliminate the problem entirely. The model may still hallucinate if the retrieved documents are irrelevant, if the context window is overloaded, or if the prompt does not sufficiently constrain the model to use only the provided context. Ongoing evaluation and guardrails are essential.

What types of data can RAG work with?

RAG can work with any data that can be converted into text and embedded as vectors. This includes PDFs, web pages, database records, emails, chat logs, code repositories, and structured data exported as text. Multimodal RAG systems are also emerging that support image and table retrieval, though text-based retrieval remains the most mature implementation.

How does RAG relate to language modeling?

Language modeling is the foundation on which the generation component of RAG is built. A language model predicts the next token in a sequence based on the preceding context. In a RAG system, the context includes both the user's query and the retrieved documents, which means the language model's predictions are informed by external evidence rather than relying solely on patterns learned during pre-training.

Can I use RAG with open-source models?

Yes. RAG architectures are model-agnostic. The retrieval component is entirely independent of the generator, and many open-source language models (such as Llama, Mistral, and Falcon) work well as the generation backbone. Open-source orchestration frameworks like LangChain and LlamaIndex support both proprietary and open-source models.

What is the role of machine learning in RAG?

Machine learning underpins both components of a RAG system. The retriever uses learned embeddings to encode queries and documents into a shared vector space. The generator uses a neural network trained with language modeling objectives to produce coherent text.

Both components benefit from advances in deep learning research, particularly in transformer architectures and representation learning.

How does ChatGPT Enterprise use RAG?

ChatGPT Enterprise and similar products incorporate RAG-like functionality through features that allow organizations to upload their own documents and have the model answer questions grounded in that content. This gives enterprise users the ability to leverage powerful language models while keeping responses anchored in their proprietary data, combining the fluency of generative AI with the accuracy of document-based retrieval.
