What Is AI Watermarking?

AI watermarking is the process of embedding a detectable signal into AI-generated content (text, images, audio, or video) so that the content can later be identified as machine-produced. The signal is designed to be imperceptible to humans but recoverable by detection tools.

The concept borrows from traditional digital watermarking, where invisible markers are embedded in media files to prove ownership or track distribution. AI watermarking applies this principle differently. Rather than marking content after creation, it typically introduces the watermark during the generation process itself, building it into how the AI model selects words, arranges pixels, or structures audio waveforms.

The purpose is provenance, not restriction. AI watermarking does not prevent content from being created, shared, or used. It provides a mechanism for verifying whether a given piece of content was generated by a specific AI system. This verification supports content authenticity efforts, misinformation detection, and regulatory compliance.

AI watermarking is distinct from AI detection tools that analyze content after the fact to estimate whether it was machine-generated. Detection tools rely on incidental statistical patterns in the output. Watermarking embeds a deliberate, known signal that can be checked with high, quantifiable confidence, assuming the watermark remains intact.

How AI Watermarking Works

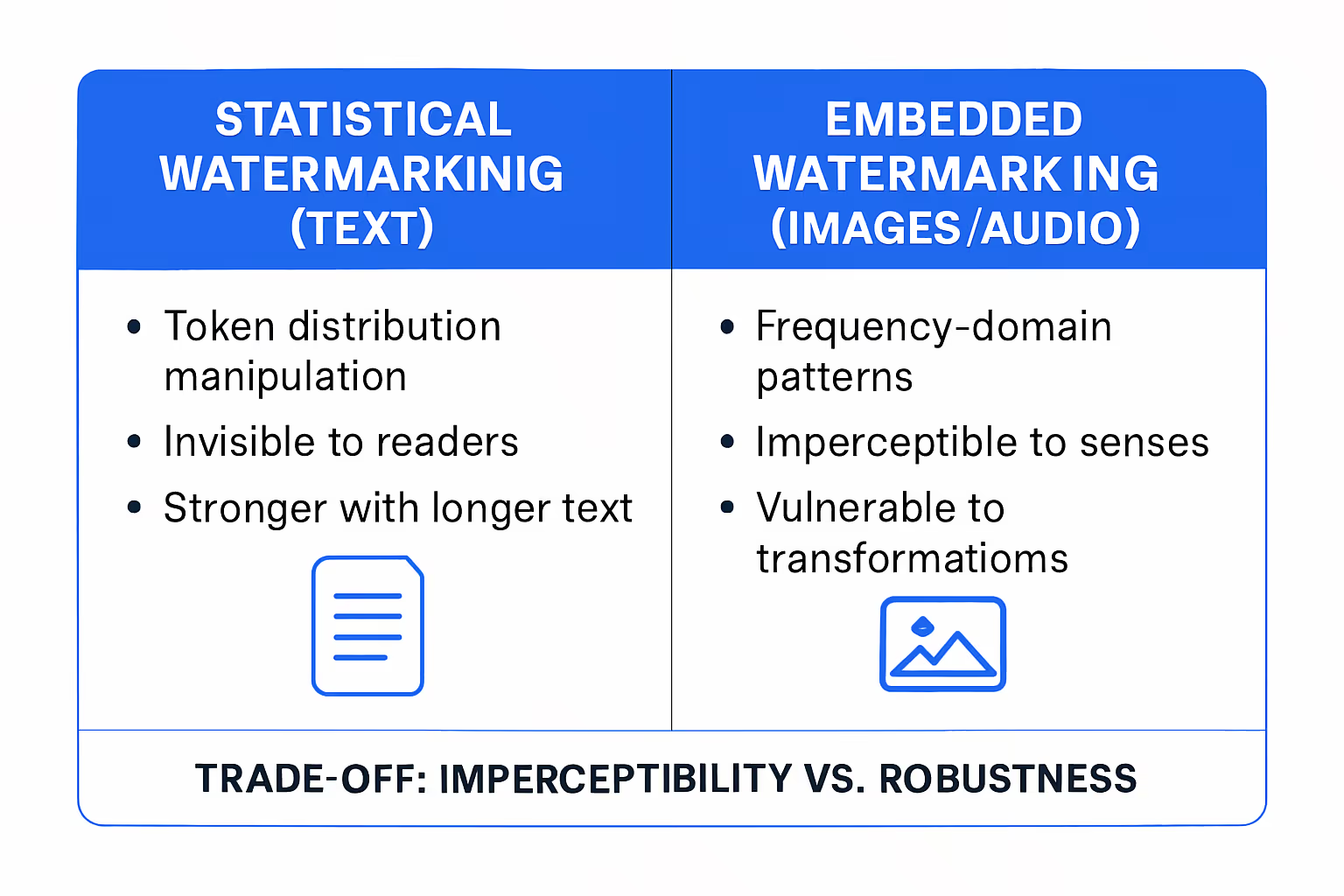

AI watermarking takes different forms depending on the content type. Text, images, and audio each present distinct technical challenges. Two broad approaches dominate current implementations.

Statistical Watermarking for Text

Large language models generate text by predicting the next token from a probability distribution. Statistical watermarking manipulates this distribution in a controlled way. During generation, the model subtly favors certain token choices over statistically equivalent alternatives, creating a pattern that is invisible to human readers but detectable by a verification algorithm that knows the watermarking key.

For example, when multiple words are equally probable in a given context, the watermarked model consistently selects from a designated subset. The resulting text reads naturally, but the pattern of token selections carries a statistical signature. A detector with the correct key can analyze a passage and determine, with quantifiable confidence, whether the watermark is present.
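The idea can be sketched with a toy generator. This is a minimal illustration, not any provider's actual scheme: the vocabulary, the four equally probable candidates per step, the key string, and the helper names `is_green`, `generate`, and `z_score` are all assumptions made up for the example. A keyed hash splits the vocabulary into a designated ("green") subset that depends on the previous token, the sampler prefers green candidates, and the detector counts how many tokens fall in that subset.

```python
import hashlib
import math
import random
from typing import List, Optional

VOCAB = [f"w{i}" for i in range(200)]  # toy vocabulary standing in for model tokens
GAMMA = 0.5  # fraction of the vocabulary designated "green" at each step


def is_green(key: str, prev_token: str, token: str) -> bool:
    """Keyed pseudo-random partition: is `token` green, given the previous token?"""
    digest = hashlib.sha256(f"{key}|{prev_token}|{token}".encode()).digest()
    return digest[0] / 256 < GAMMA


def generate(key: Optional[str], length: int, seed: int = 0) -> List[str]:
    """Toy 'model': each step offers 4 candidates treated as equally probable.

    With a key, the sampler picks a green candidate whenever one exists.
    Without a key, it picks uniformly, so no watermark is embedded.
    """
    rng = random.Random(seed)
    tokens = ["<s>"]
    for _ in range(length):
        candidates = rng.sample(VOCAB, 4)
        if key is not None:
            green = [t for t in candidates if is_green(key, tokens[-1], t)]
            candidates = green or candidates  # fall back if no green candidate
        tokens.append(rng.choice(candidates))
    return tokens[1:]


def z_score(key: str, tokens: List[str]) -> float:
    """Standard deviations by which the green-token count exceeds chance."""
    prev = ["<s>"] + tokens[:-1]
    hits = sum(is_green(key, p, t) for p, t in zip(prev, tokens))
    n = len(tokens)
    return (hits - GAMMA * n) / math.sqrt(n * GAMMA * (1 - GAMMA))


marked = generate("secret", 200)
plain = generate(None, 200)
print("watermarked, correct key:", round(z_score("secret", marked), 1))
print("unwatermarked text:      ", round(z_score("secret", plain), 1))
print("watermarked, wrong key:  ", round(z_score("other-key", marked), 1))
```

Only the detector holding the correct key sees a large score. Unwatermarked text, or watermarked text checked with the wrong key, should score close to zero.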

The strength of the signal depends on text length. Short passages carry weaker watermarks because there are fewer token decisions to embed the pattern. Longer passages allow the statistical signal to accumulate, increasing detection reliability.
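One common way to quantify this, assuming a detector that counts how many of the T tokens in a passage fall in the designated subset, is a z-score. The symbols here are introduced for illustration and do not come from a specific system: G is the number of tokens found in the subset, γ is the fraction expected by chance, and p = G/T is the observed fraction.

```latex
z = \frac{G - \gamma T}{\sqrt{\gamma (1 - \gamma)\, T}}
  = \frac{p - \gamma}{\sqrt{\gamma (1 - \gamma)}} \, \sqrt{T},
\qquad p = \frac{G}{T}
```

For a fixed observed fraction p, the score grows with the square root of the passage length. With γ = 0.5 and p = 0.7, for instance, z = 0.4√T, which gives roughly 2.8 for a 50-token passage and 8.0 for a 400-token passage.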

Embedded Watermarking for Images and Audio

Image and audio watermarking operates at the pixel or waveform level. During generation, the AI model embeds a pattern into the output that is imperceptible to human senses but extractable by a detection algorithm.

For images, this often involves modifying values in the frequency domain rather than individual pixels, a technique designed to survive common transformations like resizing, compression, and minor cropping. For audio, watermarks can be embedded in spectral components that are inaudible to listeners but recoverable through signal processing.
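A classical frequency-domain scheme can be sketched on a single one-dimensional frame, which stands in for a row of pixels or a short audio window. This is a simplified spread-spectrum illustration, not how any particular AI provider embeds its mark. The frame length `N`, the coefficient range `BAND`, the amplitude `STRENGTH`, and the key are assumptions chosen for the example. A keyed pattern of +1 and -1 values is added to mid-frequency DCT coefficients, and detection correlates those coefficients with the same pattern.

```python
import math
import random

N = 64                # samples per frame (a pixel row or an audio window)
BAND = range(8, 40)   # mid-frequency DCT coefficients that carry the mark
STRENGTH = 2.0        # embedding amplitude: higher is more robust, more perceptible


def _scale(k, n):
    return math.sqrt(1 / n) if k == 0 else math.sqrt(2 / n)


def dct(x):
    """Orthonormal DCT-II of a sequence."""
    n = len(x)
    return [_scale(k, n) * sum(x[i] * math.cos(math.pi * (i + 0.5) * k / n)
                               for i in range(n)) for k in range(n)]


def idct(c):
    """Inverse of dct()."""
    n = len(c)
    return [sum(_scale(k, n) * c[k] * math.cos(math.pi * (i + 0.5) * k / n)
                for k in range(n)) for i in range(n)]


def pattern(key):
    """Keyed pseudo-random sequence of +1/-1 values, one per band coefficient."""
    rng = random.Random(key)
    return [rng.choice((-1.0, 1.0)) for _ in BAND]


def embed(frame, key):
    coeffs = dct(frame)
    for k, bit in zip(BAND, pattern(key)):
        coeffs[k] += STRENGTH * bit
    return idct(coeffs)


def detect(frame, key):
    """Mean correlation of band coefficients with the keyed pattern.

    Near STRENGTH when the mark is present, near 0 when it is absent.
    """
    coeffs = dct(frame)
    return sum(coeffs[k] * bit for k, bit in zip(BAND, pattern(key))) / len(BAND)


rng = random.Random(0)
# Smooth host signal on a 0-255 scale with a little texture noise.
host = [128 + 40 * math.cos(math.pi * (i + 0.5) * 2 / N) + rng.gauss(0, 1)
        for i in range(N)]
marked = embed(host, "secret")
noisy = [v + rng.gauss(0, 1) for v in marked]  # stand-in for mild compression noise

print("unmarked score:", round(detect(host, "secret"), 2))
print("marked score:  ", round(detect(marked, "secret"), 2))
print("after noise:   ", round(detect(noisy, "secret"), 2))
```

Raising `STRENGTH` makes the mark easier to recover after distortion but changes each sample more, which is the imperceptibility and robustness trade-off in miniature.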

The challenge for visual and audio watermarks is robustness. Any transformation that significantly alters the content, such as screenshots of images, re-recording of audio, or heavy editing, can degrade or destroy the watermark. The trade-off between imperceptibility and robustness is a central design tension in all watermarking systems.

Why AI Watermarking Matters

AI watermarking addresses a growing structural problem: as AI-generated content becomes indistinguishable from human-created content, the ability to verify origin becomes critical for trust, safety, and accountability.

Three stakeholder needs drive the importance of watermarking.

Content Authenticity and Provenance

Journalists, publishers, and platforms need mechanisms to verify content origin. When AI-generated text, images, or audio can be passed off as human-created, the integrity of information ecosystems degrades. Watermarking provides a technical layer that supports provenance verification: if a piece of content carries a valid watermark, its AI origin can be confirmed.

This matters for newsrooms evaluating submitted content, platforms moderating uploads, and organizations verifying the authenticity of documents or media they receive. Provenance is not about labeling AI content as inferior. It is about ensuring that consumers of information can make informed judgments about what they are reading, viewing, or hearing.

Misinformation and Deepfake Defense

AI-generated deepfakes (synthetic media that fabricates events, statements, or identities) present a direct threat to public discourse and individual reputation. Watermarking does not prevent deepfake creation, but it provides a detection layer. If major AI providers watermark their outputs, synthetic media that carries a watermark can be flagged for review.

The limitation is clear: watermarking only works for content generated by systems that implement it. Content created by open-source models or systems that deliberately omit watermarks will not carry the signal. Watermarking is a partial defense, not a complete solution.

Regulatory and Policy Compliance

Regulatory frameworks are increasingly requiring transparency about AI-generated content. The EU AI Act includes provisions for labeling AI outputs. Executive actions in the United States have called on AI companies to develop watermarking capabilities. China has implemented requirements for AI-generated content identification.

For organizations operating across jurisdictions, watermarking provides a technical mechanism to comply with emerging labeling requirements. The regulatory landscape is still evolving, but the direction is consistent: provenance transparency is becoming a compliance expectation, not a voluntary practice.

Practical Limits of AI Watermarking

Watermarking is a useful tool, not a solved problem. Several practical limitations constrain its effectiveness in real-world conditions.

Robustness Against Removal

Text watermarks are vulnerable to paraphrasing. If a user takes watermarked text and rewrites it, substituting synonyms, restructuring sentences, or translating to another language and back, the statistical pattern degrades. Current research shows that aggressive paraphrasing can reduce watermark detectability to near-random levels.
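A rough back-of-the-envelope model shows why rewriting erodes the signal. It assumes, for illustration only, a detector that counts tokens falling in a keyed subset, and that rewritten tokens land in that subset purely by chance. The function name `expected_z` and the example numbers (300 tokens, an 80% hit rate before editing) are hypothetical.

```python
import math


def expected_z(n_tokens, green_rate, replaced_fraction, gamma=0.5):
    """Expected detector z-score after a fraction of tokens is rewritten.

    green_rate: share of original tokens in the keyed subset before editing.
    gamma: share expected by chance, which rewritten tokens are assumed to follow.
    """
    effective = (1 - replaced_fraction) * green_rate + replaced_fraction * gamma
    return (effective - gamma) * math.sqrt(n_tokens) / math.sqrt(gamma * (1 - gamma))


for frac in (0.0, 0.25, 0.5, 0.75, 0.9):
    z = expected_z(300, 0.8, frac)
    print(f"{frac:.0%} of tokens rewritten -> expected z = {z:.1f}")
```

The expected score falls linearly with the share of rewritten tokens. In schemes where the subset depends on preceding tokens, a single substitution can also disturb how neighboring tokens are scored, so real degradation may be faster than this simple model suggests.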

Image watermarks face similar challenges. Screenshots, re-encoding at lower quality, cropping, or overlaying edits can weaken or destroy embedded signals. More sophisticated attacks, specifically designed to remove watermarks while preserving visual quality, have demonstrated high success rates in research settings.

The core tension: watermarks strong enough to survive aggressive manipulation tend to be detectable by adversaries, who can then target them more precisely. Watermarks subtle enough to resist detection by adversaries tend to be fragile against simple transformations.

False Positives and Detection Accuracy

No detection system is perfect. Statistical watermark detectors operate on probability thresholds. Setting the threshold too low produces false positives, flagging human-written content as AI-generated. Setting it too high produces false negatives, missing genuinely watermarked content.
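The trade-off can be made concrete under a simplifying assumption: that the detector reports a z-score which is approximately standard normal for unwatermarked text and shifted upward by some amount for watermarked text. The function names and the example values below are illustrative, not taken from a deployed detector.

```python
import math


def false_positive_rate(z_threshold):
    """Chance that unwatermarked text scores at or above the threshold
    (one-sided tail of a standard normal distribution)."""
    return 0.5 * math.erfc(z_threshold / math.sqrt(2))


def detection_rate(z_threshold, expected_z):
    """Chance that watermarked text clears the threshold, approximating its
    score as normal with mean expected_z and unit variance."""
    return 0.5 * math.erfc((z_threshold - expected_z) / math.sqrt(2))


for threshold in (2.0, 3.0, 4.0, 5.0):
    fpr = false_positive_rate(threshold)
    tpr = detection_rate(threshold, expected_z=4.5)
    print(f"threshold {threshold}: false positives {fpr:.2e}, detection {tpr:.1%}")
```

Raising the threshold drives false positives down quickly, but it also lowers the share of genuinely watermarked passages that are caught, especially when the expected score is modest.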

For text watermarking, short passages are particularly problematic. A 50-word paragraph does not contain enough token decisions to produce a reliable statistical signal. Detection confidence increases with length, but many real-world use cases involve short-form content: social media posts, comments, summaries, and email.

False positives carry real consequences. A student's original essay falsely flagged as AI-generated, or a journalist's article incorrectly identified as synthetic, creates harm that undermines trust in the detection system itself.

Adoption Fragmentation

Watermarking only works at scale if major AI providers adopt compatible standards. Currently, different companies use different watermarking approaches with different detection methods. There is no universal watermarking standard. Content generated by one provider's system cannot be verified by another provider's detector.

Open-source models, which account for a growing share of AI usage, typically do not implement watermarking at all. Users who want to generate unwatermarked content can simply use models that do not participate.

This fragmentation means watermarking functions as a partial signal, useful when present but unreliable as a universal indicator of AI origin. Its value depends entirely on the breadth and consistency of adoption across the ecosystem.

AI Watermarking vs. Other Provenance Methods

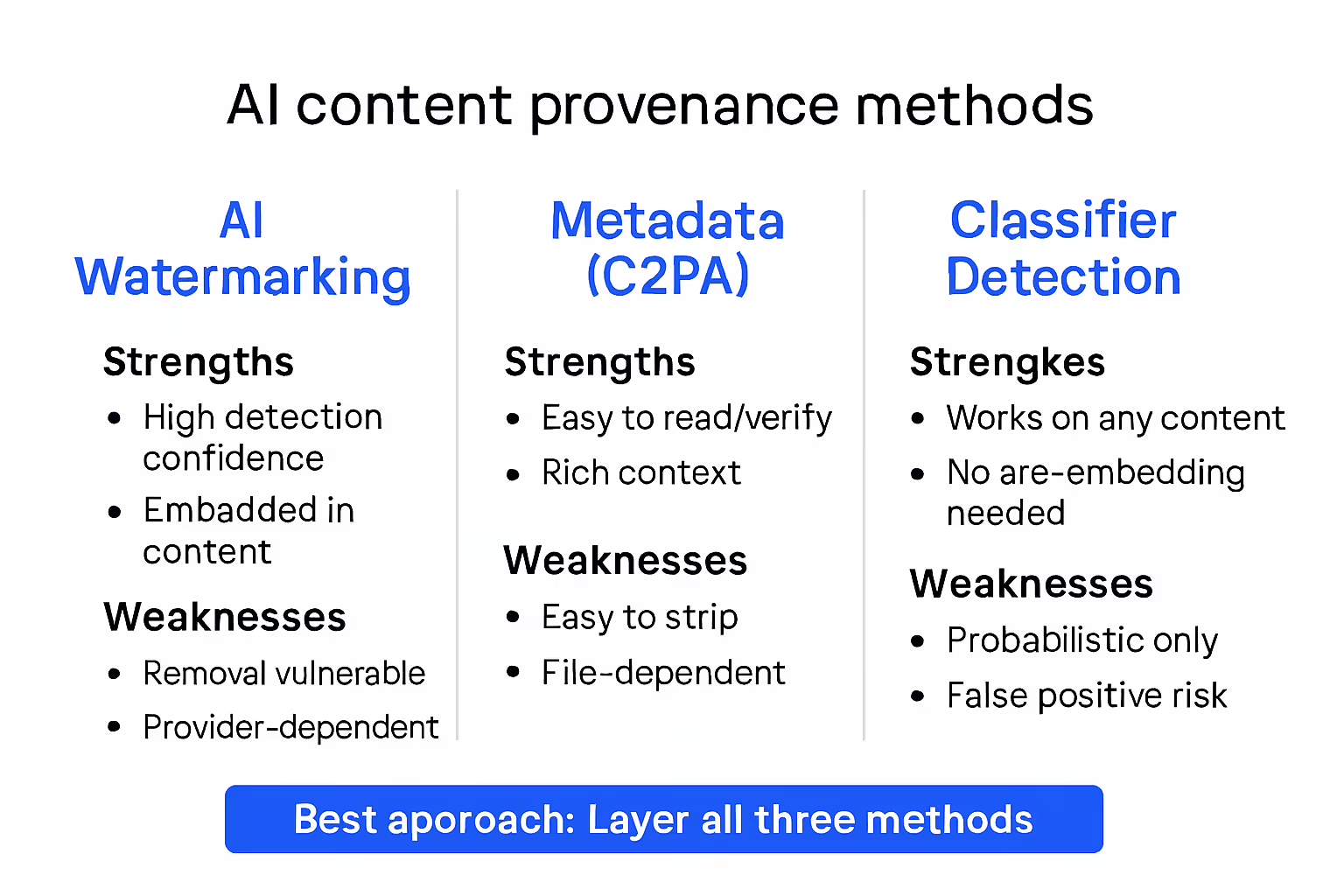

Watermarking is one tool in a broader provenance toolkit. Understanding how it compares with alternative approaches helps organizations design realistic detection strategies.

Metadata-based provenance (C2PA and content credentials). The Coalition for Content Provenance and Authenticity (C2PA) standard attaches cryptographically signed metadata to files, recording creation details, editing history, and AI involvement. Unlike watermarking, metadata is attached to the file rather than embedded in the content itself. This makes it easy to read and verify but also easy to strip. Saving an image without its metadata, or copying text into a new document, removes the provenance record entirely. Metadata approaches work well in controlled distribution environments but fail in open ecosystems where content is routinely copied, screenshotted, and re-shared.
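The strengths and weaknesses of attached metadata can be illustrated with a toy signed record. This sketch is not the C2PA format or API. It uses a shared-secret HMAC purely to keep the example short, where real provenance systems rely on public-key signatures, and the names `make_manifest`, `verify`, and `SIGNING_KEY` are hypothetical.

```python
import hashlib
import hmac
import json

SIGNING_KEY = b"demo-key"  # hypothetical shared secret, for illustration only


def make_manifest(content: bytes, generator: str) -> dict:
    """Build a signed provenance record that travels alongside the content."""
    claim = {"generator": generator, "sha256": hashlib.sha256(content).hexdigest()}
    payload = json.dumps(claim, sort_keys=True).encode()
    signature = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify(content: bytes, manifest) -> str:
    """Check the record's signature and that it still matches the content."""
    if manifest is None:
        return "no provenance record"
    payload = json.dumps(manifest["claim"], sort_keys=True).encode()
    expected = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return "manifest tampered"
    if manifest["claim"]["sha256"] != hashlib.sha256(content).hexdigest():
        return "content changed since signing"
    return "verified"


image = b"example image bytes"
manifest = make_manifest(image, "example-model")
print(verify(image, manifest))          # record intact and matching
print(verify(image, None))              # record stripped, e.g. by re-saving
print(verify(image + b"!", manifest))   # content edited after signing
```

Verification is exact when the record is present, but once the record is stripped there is nothing left to check, which is the gap that content-embedded watermarks aim to cover.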

Classifier-based AI detection. Detection tools analyze content statistically to estimate the probability that it was AI-generated. These tools do not rely on a pre-embedded signal; they look for patterns characteristic of AI output. The advantage is that they work on any content, regardless of origin. The disadvantage is accuracy. Classifiers produce probabilistic estimates, not binary certainties. They are prone to false positives, particularly with formulaic or technical writing, and their accuracy degrades as AI models evolve.

How watermarking fits. Watermarking offers higher detection confidence than classifiers because it tests for a known, deliberately embedded signal rather than inferring origin from incidental patterns. It is more robust than metadata because the signal is embedded in the content rather than attached to the file. But it is limited to content from cooperating providers and vulnerable to removal attacks.

The most effective provenance strategies layer multiple methods. Watermarking provides high-confidence detection for cooperating systems. Metadata adds context where file integrity is maintained. Classifiers offer a fallback for content where neither watermark nor metadata is present. No single approach is sufficient on its own.
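One way to picture the layering is as an ordered set of checks, strongest evidence first. The sketch below is hypothetical throughout: the `Evidence` fields, the thresholds, and the wording of the verdicts are placeholders that an organization would replace with its own detectors and policy.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Evidence:
    watermark_z: Optional[float] = None      # None if no detector applies to this content
    metadata_status: Optional[str] = None    # "verified", or None if no record is attached
    classifier_prob: Optional[float] = None  # estimated probability of AI origin, 0 to 1


def assess(e: Evidence, z_threshold: float = 4.0, clf_threshold: float = 0.9) -> str:
    """Combine the three signals, preferring the highest-confidence one available."""
    if e.watermark_z is not None and e.watermark_z >= z_threshold:
        return "AI-generated (watermark detected)"
    if e.metadata_status == "verified":
        return "origin as stated in signed metadata"
    if e.classifier_prob is not None and e.classifier_prob >= clf_threshold:
        return "possibly AI-generated (classifier only, needs review)"
    return "unknown origin"


print(assess(Evidence(watermark_z=7.2)))
print(assess(Evidence(metadata_status="verified")))
print(assess(Evidence(watermark_z=0.3, classifier_prob=0.95)))
print(assess(Evidence()))
```

Note that the final case returns "unknown origin" rather than "human-created": the absence of every signal is not evidence of human authorship.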

What Organizations Should Consider

For most organizations, AI watermarking is not something to build but something to evaluate in the tools they already use or plan to adopt.

Check what your AI providers offer. Major providers including Google, OpenAI, and Meta have implemented or announced watermarking capabilities for their models. Before investing in separate solutions, verify whether the AI tools your organization uses already watermark outputs. Understand what detection methods are available and whether they are accessible to your team.

Assess your detection needs. Organizations that publish content, operate media platforms, or manage user-generated submissions have a stronger need for watermark detection than organizations using AI internally for operational tasks. Match your investment in detection capability to the actual risk profile: where does undetected AI content create harm in your context?

Set realistic expectations. Watermarking is a useful signal, not a guarantee. It improves confidence when detected, but absence of a watermark does not prove content is human-created. Organizations that build policies solely around watermark detection will encounter edge cases where the system fails: paraphrased content, open-source model outputs, and short-form text.

Combine with other verification methods. Use watermark detection alongside metadata checks, content evaluation processes, and editorial review. A layered approach compensates for the weaknesses of any single method.

Monitor the standards landscape. Watermarking standards are evolving rapidly. Industry coalitions, regulatory requirements, and technical research will reshape what is possible and expected within the next few years. Organizations do not need to commit to a permanent watermarking strategy today, but they should track developments and be prepared to adopt emerging standards as they mature.

Watermarking is a valuable piece of the content integrity puzzle. Treating it as the entire solution overpromises; ignoring it entirely underinvests in a capability that regulatory and market pressures will increasingly expect.

Frequently Asked Questions

Can AI watermarks be removed?

Yes, with varying difficulty. Text watermarks can be weakened through paraphrasing, translation, or rewriting. Image watermarks can be degraded through screenshots, re-encoding, cropping, or targeted removal attacks. The robustness of a watermark depends on its design and the sophistication of the removal attempt. Current watermarking technology makes casual removal difficult but does not prevent determined, technically skilled adversaries from stripping watermarks while preserving content quality.

Does AI watermarking affect content quality?

Well-designed watermarks aim to produce no perceptible quality loss. For text, the watermarked output should read as naturally as unwatermarked text because the model selects from statistically equivalent alternatives. For images and audio, watermarks are embedded below the threshold of human perception, so users should not be able to tell watermarked from unwatermarked content. Noticeable quality degradation is a sign of poorly implemented watermarking, not an inherent characteristic.

Which AI companies use watermarking?

Google DeepMind's SynthID watermarks text generated by its Gemini models and images from its image generation models. OpenAI attaches C2PA metadata to generated images, which is provenance metadata rather than an embedded watermark, and has researched text watermarking methods. Meta has released watermarking research and tools for its model outputs. The landscape is evolving, and implementation details vary. Not all watermarking systems are interoperable, and detection tools from one provider cannot verify watermarks from another.

Is AI watermarking required by law?

Requirements vary by jurisdiction. The EU AI Act includes transparency obligations for AI-generated content that will require disclosure mechanisms, though specific technical requirements are still being defined. China requires AI-generated content to be labeled. In the United States, there are no federal mandates, but executive guidance has encouraged voluntary watermarking adoption by AI companies. The regulatory trajectory across major jurisdictions points toward increasing expectations for provenance transparency.