What AI Readiness Means

AI readiness is an organization's capacity to adopt, deploy, and sustain AI tools effectively. It measures whether the necessary conditions exist for AI to produce value, not whether AI has already been deployed.

This distinction separates readiness from two adjacent concepts.

AI readiness is not AI maturity. Maturity describes where an organization sits on a spectrum of AI sophistication, from no AI usage to fully integrated, autonomous systems. Readiness is the prerequisite question: does this organization have the data, infrastructure, skills, governance, and culture to begin AI adoption successfully? An organization can have zero AI maturity and still score high on readiness if the foundational conditions are in place.

AI readiness is not AI adoption. Adoption measures what has been deployed. Readiness measures what can be deployed without predictable failure. Organizations that skip readiness assessment and jump to adoption frequently encounter the same problems: incompatible data systems, untrained staff, missing policies, and cultural resistance that undermines implementation.

Readiness is a diagnostic, not a destination. It identifies gaps before they become failures and directs investment toward the conditions that matter most for successful deployment.

Why Readiness Assessment Matters Before AI Adoption

Skipping readiness assessment does not save time. It redistributes the cost into more expensive failure modes.

Tool abandonment. Organizations purchase AI tools, deploy them to teams that lack the skills or data infrastructure to use them, and watch adoption plateau within months. The tool works. The environment does not support it. License costs continue while value stalls.

Data incompatibility. AI tools depend on structured, accessible, sufficiently clean data. Organizations that do not assess data readiness discover mid-deployment that their records are fragmented across systems, inconsistently formatted, or inaccessible through APIs. Retrofitting data infrastructure after selecting an AI tool is slower and more expensive than evaluating it beforehand.

Governance gaps surface at the worst moment. Without pre-established policies on data usage, privacy, and decision authority, organizations face reactive crises. A team deploys an AI tool that processes sensitive information, and legal discovers the data handling violates internal policy or regulatory requirements. The tool gets suspended, trust erodes, and the next AI initiative faces greater institutional skepticism.

Cultural resistance hardens. When AI tools arrive without preparation, training, or context, staff interpret the deployment as a threat rather than a resource. First impressions are difficult to reverse. Organizations that launch AI tools into unprepared teams create adversaries where they needed advocates.

Readiness assessment prevents these patterns by identifying which conditions are met and which require investment before deployment begins. The cost of assessment is a fraction of the cost of recovering from premature adoption.

Five Dimensions of AI Readiness

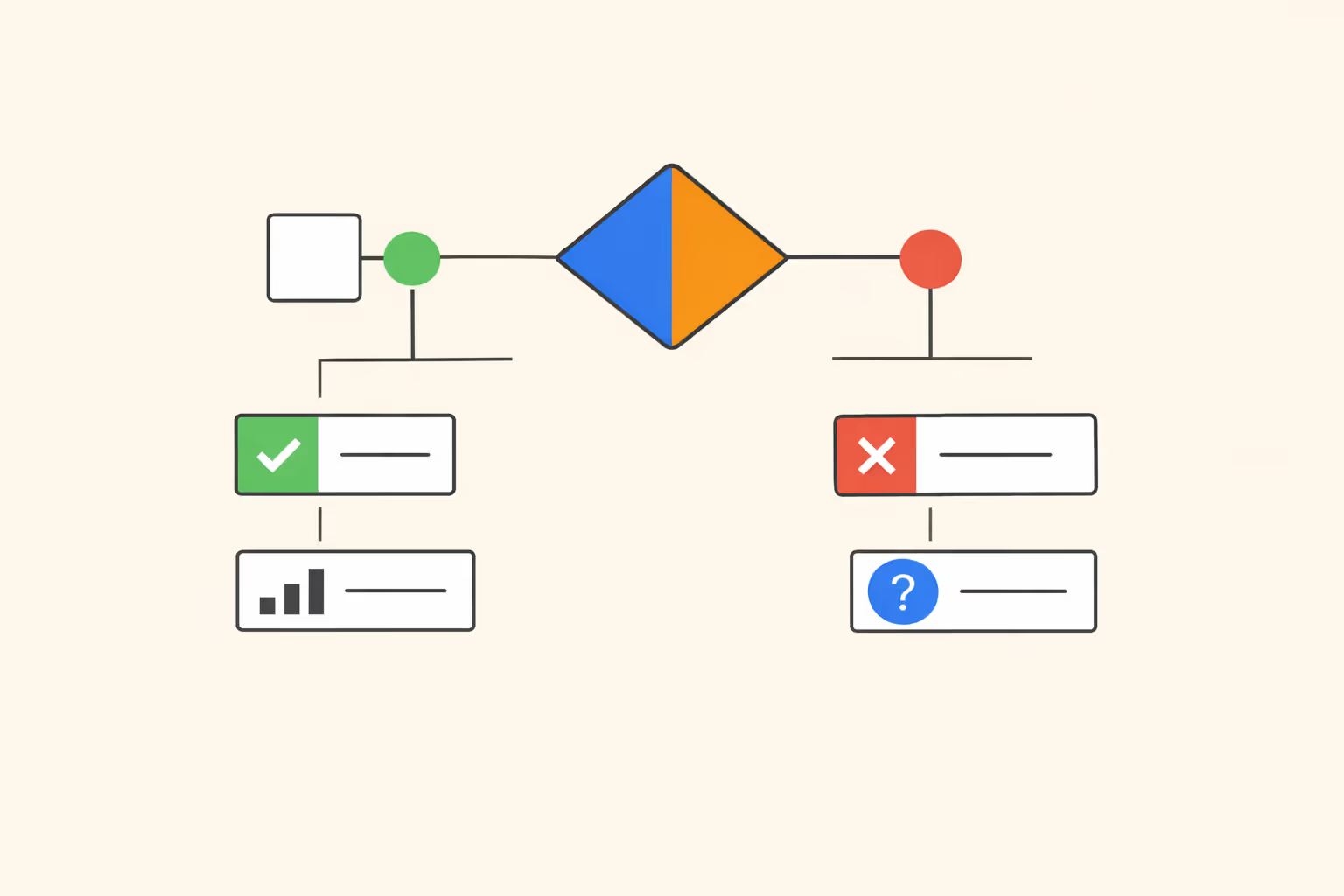

AI readiness is not a single score. It is a profile across multiple dimensions, each of which can independently block or enable successful deployment.

Five dimensions capture the full readiness picture.

Data Readiness

AI systems are only as useful as the data they access. Data readiness evaluates whether the organization's data is structured, accessible, sufficiently accurate, and governed by clear ownership and quality standards.

Key questions include whether data is centralized or fragmented, whether it can be accessed programmatically through APIs, whether sensitive data is classified and protected, and whether data quality is actively monitored. Organizations with fragmented, siloed, or poorly documented data will struggle to deploy AI regardless of how capable the tools are.

Infrastructure and Technical Readiness

Infrastructure readiness assesses whether the organization's technical environment can support AI tools. This includes compute capacity, system integration capabilities, security posture, and the availability of technical staff to manage AI deployments.

Cloud-based platforms reduce infrastructure requirements but introduce dependency on vendor ecosystems. On-premise AI deployments require more infrastructure investment but offer greater data control. The right choice depends on regulatory context, data sensitivity, and existing technical architecture.

Skills and Talent Readiness

AI adoption requires multiple skill sets across different roles. Technical teams need competency in data engineering, model evaluation, and system integration. End users need literacy in prompt design, output interpretation, and responsible use. Leaders need enough understanding to set strategy, evaluate vendors, and govern deployments.

Skills readiness is not about hiring AI specialists. It is about whether existing staff can be trained effectively within their roles. The gap between current skill levels and required competencies determines the training investment needed before deployment.

Governance and Policy Readiness

Governance readiness evaluates whether the organization has the policies, decision structures, and accountability mechanisms to manage AI responsibly. This includes data usage policies, ethical guidelines, vendor evaluation standards, and escalation procedures for AI-related incidents.

Organizations without governance readiness deploy AI tools into a policy vacuum. Decisions about acceptable use, data handling, and accountability are made ad hoc, creating inconsistency and risk.

Cultural Readiness

Cultural readiness measures whether the organization's people, leadership included, are prepared to work alongside AI systems. This goes beyond attitude surveys. It includes whether leadership communicates a clear rationale for AI, whether teams trust that AI supports rather than replaces their work, and whether the organization tolerates the experimentation and iteration that AI adoption requires.

Cultural resistance is the most difficult readiness gap to close because it cannot be solved with a purchase or a policy. It requires sustained communication, demonstrated value, and genuine responsiveness to concerns.

All five dimensions interact. Strong data infrastructure means little if governance is absent. Advanced skills are underused if culture resists change. Readiness assessment must evaluate all five to produce an actionable picture.

AI Readiness Checklist: 25 Questions to Ask

Use this checklist to evaluate your team's or organization's readiness across all five dimensions. Each question targets a specific readiness condition. Rate each as "Yes," "Partially," or "No" to identify where gaps exist.

Data Readiness

Infrastructure and Technical Readiness

Skills and Talent Readiness

Governance and Policy Readiness

Cultural Readiness

How to Score and Interpret Your Results

A checklist without interpretation is a list. Scoring converts answers into a readiness profile that guides decisions.

Scoring approach. For each question, assign 2 points for "Yes," 1 point for "Partially," and 0 points for "No." With five questions per dimension, each dimension totals at most 10 points and the overall score at most 50 points.
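The scoring arithmetic above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the function names, the short dimension labels, and the sample answers are all hypothetical, and the only rules taken from the text are the 2/1/0 point values and the five-questions-per-dimension layout.

```python
from typing import Dict, List

# Point values taken from the scoring approach described in the text.
POINTS = {"Yes": 2, "Partially": 1, "No": 0}


def score_dimension(answers: List[str]) -> int:
    """Sum the points for one dimension's answers (five answers, max 10)."""
    return sum(POINTS[answer] for answer in answers)


def score_checklist(responses: Dict[str, List[str]]) -> Dict[str, int]:
    """Return per-dimension totals plus an 'Overall' total (max 50)."""
    scores = {dim: score_dimension(answers) for dim, answers in responses.items()}
    scores["Overall"] = sum(scores.values())
    return scores


# Hypothetical answers, invented purely for illustration.
responses = {
    "Data": ["Yes", "Partially", "No", "Yes", "Partially"],
    "Infrastructure": ["Yes", "Yes", "Partially", "Partially", "No"],
    "Skills": ["Partially", "Partially", "No", "No", "Yes"],
    "Governance": ["No", "No", "Partially", "No", "No"],
    "Culture": ["Yes", "Partially", "Partially", "Yes", "Yes"],
}

profile = score_checklist(responses)
for name, total in profile.items():
    print(f"{name}: {total}")
```

Keeping the per-dimension totals alongside the overall total preserves the profile view: in this made-up example a middling overall score hides a very low governance score, which is the kind of gap a single number would obscure.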

Interpreting dimension scores:

Interpreting overall scores:

Priority logic. Not all gaps carry equal weight. Governance and data gaps tend to create the hardest recovery problems because they affect every deployment. Skills gaps can be closed through targeted training programs. Cultural gaps require the most time but are manageable with sustained effort.

Score the checklist periodically, not once. Readiness changes as the organization invests, learns, and evolves.
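One way to make periodic scoring useful is to compare dimension totals between two assessments. The sketch below assumes each assessment is stored as a mapping from dimension name to its 0 to 10 total; the function name and both sets of scores are hypothetical and exist only to show the comparison.

```python
from typing import Dict


def score_changes(previous: Dict[str, int], current: Dict[str, int]) -> Dict[str, int]:
    """Return the change in each dimension's total between two assessments."""
    return {dim: current[dim] - previous[dim] for dim in current}


# Hypothetical dimension totals from two assessments, invented for illustration.
previous = {"Data": 4, "Infrastructure": 6, "Skills": 4, "Governance": 1, "Culture": 8}
current = {"Data": 7, "Infrastructure": 6, "Skills": 5, "Governance": 6, "Culture": 7}

changes = score_changes(previous, current)

# Dimensions whose score dropped since the last assessment deserve a closer look.
regressed = [dim for dim, delta in changes.items() if delta < 0]

for dim, delta in changes.items():
    print(f"{dim}: {delta:+d}")
```

Tracking the change per dimension, rather than only the overall total, shows whether a specific investment moved the dimension it was meant to move and flags any dimension that slipped in the meantime.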

Common Readiness Gaps and How to Close Them

Assessment identifies gaps. Closing them requires directed action. Five gaps appear most frequently across organizations evaluating AI readiness.

Fragmented data systems. Data spread across disconnected tools with no integration layer is the most common technical blocker. Remediation starts with a data audit that maps sources, formats, access methods, and ownership. From there, prioritize integration for the data sets most relevant to your target AI use case. Full enterprise data unification is not required; targeted integration for specific workflows is usually sufficient.

No AI governance policy. Many organizations have general technology policies but nothing specific to AI. The fastest path is a lightweight AI use policy that covers acceptable uses, prohibited uses, data handling expectations, and escalation procedures. This does not need to be comprehensive on day one. A living document that evolves with experience is more valuable than a delayed, overly detailed policy.

Skill gaps at the user level. Technical teams often have AI familiarity, but end users do not. Closing this gap requires structured training that teaches practical skills: how to write effective prompts, how to evaluate AI outputs critically, and when to escalate to a human. Short, role-specific training sessions produce better results than generic AI overviews.

Leadership misalignment. When executives sponsor AI initiatives without understanding the operational requirements, teams receive mandates without resources. Leadership alignment requires structured briefings that cover what AI can and cannot do, what readiness conditions are necessary, and what investment is needed before deployment will succeed.

Cultural skepticism. Staff who fear AI will replace their roles or add unpredictable workload resist adoption regardless of technical readiness. Address this with transparency: share the specific use cases being considered, explain what will and will not change in their workflows, and create channels for feedback. Early pilots that demonstrate tangible value to the people using the tools are the strongest cultural intervention.

Each of these gaps is closable. None requires years of investment. Most can be meaningfully improved within one to two quarters with focused effort and clear ownership.

Frequently Asked Questions

How often should organizations reassess AI readiness?

Reassess at least annually, and additionally before any major AI initiative. Readiness conditions change as organizations invest in infrastructure, train staff, update policies, and gain experience with AI tools. A readiness profile from six months ago may not reflect current capabilities. Periodic reassessment also helps leadership track whether gap-closure investments are producing measurable improvement.

What is the difference between AI readiness and AI maturity?

AI readiness measures whether the conditions for successful AI adoption exist: data quality, infrastructure, skills, governance, and culture. AI maturity measures how deeply AI is integrated into operations and decision-making. Readiness is a precondition; maturity is a result. An organization can be highly ready but have low maturity if it has not yet deployed AI. Conversely, low readiness with attempted deployment produces fragile, unsustainable adoption.

Can small teams use an AI readiness checklist?

Yes. The five dimensions apply regardless of team size. Small teams often have advantages in cultural readiness and governance speed, since fewer stakeholders mean faster decision-making. The primary constraints for small teams are typically data access and technical infrastructure. Scale the checklist to your context: not every question will apply equally, but the dimensional framework remains useful for identifying blind spots.

What is the most commonly underestimated readiness dimension?

Cultural readiness. Organizations invest heavily in data, infrastructure, and tools but underestimate how much adoption depends on people's willingness and ability to work with AI systems. Technical readiness without cultural buy-in produces tools that sit unused. The most successful AI deployments pair technical investment with sustained communication, training, and visible responsiveness to staff concerns.
