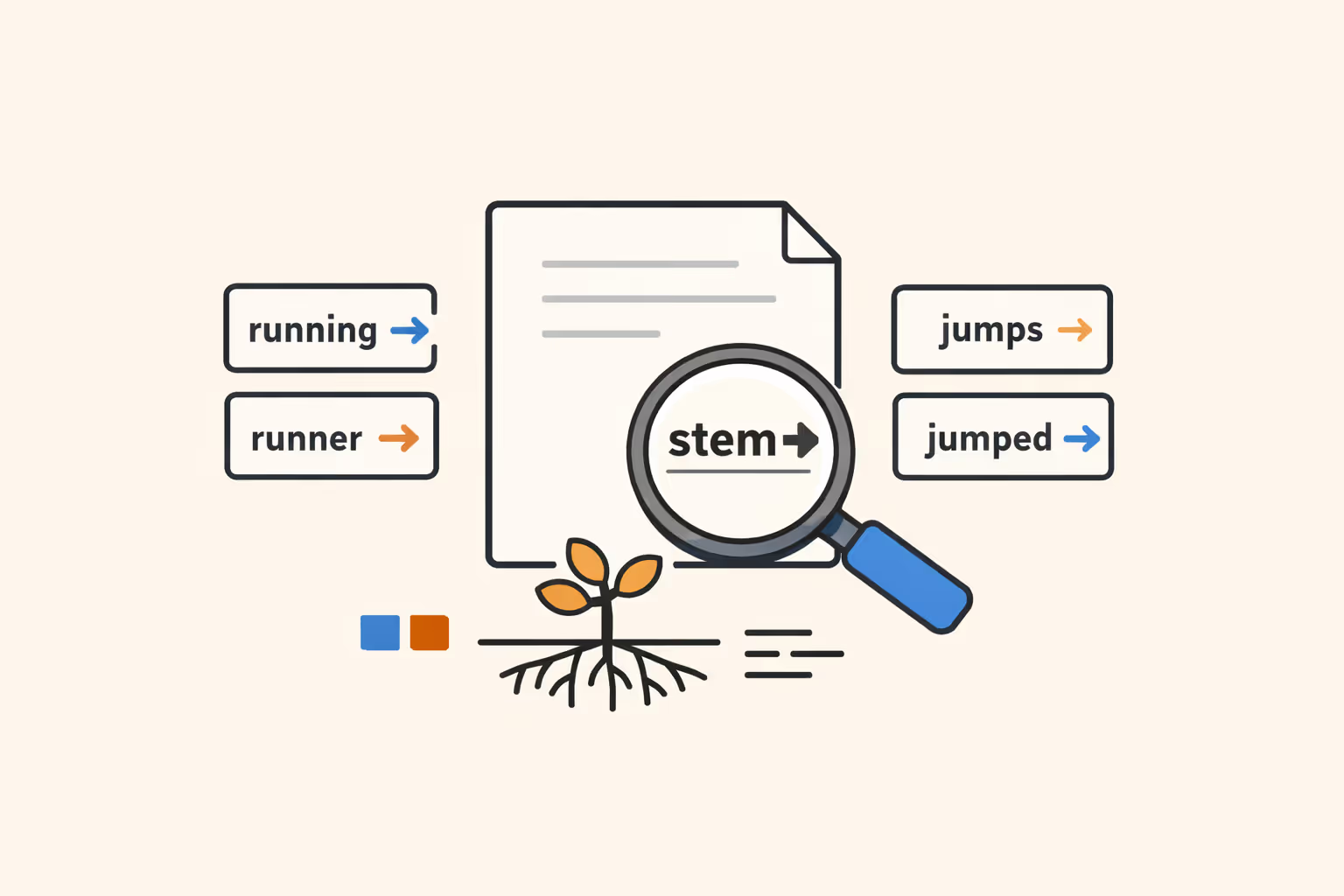

What Is Stemming?

Stemming is a text normalization technique in natural language processing that reduces words to their root or base form by stripping affixes, most often suffixes. The resulting form, called a stem, does not need to be a valid dictionary word. It only needs to represent the common base shared by related word variants so that a system treats them as equivalent.

For example, "running" and "runs" both reduce to the stem "run," and more aggressive stemmers map "runner" there as well. The words "connection," "connected," and "connecting" reduce to "connect." By collapsing these surface variations into a single canonical form, stemming reduces the vocabulary size a system must handle and helps it recognize that different word forms carry the same core meaning.

Stemming is one of the foundational preprocessing steps in computational linguistics and information retrieval. It emerged in the early days of search engine design, where matching a user query to relevant documents required treating morphological variants as the same term.

Despite the rise of more sophisticated approaches based on machine learning and deep contextual models, stemming remains widely used in systems where speed, simplicity, and computational efficiency matter.

How Stemming Works

Stemming operates by applying a set of rules or heuristics to strip known affixes from words. The process is mechanical rather than linguistic. It does not consult a dictionary or analyze the grammatical role of a word in context. Instead, it follows a deterministic sequence of character removal steps based on patterns.

A typical stemming algorithm scans a word from right to left, looking for character sequences that match known suffixes. When it finds a match, it checks whether removing the suffix satisfies certain conditions, such as a minimum remaining word length, and then performs the removal. Some algorithms apply multiple rounds of suffix stripping, progressively reducing the word until no more rules apply.

Consider the word "generalization." A stemmer might first remove "-ation" to produce "generaliz," then strip the trailing "-iz" to yield "general." The intermediate forms are not meaningful words on their own, but the final stem "general" captures the root concept. For the word "caresses," the stemmer would remove "-es" to produce "caress," which matches the stem for "caressing" and "caressed."
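The suffix-stripping step described above can be sketched in a few lines. This is a minimal illustration, not a published algorithm: the rule list, the `MIN_STEM_LENGTH` threshold, and the function name `strip_suffix` are all assumptions made for the example.

```python
# Hypothetical (suffix, replacement) rules, tried in order; longer endings
# come first so that "sses" is checked before "s".
RULES = [
    ("sses", "ss"),
    ("ies", "i"),
    ("ing", ""),
    ("ed", ""),
    ("ss", "ss"),  # keeps "caress" from losing its final "s"
    ("s", ""),
]
MIN_STEM_LENGTH = 3  # assumed threshold for this sketch


def strip_suffix(word: str) -> str:
    """Apply the first rule whose suffix matches and whose result is long enough."""
    word = word.lower()
    for suffix, replacement in RULES:
        if word.endswith(suffix):
            candidate = word[: len(word) - len(suffix)] + replacement
            if len(candidate) >= MIN_STEM_LENGTH:
                return candidate
    return word


for w in ["caresses", "caressing", "caressed", "sing"]:
    print(w, "->", strip_suffix(w))
```

The three "caress" variants end up with the same stem, while "sing" is left alone because stripping "ing" would leave a single letter.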

This rule-based approach contrasts with lemmatization, which uses vocabulary lookups and morphological analysis to return a word's actual dictionary form, or lemma. Stemming sacrifices linguistic precision for speed. A stemmer can process millions of words per second because it performs simple string operations without needing to load a full lexicon or parse sentence structure.

The preprocessing pipeline in a typical NLP system applies stemming after tokenization (splitting text into individual words) and often after lowercasing and stop word removal. The stemmed output then feeds into downstream tasks such as indexing, term frequency calculation, or feature extraction for machine learning classifiers.
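As a rough sketch of that ordering, the pipeline below tokenizes, lowercases, drops stop words, and then stems. The stop-word list and the `toy_stem` function are placeholders invented for this example; a real system would substitute a proper stemmer.

```python
import re

STOP_WORDS = {"the", "a", "an", "is", "are", "of", "and"}  # tiny illustrative list


def toy_stem(token: str) -> str:
    """Placeholder stemmer: strips a few suffixes if at least 3 letters remain."""
    for suffix in ("ing", "ed", "s"):
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)]
    return token


def preprocess(text: str, stem=toy_stem) -> list:
    tokens = re.findall(r"[A-Za-z']+", text)             # 1. tokenize
    tokens = [t.lower() for t in tokens]                  # 2. lowercase
    tokens = [t for t in tokens if t not in STOP_WORDS]   # 3. remove stop words
    return [stem(t) for t in tokens]                      # 4. stem


print(preprocess("The engine is indexing the documents"))
```

The returned list is what would feed indexing or feature extraction downstream.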

Types of Stemming Algorithms

Several stemming algorithms have been developed over the decades, each reflecting a different trade-off between accuracy, speed, and linguistic coverage. The most widely used fall into three categories: algorithmic stemmers, lookup-based stemmers, and hybrid approaches.

Porter Stemmer

The Porter Stemmer, introduced by Martin Porter in 1980, is the best-known stemming algorithm in NLP. It was designed for English and uses a series of five sequential phases, each containing a set of condition-action rules. The conditions evaluate properties of the remaining word stem, such as the number of vowel-consonant sequences it contains (its "measure"), and the actions remove or replace specific suffixes.
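The condition most Porter rules rely on is the measure m, defined by writing a stem in the form [C](VC)^m[V], where C and V are runs of consonants and vowels. The sketch below computes m; the function names are my own, and it covers only this one condition, not the full algorithm.

```python
def is_consonant(word: str, i: int) -> bool:
    """Porter's convention: 'y' is a vowel only when it follows a consonant."""
    ch = word[i]
    if ch in "aeiou":
        return False
    if ch == "y":
        return i == 0 or not is_consonant(word, i - 1)
    return True


def measure(stem: str) -> int:
    """Count the VC sequences in a stem written as [C](VC)^m[V]."""
    stem = stem.lower()
    pattern = "".join(
        "c" if is_consonant(stem, i) else "v" for i in range(len(stem))
    )
    # Every vowel-to-consonant transition closes one VC sequence.
    return sum(1 for a, b in zip(pattern, pattern[1:]) if a == "v" and b == "c")


for w in ["tree", "trouble", "troubles"]:
    print(w, measure(w))
```

A rule such as "remove the suffix only if m > 1" then becomes a simple comparison on the candidate stem.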

For instance, the Porter Stemmer maps "relational" to "relat," "conditional" to "condit," and "hopefulness" to "hopeful" (then to "hope" in a later phase). The algorithm is fast, deterministic, and easy to implement, which explains its widespread adoption in search engines, text classifiers, and research prototypes. Its main limitation is that it sometimes produces stems that are unintuitive or that collapse unrelated words into the same root.

Snowball Stemmer

The Snowball Stemmer, whose English variant is also called Porter2, is an improved and generalized version of the Porter Stemmer, also developed by Martin Porter. It is written in a dedicated programming language called Snowball that allows stemming rules to be expressed as formal procedures. This design makes it straightforward to create stemmers for many languages without rewriting the core engine.

Snowball stemmers exist for English, French, German, Spanish, Italian, Portuguese, Dutch, Swedish, Norwegian, Danish, Russian, Finnish, Hungarian, Romanian, Turkish, and several other languages. The English Snowball Stemmer produces slightly more accurate results than the original Porter Stemmer and is the recommended choice for most English natural language processing tasks that require stemming.

Lancaster Stemmer

The Lancaster Stemmer (also known as the Paice/Husk Stemmer) takes an aggressive approach to suffix stripping. It applies iterative rules that continue removing characters until no more rules match, often producing very short stems. The word "maximum" might be reduced to "maxim" or even "max," while "presumably" might become "presum."

This aggressiveness means the Lancaster Stemmer achieves high recall (it rarely misses related words) but lower precision (it sometimes merges unrelated words into the same stem). It is useful in scenarios where casting a wide net matters more than exact matching, such as exploratory semantic search over large document collections.
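To see why iterating until no rule matches produces short stems, here is a small sketch that records every intermediate form. The suffix list and length threshold are hypothetical and far smaller than the real Paice/Husk rule table.

```python
SUFFIXES = ["ation", "ness", "ing", "ful", "iz", "al", "s"]  # hypothetical rules
MIN_STEM_LENGTH = 3  # assumed threshold


def stem_trace(word: str) -> list:
    """Strip suffixes repeatedly and return every form along the way."""
    forms = [word.lower()]
    while True:
        current = forms[-1]
        for suffix in SUFFIXES:
            remainder = current[: len(current) - len(suffix)]
            if current.endswith(suffix) and len(remainder) >= MIN_STEM_LENGTH:
                forms.append(remainder)
                break
        else:
            # No rule fired in this pass, so the word is fully reduced.
            return forms


print(stem_trace("generalization"))
print(stem_trace("hopefulness"))
```

Each extra pass removes more of the word, which is the source of both the high recall and the lower precision of aggressive stemmers.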

Lovins Stemmer

The Lovins Stemmer, developed by Julie Beth Lovins in 1968, was one of the first published stemming algorithms. It uses a single-pass, longest-match approach, removing the longest suffix it can find from a predefined list of 294 endings. After suffix removal, it applies a set of recoding rules to clean up the resulting stem.
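The longest-match idea can be illustrated with a toy ending list. Everything here is a simplification: the endings are a handful chosen for the example, the minimum stem length is an assumption, and the single recoding rule (undoubling a final consonant) stands in for the full recoding table.

```python
# A few endings for illustration only; the published list is far longer.
ENDINGS = ["ational", "ization", "ations", "ation", "ings", "ing", "ness", "ed", "s"]
MIN_STEM_LENGTH = 2  # assumed for this sketch
UNDOUBLE = set("bdglmnprst")


def recode(stem: str) -> str:
    """Simplified recoding: collapse a doubled final consonant ("sitt" -> "sit")."""
    if len(stem) >= 2 and stem[-1] == stem[-2] and stem[-1] in UNDOUBLE:
        return stem[:-1]
    return stem


def longest_match_stem(word: str) -> str:
    """Remove the single longest matching ending, then recode. One pass only."""
    word = word.lower()
    for ending in sorted(ENDINGS, key=len, reverse=True):
        if word.endswith(ending) and len(word) - len(ending) >= MIN_STEM_LENGTH:
            return recode(word[: -len(ending)])
    return word


print(longest_match_stem("sitting"))
print(longest_match_stem("nationalization"))
```

Because there is only one pass, "nationalization" stops at "national" rather than being reduced further.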

The Lovins Stemmer is historically significant but is rarely used in modern systems. Its single-pass design can be too aggressive or too conservative depending on the word, and it lacks the iterative refinement of later algorithms.

Lookup-Based and Hybrid Stemmers

Some stemming approaches use precomputed dictionaries rather than rules. A lookup-based stemmer stores every known word form alongside its stem, returning the stem through a simple table lookup. This approach is highly accurate for words in the dictionary but fails on novel or misspelled words.

Hybrid stemmers combine rule-based suffix stripping with dictionary lookups, using the dictionary when a word is known and falling back to algorithmic stemming otherwise. These approaches attempt to balance the speed of rule-based methods with the accuracy of dictionary-based methods, and they are particularly useful in language modeling pipelines that must handle both standard vocabulary and domain-specific terminology.
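A hybrid stemmer of this kind might look like the sketch below. The `LOOKUP` table and the fallback rules are invented for illustration; a production system would load a much larger dictionary.

```python
# Hypothetical dictionary of known word forms and their stems.
LOOKUP = {
    "alumni": "alumn",
    "alumnus": "alumn",
    "news": "news",  # protected: the fallback rule would strip the "s"
}


def rule_based_stem(word: str) -> str:
    """Fallback: strip a few suffixes if at least 3 letters remain."""
    for suffix in ("ing", "ed", "s"):
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word


def hybrid_stem(word: str) -> str:
    """Use the dictionary when the word is known, otherwise apply the rules."""
    word = word.lower()
    if word in LOOKUP:
        return LOOKUP[word]
    return rule_based_stem(word)


for w in ["Alumni", "news", "connected"]:
    print(w, "->", hybrid_stem(w))
```

Known words get their curated stem, and anything outside the dictionary still receives a best-effort result from the rules.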

| Type | Description | Best For |
|---|---|---|
| Porter Stemmer | Five sequential phases of condition-action suffix rules for English (1980). | Search engines, text classifiers, and research prototypes |
| Snowball Stemmer | Refinement of the Porter Stemmer (Porter2 for English), written in the Snowball language, with stemmers for many languages. | Most English tasks that need stemming; other languages with a Snowball stemmer |
| Lancaster Stemmer | Aggressive, iterative suffix stripping (Paice/Husk) that often yields very short stems. | Exploratory search where recall matters more than precision |
| Lovins Stemmer | Single-pass, longest-match suffix removal followed by recoding (1968). | Historical reference; rarely used in modern systems |
| Lookup-Based and Hybrid Stemmers | Dictionary lookup of known word forms, optionally with a rule-based fallback. | Pipelines that mix standard vocabulary with domain-specific terminology |

Stemming vs Lemmatization

Stemming and lemmatization both aim to reduce words to a common base form, but they differ fundamentally in method, output quality, and computational cost. Understanding the distinction is important for choosing the right approach in any NLP pipeline.

Stemming uses heuristic rules to chop affixes. It does not consider the word's part of speech, its meaning, or its context in a sentence. The output is a stem, which may not be a real word. For example, stemming "better" typically produces "better" (unchanged) or "bet," neither of which captures the relationship to "good." Stemming "studies" might yield "studi," which is not a valid English word.

Lemmatization performs a deeper analysis. It identifies the word's part of speech, consults a morphological dictionary, and returns the lemma, the canonical dictionary form. "Better" lemmatizes to "good." "Studies" lemmatizes to "study." "Was" lemmatizes to "be." This linguistic accuracy makes lemmatization more reliable for tasks that depend on precise word meaning, such as natural language understanding, question answering, and sentiment analysis.

The trade-off is speed and complexity. Stemming runs in microseconds per word and requires no external resources. Lemmatization requires a dictionary, a part-of-speech tagger, and often a full morphological analyzer, making it slower and more resource-intensive. In large-scale information retrieval systems that process billions of documents, stemming's speed advantage is significant.

In practice, the choice depends on the task. Search engines and document indexing systems often use stemming because approximate matching across large corpora is sufficient. Conversational AI systems, machine translation pipelines, and applications requiring nuanced meaning typically prefer lemmatization.

Modern systems built on transformer models and subword tokenization sometimes bypass both approaches entirely, encoding morphological information directly in learned vector embeddings.

Stemming Use Cases

Stemming appears across a wide range of applications where reducing vocabulary size and matching word variants improve system performance. The following are the most common and impactful use cases.

Information Retrieval and Search Engines

Search is the original and most prominent use case for stemming. When a user searches for "teaching strategies," a search engine with stemming will also match documents containing "teach" and "teaches," and, with a more aggressive stemmer, "teacher." Irregular forms such as "taught" are not matched by suffix stripping alone. Without stemming, the engine would only return documents containing the exact query terms, missing many relevant results.

Modern search engines often combine stemming with other text normalization steps, synonym expansion, and ranking algorithms. Stemming reduces the index size by mapping multiple word forms to a single stem, which also improves storage efficiency and query speed. Large-scale systems serving semantic search queries use stemming as one layer in a broader retrieval architecture.
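The effect on an index can be shown with a toy inverted index that stores stems instead of surface forms. The documents, the `toy_stem` rules, and the function names are assumptions for this sketch; real engines add ranking, synonyms, and much more.

```python
import re
from collections import defaultdict


def toy_stem(token: str) -> str:
    """Placeholder stemmer: strips a few suffixes if at least 3 letters remain."""
    for suffix in ("ing", "es", "s"):
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)]
    return token


def stems_of(text: str) -> list:
    return [toy_stem(t) for t in re.findall(r"[a-z]+", text.lower())]


def build_index(docs: dict) -> dict:
    """Map each stem to the set of document ids that contain it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for stem in stems_of(text):
            index[stem].add(doc_id)
    return index


def search(index: dict, query: str) -> set:
    """Return ids of documents containing every stemmed query term."""
    postings = [index.get(stem, set()) for stem in stems_of(query)]
    return set.intersection(*postings) if postings else set()


DOCS = {
    1: "She teaches math",
    2: "Teaching strategies for large classes",
    3: "A history of the universe",
}
index = build_index(DOCS)
print(search(index, "teach"))
print(search(index, "teaching strategies"))
```

The index holds a single "teach" entry rather than separate entries for "teaches" and "teaching," which is where the storage saving comes from.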

Text Classification and Sentiment Analysis

In text classification tasks, stemming reduces the feature space by collapsing related terms. A spam filter does not need to treat "discount," "discounted," and "discounting" as three separate features. Mapping them all to "discount" simplifies the model, reduces overfitting, and can improve classification accuracy when training data is limited.

Sentiment analysis systems benefit similarly. Reducing "frustrated," "frustrating," and "frustration" to a common stem helps the model learn a single association between that stem and negative sentiment rather than learning separate weights for each variant. This is especially valuable in machine learning pipelines that rely on bag-of-words or TF-IDF representations.
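The reduction in feature space is easy to see in a bag-of-words count. The snippet below is a sketch with a placeholder `toy_stem`; the suffix list is an assumption chosen so the three variants above collapse.

```python
from collections import Counter


def toy_stem(token: str) -> str:
    """Placeholder stemmer: strips a few suffixes if at least 3 letters remain."""
    for suffix in ("ion", "ing", "ed"):
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)]
    return token


def bag_of_words(tokens, stem=None) -> Counter:
    """Count token occurrences, optionally stemming first."""
    if stem is not None:
        tokens = [stem(t) for t in tokens]
    return Counter(tokens)


TOKENS = ["frustrated", "frustrating", "frustration", "frustrated"]
raw = bag_of_words(TOKENS)
stemmed = bag_of_words(TOKENS, stem=toy_stem)
print(len(raw), "features without stemming")
print(len(stemmed), "feature with stemming:", dict(stemmed))
```

A classifier trained on the stemmed counts has one weight to learn for this word family instead of three.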

Document Clustering and Topic Modeling

Stemming improves document clustering by ensuring that morphological variants do not create artificial distinctions between documents. Two articles discussing "innovation" and "innovating" should cluster together, and stemming ensures they share the term "innov" in their feature vectors.

Topic modeling algorithms such as Latent Dirichlet Allocation (LDA) also benefit from stemmed input. By reducing vocabulary size, stemming helps these models converge faster and produce more coherent topics. The resulting topic labels are more interpretable when related word forms map to the same stem.

Keyword Extraction and SEO

Content analysis tools use stemming to group keyword variants. An SEO tool analyzing a webpage might count "optimize," "optimizing," "optimization," and "optimized" as instances of the same keyword concept. This gives a more accurate picture of keyword density and topical focus than counting each variant separately.

Preprocessing for Neural Models

While modern neural network architectures like BERT use subword tokenization and do not strictly require stemming, some hybrid pipelines still apply stemming as a preprocessing step. In low-resource settings with limited training data, stemming can reduce vocabulary sparsity and improve the performance of simpler models that feed into or complement neural systems.

Challenges and Limitations

Stemming is useful precisely because it is simple, but that simplicity introduces predictable problems. Understanding these limitations helps practitioners decide when stemming is appropriate and when more sophisticated alternatives are needed.

Over-Stemming

Over-stemming occurs when the algorithm strips too much from a word, collapsing unrelated words into the same stem. The classic example is "university" and "universe," which some stemmers reduce to the common stem "univers." These words share a Latin root but have entirely different meanings in modern usage. Similarly, "organ," "organization," and "organic" might all map to "organ," creating false equivalences that degrade search precision and classification accuracy.

Over-stemming is the most common complaint against aggressive stemmers like Lancaster. It reduces precision because the system retrieves or matches documents that are not actually relevant to the user's intent.

Under-Stemming

Under-stemming is the opposite problem. It occurs when the algorithm fails to reduce related words to the same stem. "Alumnus" and "alumni" might not be stemmed to a common root because the morphological change involves a Latin plural rather than a standard English suffix. Irregular forms like "went" and "go" are another common failure case.

Under-stemming reduces recall because the system misses documents or features that are semantically related to the query. In practice, most stemmers exhibit both over-stemming and under-stemming, depending on the specific words involved.
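One rough way to quantify both error types is to compare a stemmer's output against hand-labeled groups of related words. The sketch below counts word pairs that are wrongly merged or wrongly kept apart; the groups, the `toy_stem` rules, and the function name are all illustrative assumptions.

```python
from itertools import combinations


def toy_stem(word: str) -> str:
    """Placeholder stemmer: strips a few suffixes if at least 3 letters remain."""
    for suffix in ("ity", "e", "s"):
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word


def stemming_errors(groups, stem):
    """Return (over, under): pairs merged across groups, pairs split within one."""
    label = {word: i for i, group in enumerate(groups) for word in group}
    over = under = 0
    for a, b in combinations(sorted(label), 2):
        same_concept = label[a] == label[b]
        same_stem = stem(a) == stem(b)
        if same_stem and not same_concept:
            over += 1
        elif same_concept and not same_stem:
            under += 1
    return over, under


# Hand-labeled groups: words in the same set are considered related.
GROUPS = [{"universe"}, {"university"}, {"alumnus", "alumni"}]
print(stemming_errors(GROUPS, toy_stem))
```

Here "universe" and "university" are merged (over-stemming) while "alumnus" and "alumni" stay apart (under-stemming), so the same stemmer shows both problems.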

Language Dependence

Stemming algorithms are inherently language-specific. Rules designed for English suffixes do not work for Arabic root-and-pattern morphology, Finnish agglutination, or Chinese, which has almost no inflectional affixes to strip. Building a stemmer for a new language requires understanding that language's morphological system and encoding its rules.

The Snowball framework addresses this to some extent by providing stemmers for many European languages, but coverage remains uneven. Morphologically rich languages like Turkish, Hungarian, and Finnish are especially challenging because a single word root can take dozens of suffixes that modify meaning, tense, case, and number.

Developing robust text preprocessing for these languages is an ongoing challenge in computational linguistics.

Loss of Semantic Nuance

Stemming discards morphological information that sometimes carries meaning. The difference between "teacher" (a person) and "teaching" (an activity) is meaningful in many contexts, but an aggressive stemmer collapses both to "teach." In tasks that require fine-grained semantic distinctions, such as natural language understanding or relation extraction, this loss of information can hurt performance.

Interaction with Modern Architectures

The relevance of stemming has diminished somewhat with the rise of contextual language models. Models like BERT and GPT use subword tokenization schemes (WordPiece, Byte Pair Encoding) that can capture morphological patterns implicitly. Combined with contextual training, this lets the models relate "running" and "runner" without needing an explicit stemming step.

In pipelines built entirely around transformer models, adding stemming as a preprocessing step can actually remove useful information and degrade performance.

This does not make stemming obsolete. It means practitioners need to match their text normalization strategy to their model architecture. Classical bag-of-words and TF-IDF pipelines benefit from stemming. Neural pipelines with learned embeddings typically do not.

FAQ

What is the difference between stemming and tokenization?

Tokenization splits a text into individual units, typically words or subwords. Stemming reduces those tokens to their root form. Tokenization happens first in the preprocessing pipeline. For instance, the sentence "The runners are running" is first tokenized into ["The", "runners", "are", "running"]. Stemming then reduces each token to its stem, and most implementations lowercase along the way: ["the", "runner", "are", "run"]. Tokenization addresses segmentation; stemming addresses normalization.

Which stemming algorithm should I use?

For most English NLP tasks, the Snowball Stemmer (Porter2) provides the best balance of accuracy and speed. It produces cleaner stems than the original Porter Stemmer and is less aggressive than the Lancaster Stemmer. If you are working with a non-English language, check whether a Snowball implementation exists for that language. For exploratory analysis where recall matters more than precision, the Lancaster Stemmer is a reasonable choice.

Does stemming improve search engine accuracy?

Stemming generally improves recall by matching more relevant documents to a query. However, it can reduce precision by matching documents that share a stem but not a meaning. The net effect depends on the domain and the specificity of user queries.

Most production search engines use stemming as one component in a multi-layered ranking system that also includes semantic search, synonym expansion, and relevance scoring to balance precision and recall.

Is stemming still relevant with modern AI models?

Stemming remains relevant for specific use cases, particularly in classical NLP pipelines, information retrieval systems, and resource-constrained environments. However, modern artificial intelligence models that use subword tokenization and contextual embeddings handle morphological variation internally, reducing the need for explicit stemming.

The choice depends on the system architecture, the available computational resources, and the specific task requirements.

Can stemming handle all languages equally well?

No. Stemming algorithms perform best on languages with relatively simple and regular morphology, such as English. Languages with rich inflectional systems, extensive compounding, or non-concatenative morphology (where meaning is encoded through internal vowel changes rather than affixes) present significant challenges.

Arabic, Finnish, Turkish, and German each require specialized stemming rules, and even well-designed stemmers for these languages produce more errors than their English counterparts. For multilingual applications, lemmatization or subword-based approaches often perform more reliably.

How do I implement stemming in Python?

The NLTK library provides implementations of the Porter, Snowball, and Lancaster stemmers. The typical workflow is: import the stemmer class, instantiate it, and call the stem method on each word. For example, using NLTK's SnowballStemmer for English, the word "processing" returns "process" and "connections" returns "connect." The spaCy library does not include a built-in stemmer (it focuses on lemmatization), but stemming can be added as a custom pipeline component.
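The instantiate-then-call workflow can be sketched without committing to a particular library. In the snippet below, `SuffixStemmer` is a stand-in written for this example, and `stem_all` accepts any object that exposes a `stem(word)` method.

```python
from typing import Iterable, List, Protocol


class Stemmer(Protocol):
    """Anything with a stem(word) method can be used by stem_all."""

    def stem(self, word: str) -> str: ...


class SuffixStemmer:
    """Stand-in stemmer for the example; not a real library class."""

    SUFFIXES = ("ions", "ing", "ion", "s")

    def stem(self, word: str) -> str:
        word = word.lower()
        for suffix in self.SUFFIXES:
            if word.endswith(suffix) and len(word) - len(suffix) >= 3:
                return word[: -len(suffix)]
        return word


def stem_all(words: Iterable[str], stemmer: Stemmer) -> List[str]:
    """Call the stemmer on each word, in order."""
    return [stemmer.stem(w) for w in words]


stemmer = SuffixStemmer()  # instantiate once, reuse for every word
print(stem_all(["processing", "connections"], stemmer))
```

With NLTK installed, an instance such as `SnowballStemmer("english")` from `nltk.stem` exposes the same `stem` method and could be passed in place of the stand-in.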

For production systems, consider the PyStemmer library, which provides Snowball stemmers implemented in C for faster execution.
