What Is REALM?

REALM (Retrieval-Augmented Language Model) is a language modeling framework that augments a pre-trained language model with a learned retrieval component. Instead of relying solely on knowledge stored in its parameters, REALM retrieves relevant documents from a large corpus during pre-training, fine-tuning, and inference, and uses them as additional context when making predictions.

Developed by researchers at Google AI, REALM was introduced to address a core limitation of standard masked language models. Models like BERT compress all world knowledge into fixed model parameters during pre-training. This forces the model to memorize facts, which becomes increasingly difficult as the scope of knowledge grows.

REALM takes a different approach by treating knowledge as something to be looked up rather than stored.

The framework works by jointly training two components: a neural retriever that selects relevant documents from a corpus, and a knowledge-augmented encoder that reads both the input query and the retrieved documents to produce predictions. Both components are trained end-to-end using backpropagation, allowing the retriever to learn which documents are most useful for the downstream task.

REALM demonstrated significant improvements on knowledge-intensive benchmarks, particularly open-domain question answering, where it outperformed models with far more parameters.

How REALM Works

REALM's architecture consists of two tightly coupled modules that operate as a single differentiable system. Understanding each module and their interaction is essential to grasping why the framework performs well on knowledge-heavy natural language processing tasks.

The Neural Knowledge Retriever

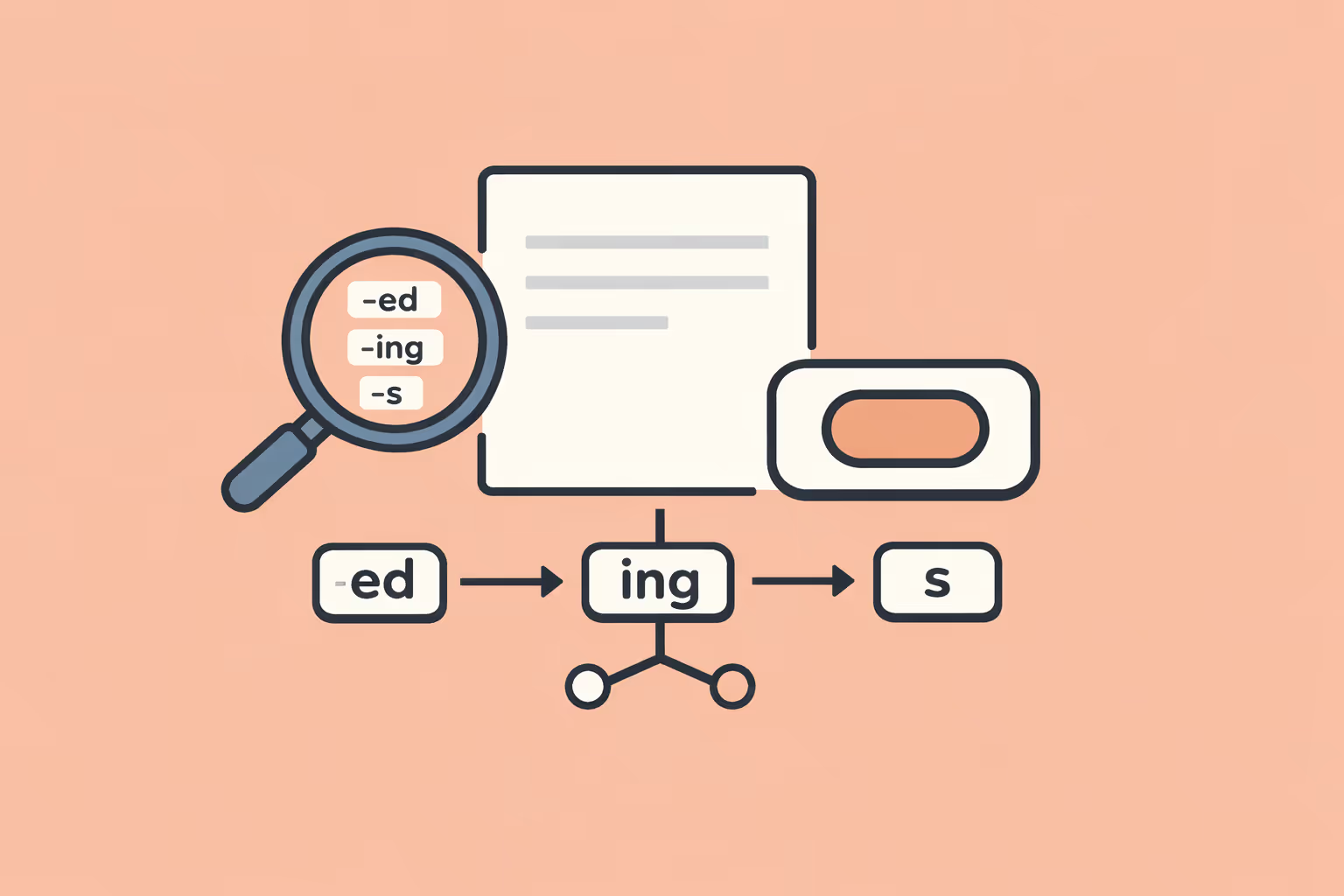

The retriever is a neural network that takes an input (such as a sentence with a masked token or a question) and scores every document in a large text corpus based on relevance. It encodes the input and each document into dense vector embeddings, then computes relevance using the inner product of these vectors.

The retriever uses a dual-encoder architecture built on top of BERT. One encoder processes the input query and the other processes candidate documents. Both produce fixed-length vector representations. The inner product between the query vector and each document vector determines a relevance score. The top-scoring documents are selected and passed to the knowledge-augmented encoder.
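The scoring and selection step described above can be sketched in a few lines. This is a minimal illustration, not REALM's implementation: the toy three-dimensional vectors stand in for the outputs of the two BERT-based encoders, and the function names `inner_product` and `top_k_documents` are hypothetical.

```python
import heapq

def inner_product(u, v):
    """Relevance score: inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

def top_k_documents(query_vec, doc_vecs, k):
    """Return (doc_index, score) pairs for the k highest-scoring documents."""
    scores = [(i, inner_product(query_vec, d)) for i, d in enumerate(doc_vecs)]
    return heapq.nlargest(k, scores, key=lambda pair: pair[1])

# Toy embeddings (assumed values) standing in for encoder outputs.
query_vec = [1.0, 0.0, 2.0]
doc_vecs = [
    [0.5, 0.1, 0.0],  # score 0.5
    [1.0, 0.0, 1.0],  # score 3.0
    [0.0, 1.0, 0.5],  # score 1.0
    [2.0, 0.0, 2.0],  # score 6.0
]

top = top_k_documents(query_vec, doc_vecs, k=2)
print(top)  # [(3, 6.0), (1, 3.0)]
```

A brute-force scan like this is only workable for a toy corpus. Over millions of documents, the same inner-product search is typically served by an approximate nearest neighbor index.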

To make retrieval over millions of documents tractable, REALM pre-computes document embeddings for the entire corpus and stores them in an index. During training, this index must be periodically refreshed because the document encoder's parameters change as the model learns. The authors addressed this with asynchronous index updates, refreshing the embeddings every few hundred training steps.
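The staleness problem can be illustrated with a toy loop. This is a sketch under simplifying assumptions: `encode_document` is a hypothetical one-weight stand-in for the document encoder, `REFRESH_EVERY` is an assumed interval, and the refresh runs synchronously inside the loop, whereas the paper describes an asynchronous process.

```python
def encode_document(doc_features, weight):
    """Hypothetical stand-in for the document encoder: scales features by one weight."""
    return [weight * x for x in doc_features]

class DocumentIndex:
    """Holds document embeddings computed with a snapshot of the encoder weight."""

    def __init__(self, corpus, weight):
        self.corpus = corpus
        self.refresh(weight, step=0)

    def refresh(self, weight, step):
        # Re-encode every document; on a real corpus this is the expensive part.
        self.embeddings = [encode_document(doc, weight) for doc in self.corpus]
        self.built_at_step = step

corpus = [[1.0, 0.0], [0.0, 1.0]]
weight = 1.0
index = DocumentIndex(corpus, weight)
REFRESH_EVERY = 100  # assumed refresh interval, in training steps

refresh_steps = []
for step in range(1, 301):
    weight += 0.01  # pretend gradient update to the document encoder
    if step % REFRESH_EVERY == 0:
        index.refresh(weight, step)
        refresh_steps.append(step)

print(refresh_steps)        # [100, 200, 300]
print(index.built_at_step)  # 300
```

Between refreshes, the index holds embeddings produced by an older copy of the encoder, which is the lag the asynchronous scheme accepts in exchange for tractable training.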

The Knowledge-Augmented Encoder

The second component is a transformer model that takes the input query concatenated with a retrieved document and produces a prediction. For masked language modeling, this means predicting the identity of masked tokens. For question answering, it means extracting an answer span from the retrieved document.

The encoder processes each retrieved document independently, producing a separate prediction for each query-document pair. REALM then marginalizes over all retrieved documents, combining predictions weighted by the retriever's relevance scores. This marginalization step is critical because it makes the entire pipeline differentiable.
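As a rough numerical sketch of the marginalization, assume the retriever's scores are turned into a distribution over the retrieved documents with a softmax, and that the encoder has already produced a probability for the correct answer given each document. The scores and probabilities below are made-up values, and the function names are hypothetical.

```python
import math

def softmax(scores):
    """Convert retrieval scores into a probability distribution p(z | x)."""
    largest = max(scores)
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def marginal_likelihood(retrieval_scores, reader_probs):
    """p(y | x) = sum over retrieved z of p(y | z, x) * p(z | x)."""
    p_z = softmax(retrieval_scores)
    return sum(pz * py for pz, py in zip(p_z, reader_probs))

# Assumed values for three retrieved documents.
retrieval_scores = [2.0, 1.0, 0.0]   # inner-product relevance scores
reader_probs = [0.9, 0.2, 0.05]      # p(correct answer | document, input)

p_y = marginal_likelihood(retrieval_scores, reader_probs)
print(round(p_y, 3))  # 0.652
```

Because the sum runs only over the top-scoring documents, it approximates the full marginal over the corpus. The approximation is reasonable when most documents receive near-zero retrieval probability.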

Gradients flow back through the prediction scores, through the marginalization, and into the retriever, allowing the retriever to learn which documents improve prediction accuracy.
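The gradient path can be written out explicitly. The notation here is chosen for illustration: $x$ is the input, $y$ the target, $z$ a retrieved document, $\mathcal{Z}$ the set of retrieved documents, and $f(x, z)$ the inner-product relevance score, with the retrieval distribution assumed to be a softmax over those scores.

```latex
\[
p(y \mid x) = \sum_{z \in \mathcal{Z}} p(y \mid z, x)\, p(z \mid x),
\qquad
p(z \mid x) = \frac{\exp f(x, z)}{\sum_{z' \in \mathcal{Z}} \exp f(x, z')}
\]

\[
\nabla \log p(y \mid x) = \sum_{z \in \mathcal{Z}} r(z)\, \nabla f(x, z),
\qquad
r(z) = \left[ \frac{p(y \mid z, x)}{p(y \mid x)} - 1 \right] p(z \mid x)
\]
```

Here the gradient is taken with respect to the retriever's parameters. The weight $r(z)$ is positive exactly when document $z$ makes the correct prediction more likely than the average over retrieved documents, so a gradient step raises the scores of helpful documents and lowers the scores of unhelpful ones.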

End-to-End Pre-Training

REALM pre-trains both the retriever and encoder jointly using a masked language modeling objective. The process works as follows. A sentence is sampled from the training corpus and tokens are masked. The retriever selects the most relevant documents from a knowledge corpus (such as Wikipedia). The encoder reads each query-document pair and predicts the masked tokens. The loss signal from prediction accuracy propagates back through both the encoder and the retriever.
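The first step, masking, can be sketched as follows. This is a simplification: it masks each token independently with a fixed probability, whereas the REALM paper uses salient span masking, which targets spans such as named entities and dates. The function name `mask_tokens` and the `mask_prob` value are assumptions for illustration.

```python
import random

MASK = "[MASK]"

def mask_tokens(tokens, mask_prob, rng):
    """Replace each token with [MASK] with probability mask_prob.

    Returns the masked sequence and a dict mapping masked positions
    to the original tokens the model must predict.
    """
    masked, targets = [], {}
    for position, token in enumerate(tokens):
        if rng.random() < mask_prob:
            masked.append(MASK)
            targets[position] = token
        else:
            masked.append(token)
    return masked, targets

rng = random.Random(0)  # fixed seed so the example is repeatable
sentence = "the pound is the currency of the united kingdom".split()
masked, targets = mask_tokens(sentence, mask_prob=0.15, rng=rng)
print(masked)
print(targets)
```

The masked sequence serves as the retrieval query, and the `targets` dictionary holds the tokens whose negative log-likelihood, marginalized over the retrieved documents, forms the training loss.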

This end-to-end training is what separates REALM from simpler retrieve-then-read pipelines. In a standard pipeline, the retriever and reader are trained separately, which means the retriever has no direct signal about what information the reader actually needs. In REALM, the retriever learns to fetch documents that specifically help the encoder make correct predictions.

This tight coupling means retrieval is optimized for the same objective as prediction, rather than for a separate relevance heuristic.

| Component | Function | Key Detail |
|---|---|---|
| Neural Knowledge Retriever | Encodes the input and each document into dense vectors and scores documents by inner product | Dual-encoder architecture built on BERT; document embeddings are pre-computed and stored in an index |
| Knowledge-Augmented Encoder | Reads the input concatenated with a retrieved document and produces a prediction | Processes each retrieved document independently, then marginalizes over documents |
| End-to-End Pre-Training | Trains the retriever and encoder jointly with a masked language modeling objective | The loss propagates back through both the encoder and the retriever; the knowledge corpus can be a source such as Wikipedia |

REALM vs RAG

REALM and retrieval-augmented generation (RAG) both combine retrieval with language models, but they differ in design goals, training methodology, and output capabilities. Understanding the distinction helps practitioners choose the right architecture for a given task.

Training Approach

REALM trains the retriever and encoder jointly from the start. The retriever receives gradient signals from the language modeling loss, which means it learns to retrieve documents that are specifically useful for making predictions. This end-to-end training makes the retriever task-aware.

RAG, introduced by Facebook AI Research, pairs a dense passage retriever (DPR) with a sequence-to-sequence generator (typically BART). The retriever is initialized from a separately pre-trained DPR model rather than being learned during language model pre-training. During fine-tuning, the query encoder and generator are trained jointly, while the document encoder and its index are kept fixed.

Output Type

REALM uses a BERT-style encoder that excels at extractive tasks. It identifies answer spans within retrieved documents or predicts masked tokens. It does not generate free-form text.

RAG uses a generative AI decoder that produces entirely new sequences. It can synthesize information from multiple retrieved documents into a coherent, novel response. This makes RAG suitable for summarization, open-ended question answering, and dialogue systems.

Architecture

REALM is built on an encoder-only transformer. It marginalizes over retrieved documents to produce token-level predictions. The architecture is optimized for tasks where the answer exists somewhere in the retrieved text.

RAG uses an encoder-decoder architecture. The encoder processes retrieved passages and the decoder generates output tokens autoregressively. RAG supports two modes: RAG-Sequence (generates the full output conditioned on a single document, then marginalizes) and RAG-Token (marginalizes over documents at each generation step).
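The difference between the two modes is where the sum over documents sits relative to the product over output tokens. The sketch below uses made-up probabilities for a fixed two-token target and two retrieved documents; the function names are hypothetical, and `token_probs[d][i]` is assumed to hold the generator's probability of target token `i` given document `d`.

```python
import math

def rag_sequence_prob(doc_probs, token_probs):
    """Sum over documents of p(z | x) times the product of per-token probabilities."""
    return sum(pz * math.prod(tp) for pz, tp in zip(doc_probs, token_probs))

def rag_token_prob(doc_probs, token_probs):
    """Product over positions of the document-weighted per-token probability."""
    n_tokens = len(token_probs[0])
    return math.prod(
        sum(pz * tp[i] for pz, tp in zip(doc_probs, token_probs))
        for i in range(n_tokens)
    )

# Assumed values: each document supports a different token of the target.
doc_probs = [0.5, 0.5]
token_probs = [
    [0.9, 0.1],  # document 0: confident on token 0, not on token 1
    [0.1, 0.9],  # document 1: confident on token 1, not on token 0
]

print(round(rag_sequence_prob(doc_probs, token_probs), 2))  # 0.09
print(round(rag_token_prob(doc_probs, token_probs), 2))     # 0.25
```

In this contrived case RAG-Token assigns the target a higher probability because it can draw on a different document for each token, while RAG-Sequence must commit to one document for the whole output before marginalizing.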

When to Use Each

REALM is the stronger choice when accuracy on extractive knowledge tasks is the priority, particularly open-domain question answering where answers are factual and concise. Its pre-training approach produces a retriever that is deeply aligned with the encoder's needs.

RAG is the better fit when the application requires generating natural language responses, combining information from multiple sources, or producing outputs that go beyond direct extraction. Most modern production systems that implement semantic search with generation use RAG-style architectures because of their flexibility.

REALM Use Cases

Open-Domain Question Answering

REALM's primary benchmark was open-domain question answering, where a system must answer factual questions using a large, unstructured text corpus rather than a pre-defined context paragraph. On the Natural Questions benchmark, REALM significantly outperformed previous systems, including much larger models such as the 11-billion-parameter T5, which is roughly 30 times REALM's size.

The strength of REALM in this setting comes from its ability to retrieve precisely the right evidence. Rather than trying to recall facts from compressed parameters, it retrieves the Wikipedia passage most likely to contain the answer and extracts the relevant span. This approach scales better with corpus size because adding more documents to the index does not require retraining the model.

Knowledge-Intensive Language Tasks

Any task where the model needs access to factual information benefits from REALM's retrieval mechanism. Fact verification, for example, requires checking a claim against a knowledge source. REALM can retrieve relevant evidence passages and use them to determine whether a claim is supported, refuted, or lacks sufficient information.

Entity linking, where mentions in text are connected to entries in a knowledge graph or database, also benefits. REALM can retrieve the relevant knowledge base entry for an ambiguous mention and use the full context to resolve the correct entity. This is especially valuable in domains like biomedical literature, where entity names are highly ambiguous.

Improving Pre-Training with External Knowledge

REALM demonstrated that retrieval-augmented pre-training produces language representations that encode world knowledge more effectively than standard masked language modeling. This insight has applications beyond question answering.

Models pre-trained with retrieval augmentation perform better on tasks that require factual reasoning, such as relation extraction (identifying relationships between entities in text) and slot filling (extracting specific attributes of entities).

For teams building artificial intelligence applications that depend on factual accuracy, retrieval-augmented pre-training offers a path to better performance without scaling model size.

Enterprise Search and Knowledge Management

While REALM was designed as a research system, its core principle of learned retrieval for knowledge access maps directly to enterprise applications. Organizations with large document repositories can use REALM-style architectures to build search systems where the retriever is trained to surface the most task-relevant documents rather than relying on keyword matching or generic embeddings.

This is particularly valuable in regulated industries like healthcare, finance, and legal services, where answers must be traceable to specific source documents. REALM's extractive approach provides built-in provenance: the system points to the exact passage that supports its answer.

Challenges and Limitations

Computational Overhead of Index Maintenance

REALM requires a pre-computed index of document embeddings for the entire knowledge corpus. During training, the document encoder's parameters change with each update, which means the index becomes stale. Refreshing the index requires re-encoding every document in the corpus, which is computationally expensive.

The original REALM paper addressed this with asynchronous index refreshes every few hundred steps. This is a practical workaround but introduces a lag between the model's current state and the index it retrieves from. For very large corpora containing millions of documents, the cost of index maintenance can become a significant bottleneck. Teams without access to substantial GPU or TPU clusters may find this requirement prohibitive.

Retriever Bottleneck

The quality of REALM's predictions depends heavily on the retriever. If the retriever fails to surface the correct document, the encoder has no way to produce the right answer. This creates a hard ceiling on system performance tied to retrieval accuracy.

Improving retrieval quality often requires better document representations, which means larger or more sophisticated encoder models for the document side. This creates a tension between retrieval quality and computational cost. In practice, retrieval errors account for a significant portion of REALM's failures on benchmarks.

Limited to Extractive Tasks

REALM is fundamentally an extractive system. It identifies spans within retrieved documents rather than generating novel text. This limits its applicability in scenarios that require synthesis, summarization, or creative output. For machine learning teams building applications that need to combine information from multiple sources into a coherent response, generative retrieval-augmented approaches like RAG are more appropriate.

Corpus Dependency

REALM's performance is tied to the quality and coverage of the knowledge corpus it retrieves from. If the answer to a question does not exist in the corpus, the system cannot produce it regardless of how good the retriever and encoder are. Keeping the corpus current requires ongoing data curation, and domain-specific applications may need carefully constructed corpora that cover the relevant knowledge space.

Scalability of Pre-Training

End-to-end pre-training with retrieval is substantially more expensive than standard masked language model pre-training. Each training step involves retrieving documents, which requires a forward pass through the retriever and an index lookup, in addition to the encoder forward and backward passes. This overhead limits the scale at which REALM-style pre-training is practical.

Fine-tuning on top of REALM pre-training adds further computational cost, because the fine-tuned model still retrieves from the index at every step.

How to Get Started with REALM

Understand the Prerequisites

Working with REALM requires a solid foundation in several areas. You should be comfortable with transformer model architectures, particularly BERT-style encoders. Familiarity with masked language models and their training objectives is essential.

You should also understand dense retrieval concepts, including how vector similarity search works with vector embeddings.

Key prerequisites include:

- Working knowledge of Python and PyTorch or TensorFlow

- Understanding of BERT architecture and masked language modeling

- Familiarity with dense passage retrieval and approximate nearest neighbor search

- Access to GPU or TPU compute resources for training and index construction

Explore the Original Implementation

Google Research released the original REALM code as part of the google-research/language repository on GitHub. The implementation is built on TensorFlow 1.x and includes scripts for pre-training, index building, and evaluation on the Natural Questions benchmark. Reviewing this codebase provides a concrete understanding of how the retriever, index, and encoder interact during training and inference.

The original REALM paper, "REALM: Retrieval-Augmented Language Model Pre-Training" by Guu et al. (2020), is the authoritative reference. It details the mathematical formulation, training procedure, and experimental results. Reading this paper is the best starting point for understanding the design decisions behind the framework.

Start with a Simplified Pipeline

Before attempting full REALM pre-training, build a simplified retrieve-then-read pipeline to understand the core mechanics. Use a pre-trained dense retriever (such as DPR from Facebook AI) paired with a pre-trained BERT reader. Evaluate this pipeline on an open-domain QA dataset like Natural Questions or TriviaQA. This gives you a working baseline and practical experience with the retrieval and reading components.

Once the pipeline works, experiment with joint fine-tuning of the retriever and reader. This intermediate step bridges the gap between a simple pipeline and full end-to-end REALM pre-training.

Leverage Modern Frameworks

Several open-source frameworks have implemented REALM-style retrieval-augmented models with improved tooling. Hugging Face Transformers includes RAG implementations that share architectural principles with REALM. ColBERT and DRAGON provide advanced dense retrieval models that can serve as the retriever component. FAISS (Facebook AI Similarity Search) is the standard library for building and querying the vector index.

For teams focused on production deployment rather than research, starting with a RAG framework and adapting it toward REALM-style end-to-end training is often more practical than reimplementing REALM from scratch.

Evaluate and Iterate

Use established benchmarks to measure your system's performance. Natural Questions, TriviaQA, and WebQuestions are standard evaluation sets for open-domain QA. KILT (Knowledge Intensive Language Tasks) provides a broader evaluation suite covering fact checking, entity linking, slot filling, and dialogue.

Track both end-to-end accuracy and retrieval recall separately. If end-to-end performance plateaus, diagnosing whether the bottleneck is retrieval or reading tells you where to invest further effort. This diagnostic approach aligns with how deep learning practitioners systematically improve complex systems.
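A minimal sketch of tracking the two metrics separately is shown below. The function names, the toy data, and the simplified normalization (lowercasing and whitespace collapsing only) are assumptions for illustration; standard evaluation scripts typically also strip punctuation and articles.

```python
def retrieval_recall_at_k(retrieved_docs, answers, k):
    """Fraction of questions whose top-k retrieved texts contain a gold answer string."""
    hits = 0
    for docs, gold in zip(retrieved_docs, answers):
        top_k = docs[:k]
        if any(ans.lower() in doc.lower() for doc in top_k for ans in gold):
            hits += 1
    return hits / len(answers)

def exact_match(predictions, answers):
    """Fraction of predictions equal to a gold answer after simple normalization."""
    def norm(text):
        return " ".join(text.lower().split())
    correct = sum(
        any(norm(pred) == norm(ans) for ans in gold)
        for pred, gold in zip(predictions, answers)
    )
    return correct / len(answers)

# Toy data: retrieved passages (ranked), gold answers, and reader predictions.
retrieved_docs = [
    ["Paris is the capital of France.", "Lyon is a city in France."],
    ["The Nile flows through Egypt.", "The Amazon is in South America."],
    ["Mount Everest is in the Himalayas.", "K2 is the second-highest peak."],
]
answers = [["Paris"], ["Amazon"], ["Mont Blanc"]]
predictions = ["paris", "Nile", "K2"]

recall_at_2 = retrieval_recall_at_k(retrieved_docs, answers, k=2)
em = exact_match(predictions, answers)
print(round(recall_at_2, 2), round(em, 2))  # 0.67 0.33
```

In this toy run, the second question is a reading error (the answer was retrieved but not selected) and the third is a retrieval error (the answer never reached the reader), which is the distinction the separate metrics are meant to expose.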

FAQ

What does REALM stand for?

REALM stands for Retrieval-Augmented Language Model. It refers to a framework developed by Google Research that integrates a neural document retriever with a language modeling encoder. The retriever fetches relevant documents from a knowledge corpus, and the encoder uses those documents as additional context when making predictions.

How is REALM different from a standard language model?

A standard language model like BERT stores all knowledge in its parameters during pre-training. REALM supplements this parametric knowledge with non-parametric knowledge accessed through retrieval. This means REALM can consult external documents at inference time rather than relying entirely on what was compressed into its weights during training. The result is better performance on tasks that require factual knowledge.

Can REALM generate text?

REALM is not a generative model. It uses a BERT-style encoder that excels at extractive tasks, such as identifying answer spans within documents or predicting masked tokens. For applications that require text generation, retrieval-augmented generation (RAG) combines retrieval with a generative decoder and is the more appropriate choice.

Is REALM still used in practice?

REALM itself is primarily a research framework, and direct deployment of the original REALM system is uncommon in production. However, its core innovations, particularly learned retrieval and end-to-end training of retriever-reader systems, have been widely adopted. Modern retrieval-augmented systems, including RAG and systems built with tools like ColBERT, owe significant architectural debt to REALM.

The principles REALM introduced remain central to how natural language processing systems handle knowledge-intensive tasks.

What kind of hardware do I need to run REALM?

REALM pre-training requires significant compute resources, including multiple GPUs or TPUs for training and sufficient memory to store and periodically refresh the document index. For a corpus the size of Wikipedia (approximately 13 million passages), the index itself requires substantial storage. Inference is less demanding but still requires GPU acceleration for real-time performance due to the retrieval and encoding steps.
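A back-of-the-envelope estimate of index storage follows from passage count, embedding dimension, and bytes per value. The dimensions below are assumptions for illustration: 768 matches the BERT-base hidden size, and 128 represents a smaller projected embedding. The estimate covers uncompressed 32-bit floats only and ignores the passage text and any search-structure overhead.

```python
def index_size_gb(num_passages, embedding_dim, bytes_per_value=4):
    """Storage for a dense float index, in gigabytes (10**9 bytes)."""
    return num_passages * embedding_dim * bytes_per_value / 1e9

NUM_PASSAGES = 13_000_000  # approximate Wikipedia passage count cited above

print(index_size_gb(NUM_PASSAGES, 128))  # 6.656
print(index_size_gb(NUM_PASSAGES, 768))  # 39.936
```

The embedding dimension dominates the cost, which is one reason dense retrievers often project encoder outputs to a smaller vector before indexing.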

Conclusion

REALM introduced a fundamental insight into how language models should handle knowledge: rather than forcing a model to memorize every fact in its parameters, give it the ability to look up information when needed. By jointly training a neural retriever and a knowledge-augmented encoder, REALM demonstrated that retrieval-augmented pre-training produces models that are more factually accurate and more parameter-efficient on knowledge-intensive tasks.

The framework's influence extends well beyond its original implementation. The design pattern of learned retrieval paired with neural reading has become the foundation for modern retrieval-augmented systems across artificial intelligence. RAG, ColBERT-based pipelines, and production search systems all trace architectural lineage to the principles REALM established.

For practitioners working on knowledge-intensive natural language processing applications, REALM remains an important reference point. Its approach to treating knowledge as a retrievable resource rather than a memorized artifact continues to shape how the field builds systems that need to reason over large bodies of information.
