What Is Edge AI?

Edge AI refers to the deployment of artificial intelligence algorithms directly on local devices, such as sensors, cameras, smartphones, or embedded hardware, rather than relying on centralized cloud servers for computation. The model runs where the data is generated, enabling real-time inference without the need to transmit raw data to a remote data center.

In a traditional cloud AI architecture, data flows from a device to a server, the server processes the data, and the result is sent back. Edge AI eliminates the round trip. The device itself contains the processing power and the trained model needed to analyze inputs and produce outputs locally. This distinction matters because it directly affects response time, bandwidth consumption, data privacy, and system resilience.

The concept builds on two converging developments: the miniaturization of computing hardware capable of running machine learning models, and the growing volume of data generated by connected devices at the network periphery. When billions of devices produce data continuously, sending everything to the cloud becomes impractical. Edge AI addresses that bottleneck by distributing intelligence to the point of action.

How Edge AI Works

Understanding the mechanics of edge AI requires separating two phases: model training and model inference.

Training typically happens in the cloud or on high-performance computing clusters. Engineers prepare datasets, define model architectures, and iterate through training cycles that require significant computational resources. The result is a trained model, a set of mathematical parameters that encode the patterns the model learned from the data.

Inference is where edge AI operates. Once trained, the model is optimized and compressed to run on resource-constrained hardware. Techniques like quantization (reducing the precision of model weights), pruning (removing unnecessary connections), and knowledge distillation (training a smaller model to mimic a larger one) make it possible to deploy capable models on devices with limited memory and processing power.

The inference pipeline on an edge device follows a consistent pattern:

- Input capture: a sensor, camera, or microphone collects raw data

- Preprocessing: the data is normalized, resized, or filtered to match what the model expects

- Model execution: the preprocessed data passes through the neural network or algorithm on the local processor

- Output action: the result triggers a decision, alert, classification, or control signal

Specialized hardware accelerates this pipeline. Neural processing units (NPUs), tensor processing units (TPUs), and dedicated AI chips from companies like NVIDIA, Qualcomm, and Intel are designed to handle the matrix operations that neural networks require. These chips deliver high throughput per watt, a critical constraint for battery-powered or thermally limited devices.

Some edge AI systems use a hybrid approach. The device handles routine inference locally, but offloads complex or ambiguous cases to a cloud-based model for deeper analysis. This tiered architecture balances speed, accuracy, and cost.

Benefits of Edge AI

Edge AI solves specific problems that cloud-only architectures struggle with. Each benefit ties to a concrete operational outcome.

Reduced Latency

Cloud inference introduces network latency. Depending on server location, network congestion, and payload size, round-trip times can range from tens to hundreds of milliseconds. For applications where milliseconds matter, such as autonomous vehicle navigation, industrial safety shutoffs, or real-time anomaly detection, cloud latency is unacceptable.

Edge AI processes data on-device, producing results in single-digit milliseconds. The absence of network dependency makes the system more predictable and responsive.

Data Privacy and Sovereignty

When data stays on the device, it never traverses public networks or reaches third-party servers. This is significant for healthcare, finance, and government applications subject to strict data residency regulations. A medical imaging device that runs diagnostic inference locally does not need to send patient scans to an external cloud, simplifying compliance with privacy frameworks.

Edge AI does not eliminate privacy risk entirely. The device itself must be secured, and model updates require careful governance. But reducing data movement reduces the attack surface and the regulatory complexity.

Bandwidth Efficiency

Video cameras, industrial sensors, and IoT devices generate massive data volumes. A single high-resolution security camera can produce several gigabytes per day. Sending raw video streams from hundreds of cameras to the cloud for processing is expensive and impractical.

Edge AI processes data locally and transmits only the results, a fraction of the original data volume. A camera running object detection on-device sends metadata (object type, timestamp, location) instead of full video, reducing bandwidth requirements by orders of magnitude.

Reliability and Offline Operation

Cloud-dependent systems fail when the network fails. In remote industrial sites, ships, agricultural operations, or disaster response scenarios, connectivity is intermittent or unavailable. Edge AI systems continue to operate regardless of network status because the model and the computation reside on the device.

This independence makes edge AI suitable for mission-critical applications where downtime is not an option.

Cost Optimization

Cloud computing charges accumulate based on data transfer, storage, and compute time. For high-volume, continuous inference workloads, those costs scale quickly. Edge AI shifts computation to hardware that has already been purchased, reducing ongoing cloud expenditure. The economics favor edge deployment when inference demand is constant and predictable, while cloud bursting remains useful for peak loads or model retraining.

| Benefit | Description | Impact |

|---|---|---|

| Reduced Latency | Cloud inference introduces network latency. | Autonomous vehicle navigation, industrial safety shutoffs |

| Data Privacy and Sovereignty | When data stays on the device, it never traverses public networks or reaches third-party. | This is significant for healthcare, finance |

| Bandwidth Efficiency | Video cameras, industrial sensors, and IoT devices generate massive data volumes. | — |

| Reliability and Offline Operation | Cloud-dependent systems fail when the network fails. | In remote industrial sites, ships, agricultural operations |

| Cost Optimization | Cloud computing charges accumulate based on data transfer, storage, and compute time. | Peak loads or model retraining |

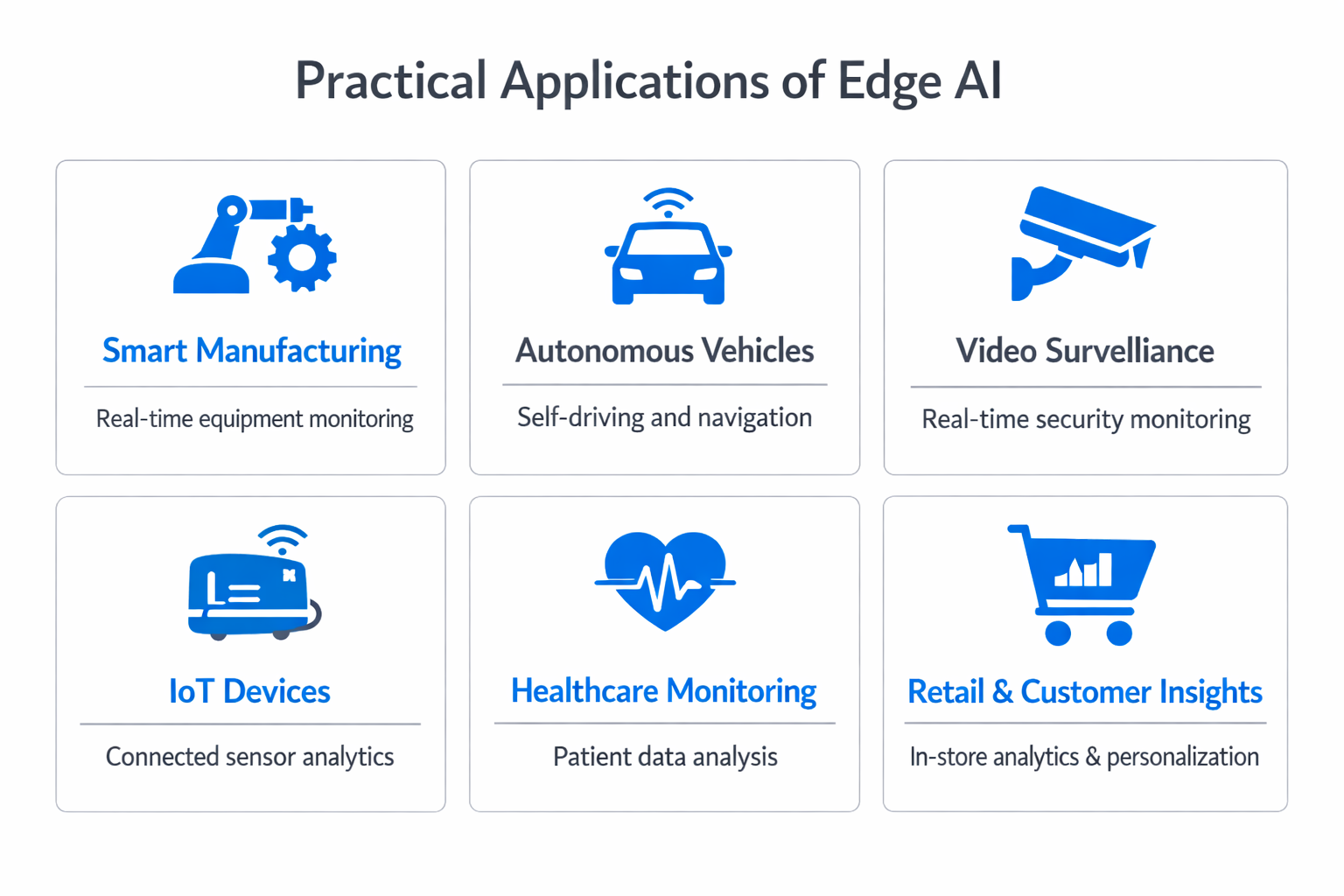

Edge AI Use Cases Across Industries

Edge AI is not a theoretical concept. It operates in production across sectors where real-time decisions, privacy, and reliability are non-negotiable.

Manufacturing and Industrial Automation

Factories deploy edge AI for visual quality inspection on production lines. Cameras with embedded models identify defects, measure tolerances, and flag anomalies faster than human inspectors and without fatigue. Predictive maintenance models analyze vibration, temperature, and acoustic data from machinery to detect early signs of failure before breakdowns occur.

The operational impact is reduced downtime and lower scrap rates. Because the models run on local hardware, the factory floor does not depend on external connectivity for real-time quality control.

Healthcare and Medical Devices

Edge AI enables portable diagnostic tools that operate in clinics, ambulances, and rural health settings without reliable internet. Wearable devices monitor cardiac rhythms, blood glucose levels, and respiratory patterns, running inference locally to detect abnormalities and alert patients or clinicians immediately.

In hospital settings, edge AI powers surgical robots that require sub-millisecond response times and cannot tolerate network interruptions. Medical imaging workstations with built-in AI assist radiologists by pre-screening scans for areas of concern, all without sending patient data outside the facility.

Autonomous Vehicles and Transportation

Self-driving vehicles are among the most demanding edge AI applications. A single autonomous vehicle generates terabytes of sensor data per day from cameras, lidar, radar, and ultrasonic sensors. Processing that data in the cloud and waiting for a response is not viable when braking decisions must happen within milliseconds.

Vehicle-mounted AI systems fuse inputs from multiple sensors, classify objects, predict trajectories, and plan safe paths in real time. The entire perception-to-action pipeline runs on embedded hardware inside the vehicle.

Retail and Customer Experience

Retailers use edge AI for inventory management, theft detection, and customer analytics. In-store cameras with on-device models track shelf stock levels and trigger automatic reorder alerts. Self-checkout systems use local computer vision to identify products without barcode scanning.

Edge processing keeps customer behavior data within the store's infrastructure, avoiding the privacy concerns associated with transmitting video footage to external platforms.

Smart Cities and Infrastructure

Traffic management systems use edge AI at intersections to adjust signal timing based on real-time vehicle and pedestrian density. Surveillance networks process video feeds locally to detect incidents, manage crowd flow, and coordinate emergency response without overwhelming central servers.

Utility grids deploy edge intelligence at substations to balance loads, detect faults, and optimize energy distribution. The distributed nature of these systems demands processing at the point of measurement, not at a central location miles away.

Challenges and Limitations of Edge AI

Edge AI introduces trade-offs that organizations must evaluate before committing to a deployment strategy.

Hardware Constraints

Edge devices have limited memory, processing power, and energy budgets compared to cloud servers. Not every model can run on every device. Large transformer models that perform well in the cloud may be too resource-intensive for embedded hardware, even after optimization. The gap between what a model can do in the cloud and what a compressed version of that model can do on a device is real and sometimes significant.

Choosing the right model architecture for the target hardware is a design decision that directly affects accuracy, speed, and power consumption.

Model Management at Scale

Deploying a model to one device is straightforward. Managing model versions, updates, and rollbacks across thousands or millions of heterogeneous devices in the field is complex. Organizations need robust over-the-air update mechanisms, monitoring systems, and rollback procedures.

If a model update introduces a regression, the issue may not be detected immediately across a distributed fleet. Model lifecycle management for edge deployments requires the same rigor as software release management for distributed systems.

Security Considerations

Edge devices are physically accessible, which creates attack vectors that do not exist in secured cloud data centers. An attacker with physical access to a device can extract model weights, reverse-engineer proprietary algorithms, or manipulate sensor inputs to produce false outputs.

Securing edge AI requires hardware-level protections (secure enclaves, encrypted model storage), tamper detection, and secure boot processes. These measures add cost and complexity.

Limited Model Capability

Edge models are typically smaller and less capable than their cloud counterparts. Tasks that require reasoning over large contexts, accessing extensive knowledge bases, or performing multi-step inference chains may exceed what an edge-optimized model can deliver. The accuracy-versus-efficiency trade-off is inherent to edge deployment.

Hybrid architectures can mitigate this limitation. The edge device handles most inferences locally and escalates complex cases to a more powerful cloud model when connectivity permits.

How to Evaluate Edge AI for Your Organization

Not every AI workload belongs at the edge. The decision depends on specific operational requirements, not on technology trends.

Start by mapping your inference workloads against four criteria:

- Latency sensitivity. If your application requires sub-100ms response times and cannot tolerate network variability, edge inference is likely necessary.

- Data sensitivity. If the data involved is regulated, personally identifiable, or competitively sensitive, local processing reduces exposure.

- Connectivity reliability. If your deployment locations have intermittent or no connectivity, edge AI provides the only viable path.

- Volume and cost. If continuous inference from many devices would generate unsustainable cloud costs or bandwidth demands, local processing shifts the economics.

If none of these conditions apply, cloud inference may be simpler, cheaper, and more maintainable. Edge AI is a deployment strategy, not an upgrade. It solves specific problems, and the decision should be driven by those problems rather than by the appeal of the technology itself.

For organizations building AI readiness assessments, evaluating edge versus cloud trade-offs should be an explicit step in the process. The infrastructure choice shapes everything downstream, from model selection to team skill requirements to operational governance.

FAQ

What is the difference between edge AI and cloud AI?

Cloud AI processes data on remote servers, typically in large data centers with significant computational resources. Edge AI processes data directly on local devices near the data source. The core difference is where inference happens. Cloud AI offers more powerful models and easier centralized management. Edge AI offers lower latency, reduced bandwidth, improved privacy, and the ability to operate without network connectivity. Many production systems combine both in a hybrid architecture.

What hardware is needed for edge AI?

Edge AI runs on a range of devices, from microcontrollers and single-board computers to dedicated AI accelerators and GPUs embedded in industrial equipment. Common hardware includes NVIDIA Jetson modules, Intel Movidius processors, Google Coral TPUs, and Qualcomm AI-capable chipsets. The right hardware depends on the model complexity, inference speed requirements, power budget, and environmental conditions of the deployment.

Can edge AI models be updated after deployment?

Yes, but updating models on edge devices requires over-the-air (OTA) update infrastructure, version control, and rollback capabilities. Organizations typically maintain a model registry, push updates to devices in staged rollouts, monitor performance metrics after deployment, and retain the ability to revert to a previous model version if issues arise. The process is more complex than updating a single cloud endpoint, especially across large fleets of heterogeneous devices.

Is edge AI suitable for small organizations?

Edge AI is accessible beyond large enterprises. Pre-trained models, model optimization frameworks like TensorFlow Lite and ONNX Runtime, and affordable edge hardware lower the barrier to entry. Small organizations with specific needs, such as a retail store using local computer vision for inventory or a clinic running on-device diagnostics, can deploy edge AI without building custom infrastructure.

The deciding factor is whether the use case genuinely requires local inference, not the size of the organization.

%201.svg)

.png)

%201.avif)

%201.avif)

%201.svg)